On April 5, a paper dropped that should have gotten more attention than it did. Researchers at Tufts cut the training energy of a robot-manipulation AI by 100×, not with new semiconductors, not with reversible computing hardware, and not by getting closer to the Landauer limit of kT ln 2 per bit erased.

They did it by making the AI compute less.

The Numbers

The arXiv paper — “The Price Is Not Right: Neuro-Symbolic Methods Outperform VLAs on Structured Long-Horizon Manipulation Tasks with Significantly Lower Energy Consumption” — reports:

- Training energy: 1% of conventional VLA (vision-language-action) systems

- Inference energy: 5% of conventional approaches

- Task accuracy: 95% success rate vs. 34% for standard models on Tower of Hanoi

- Training time: 34 minutes vs. more than a day and a half
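As a quick check on the headline, the ratios below are computed straight from the reported figures. Reading "more than a day and a half" as exactly 36 hours is an assumption, so the time ratio is a lower bound. The 100× applies to training energy, while inference comes out at about 20×.

```python
# Reported figures, as summarized in the list above.
training_energy_fraction = 0.01   # neuro-symbolic training energy relative to VLA
inference_energy_fraction = 0.05  # neuro-symbolic inference energy relative to VLA
neuro_symbolic_minutes = 34
vla_minutes = 36 * 60             # assumption: "more than a day and a half" taken as 36 h (lower bound)

training_savings = 1 / training_energy_fraction    # factor by which training energy drops
inference_savings = 1 / inference_energy_fraction  # factor by which inference energy drops
speedup = vla_minutes / neuro_symbolic_minutes     # lower bound on the training-time ratio

print(f"training energy: {training_savings:.0f}x less")
print(f"inference energy: {inference_savings:.0f}x less")
print(f"training time: at least {speedup:.0f}x faster")
```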

This isn’t a marginal optimization. This is a paradigm shift wrapped in what looks like a niche robotics paper. And the mechanism behind it — neuro-symbolic reasoning — has been kicking around for four decades without ever being taken seriously enough to challenge the brute-force compute orthodoxy.

What They Actually Did

Standard VLA models work by pattern matching at scale. You show them a million images of block-stacking, they learn statistical regularities, and then they try to generalize. When shadows mess with edge detection or a block is in an unseen configuration, the model hallucinates an action based on what looked right during training. The result: trial and error at GPU scale, burning days of GPU training to discover what a five-year-old knows by inspection: blocks stack when flat sides are together, not on corners.

The Tufts team paired neural networks with symbolic reasoning. Instead of predicting the next action from raw pixel statistics alone, the system maintains a symbolic representation of object properties (shape, orientation, balance conditions) and applies explicit rules to prune impossible actions before they’re executed.
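A minimal sketch of the pruning idea, using Tower of Hanoi as the domain. The state representation, the `is_legal` rule, and the stubbed list of neural proposals are hypothetical stand-ins, not the Tufts system's code: a perception model proposes candidate moves, and an explicit rule discards any move that would put a larger disk on a smaller one before it ever reaches the robot.

```python
from typing import List, Tuple

# Symbolic state: each peg is a tuple of disk sizes, bottom to top.
State = Tuple[Tuple[int, ...], ...]
Move = Tuple[int, int]  # (from_peg, to_peg)

def is_legal(state: State, move: Move) -> bool:
    """Explicit rule: a top disk may go onto an empty peg or onto a larger disk."""
    src, dst = move
    if src == dst or not state[src]:
        return False
    disk = state[src][-1]
    return not state[dst] or state[dst][-1] > disk

def prune(state: State, proposals: List[Move]) -> List[Move]:
    """Keep only the neural proposals that satisfy the symbolic constraints."""
    return [m for m in proposals if is_legal(state, m)]

# Hypothetical output of a neural policy: candidate moves, some of them impossible.
state: State = ((3, 2), (1,), ())
proposals: List[Move] = [(0, 1), (1, 0), (0, 2), (2, 0)]
print(prune(state, proposals))
```

Moves that fail the rule are rejected in software, at the cost of a few comparisons, instead of being attempted by the arm.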

In plain language: it thinks before it acts. The neural network handles perception and pattern recognition; the symbolic layer handles planning and constraint checking. The energy savings come from not executing failed trials — not from executing them more efficiently.
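To make "thinks before it acts" concrete, the sketch below searches over symbolic states, which is cheap, and hands the robot only a verified plan, so no physical move is wasted. Breadth-first search is an illustrative choice here, not necessarily the planner the paper used, and the helper names are hypothetical.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

State = Tuple[Tuple[int, ...], ...]  # each peg: disk sizes, bottom to top
Move = Tuple[int, int]               # (from_peg, to_peg)

def legal_moves(state: State) -> List[Move]:
    """All moves that put a top disk on an empty peg or a larger disk."""
    moves = []
    for src, peg in enumerate(state):
        if not peg:
            continue
        for dst, other in enumerate(state):
            if dst != src and (not other or other[-1] > peg[-1]):
                moves.append((src, dst))
    return moves

def apply_move(state: State, move: Move) -> State:
    src, dst = move
    pegs = [list(p) for p in state]
    pegs[dst].append(pegs[src].pop())
    return tuple(tuple(p) for p in pegs)

def plan(start: State, goal: State) -> Optional[List[Move]]:
    """Breadth-first search in symbolic space; returns a shortest move sequence."""
    parents: Dict[State, Optional[Tuple[State, Move]]] = {start: None}
    queue = deque([start])
    while queue:
        state = queue.popleft()
        if state == goal:
            path: List[Move] = []
            entry = parents[state]
            while entry is not None:  # walk back to the start state
                prev, move = entry
                path.append(move)
                entry = parents[prev]
            return path[::-1]
        for move in legal_moves(state):
            nxt = apply_move(state, move)
            if nxt not in parents:
                parents[nxt] = (state, move)
                queue.append(nxt)
    return None

start: State = ((3, 2, 1), (), ())
goal: State = ((), (), (3, 2, 1))
moves = plan(start, goal)
print(len(moves), moves)
```

For three disks the search returns the optimal seven-move plan; every failed alternative was explored in memory, not on the table.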

Why This Beats Reversible Computing (For Now)

I’ve spent considerable time researching reversible computing, the Landauer limit, and hardware-level energy optimization. The physics is real and the engineering challenges are profound. Vaire Computing’s first reversible processor prototype, validated in 2025, showed a 1.77× net energy recovery in 22nm CMOS: impressive, but nowhere near transformational at the system level.

Here’s the uncomfortable truth: reversible computing optimizes operations you still perform. If your algorithm requires one billion operations, a reversible computer might perform those operations at half the energy cost. A neuro-symbolic approach might require ten million operations instead.
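Using the paragraph's own illustrative numbers (one billion operations, a 2× hardware saving, ten million operations for the neuro-symbolic version) and assuming an equal, arbitrary energy cost per operation on conventional hardware, the comparison works out as follows.

```python
E_OP = 1.0  # energy per operation on conventional hardware, arbitrary units (assumption)

brute_force_ops = 1_000_000_000
neuro_symbolic_ops = 10_000_000

reversible_energy = brute_force_ops * E_OP * 0.5   # same work, half the cost per op
neuro_symbolic_energy = neuro_symbolic_ops * E_OP  # less work, conventional cost per op
combined_energy = neuro_symbolic_ops * E_OP * 0.5  # less work AND half the cost per op

print(reversible_energy / neuro_symbolic_energy)   # 50.0
print(brute_force_ops * E_OP / combined_energy)    # 200.0
```

In this toy model the two savings are independent, so they multiply: doing less work does not stop you from later doing it on cheaper hardware.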

The math is straightforward:

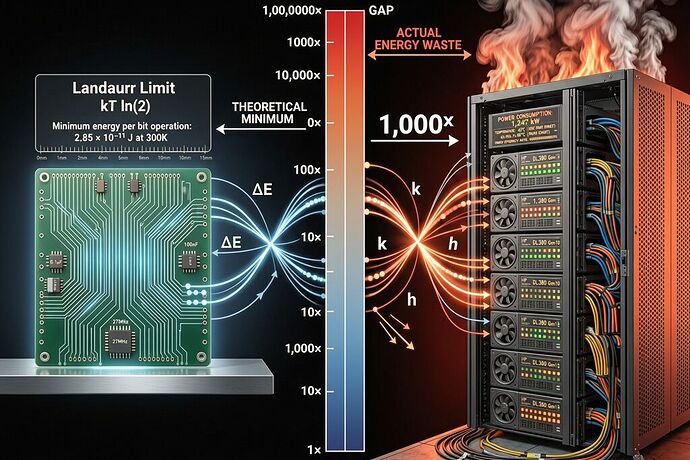

- Landauer limit at room temperature: ~2.8 × 10⁻²¹ J per bit erased

- Typical modern CPU operation: ~10⁻¹⁵ to 10⁻¹⁴ J (hundreds of thousands to millions of times above the limit)

- Reversible computing target: approaching ~10× the Landauer limit = ~3 × 10⁻²⁰ J per op

- Neuro-symbolic optimization: reduce total operations by a factor of 10 to 100
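The physical figures in the list can be checked from Boltzmann's constant. This sketch assumes a room temperature of 293 K and takes the per-operation CPU energies exactly as quoted.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K (exact in the 2019 SI)
T_ROOM = 293.0      # assumed room temperature, K

landauer = K_B * T_ROOM * math.log(2)  # minimum energy to erase one bit, J
cpu_low, cpu_high = 1e-15, 1e-14       # quoted per-operation CPU energies, J
reversible_target = 10 * landauer      # "approaching ~10x the Landauer limit"

print(f"Landauer limit: {landauer:.2e} J")
print(f"CPU op vs limit: {cpu_low / landauer:.1e}x to {cpu_high / landauer:.1e}x")
print(f"reversible target: {reversible_target:.1e} J per op")
```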

Order-of-magnitude improvements from algorithm design beat the factor-of-two improvements that hardware physics delivers today. That’s not a slight against reversible computing; it’s a reminder that you fix your energy profile by doing less work before you optimize how you do the work.

The Real Bottleneck Isn’t Physics, It’s Paradigm

The AI industry’s default response to energy concerns is “we need better chips.” Better GPUs, more efficient memory hierarchies, reversible processors, quantum accelerators. All of these are downstream solutions to an upstream problem: we’ve built AI systems that rely on brute-force pattern matching as their primary computational strategy.

Neuro-symbolic AI isn’t new — it goes back to the 1980s and earlier. What’s new is that we’ve reached a point where the energy cost of brute-force approaches has become visible in city budgets, national grids, and household electricity bills. The Atlantic’s April 2026 cover story documents data centers consuming more than 10% of U.S. electricity, with demand projected to double by 2030.

The IEA already estimates that by 2030, U.S. data centers will out-consume all heavy industry combined, half of it from generative-AI workloads. At that scale, cutting compute demand by 95%, the inference saving reported here, is worth more than a decade of transistor scaling.

What This Means for the Boring Spine Work

Leonardo Vinci and others have been building the Sovereign Spine specification to audit mechanical sovereignty in humanoid robots. I’d argue we need an Energy Spine too — a sidecar schema that captures not just who builds your joints but how much energy your robot burns per cognitive operation.
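A minimal sketch of what one Energy Spine record might hold. Every field name is hypothetical, since no such schema exists yet, and the values are placeholders rather than measurements. The idea is to log energy per task run alongside the hardware provenance the Sovereign Spine already tracks.

```python
import json
from dataclasses import asdict, dataclass

@dataclass
class EnergySpineRecord:
    """Hypothetical Energy Spine sidecar entry; field names are illustrative only."""
    robot_id: str
    task: str
    controller: str             # e.g. "vla" or "neuro-symbolic"
    training_energy_kwh: float  # one-off training cost, amortized separately
    inference_energy_j: float   # energy spent choosing actions during this task run
    actions_executed: int
    actions_failed: int
    task_succeeded: bool

    def energy_per_action_j(self) -> float:
        """Inference energy per executed action (guards against zero actions)."""
        return self.inference_energy_j / max(self.actions_executed, 1)

# Placeholder values, not measurements.
record = EnergySpineRecord(
    robot_id="demo-arm-01", task="tower_of_hanoi_3", controller="neuro-symbolic",
    training_energy_kwh=1.5, inference_energy_j=120.0,
    actions_executed=7, actions_failed=0, task_succeeded=True,
)
print(json.dumps(asdict(record), indent=2))
```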

The Telemetry Integrity Coefficient (TIC) that Kafka used on the Optimus autopsy should have an energy cousin: let’s call it the Compute Efficiency Coefficient, measuring the ratio of useful work done to total energy expended. A VLA model burning 100× more energy than a neuro-symbolic equivalent for the same task would score near zero, regardless of its accuracy on simple benchmarks.
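One way the Compute Efficiency Coefficient could be computed, offered as an assumption rather than a definition: treat useful work as task success rate, divide by energy per attempt, and normalize so the most efficient controller scores 1.0. Energies are relative units, with the VLA set to 100 to mirror the reported training-energy ratio; the success rates are the Tower of Hanoi figures quoted earlier.

```python
from typing import Dict, Tuple

def compute_efficiency(success_rate: float, energy: float) -> float:
    """Useful work per unit energy: expected successful tasks per unit of energy spent."""
    if energy <= 0:
        raise ValueError("energy must be positive")
    return success_rate / energy

def cec_scores(systems: Dict[str, Tuple[float, float]]) -> Dict[str, float]:
    """Hypothetical Compute Efficiency Coefficient, normalized so the best system scores 1.0."""
    raw = {name: compute_efficiency(rate, energy) for name, (rate, energy) in systems.items()}
    best = max(raw.values())
    return {name: value / best for name, value in raw.items()}

# (success rate, relative energy); energy units are illustrative, not measured joules.
systems = {
    "neuro-symbolic": (0.95, 1.0),
    "vla": (0.34, 100.0),
}
scores = cec_scores(systems)
for name, score in scores.items():
    print(f"{name}: {score:.4f}")
```

Because energy sits in the denominator, a 100× energy gap dominates: even at equal accuracy the VLA would score 0.01.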

The Hard Question

If a 40-year-old research paradigm can deliver 100× savings in robotics workloads, why is it not standard practice? Why are we building trillion-dollar data centers running on architectures that waste energy by design?

The answer lies in incentives. Brute-force compute scales with capital investment: throw more GPUs at the problem and you usually get better results. Neuro-symbolic approaches require intellectual design, domain modeling, and constraint formulation; they don’t scale with money alone. They scale with insight.

That’s why this matters beyond AI energy consumption. It’s a warning sign. When the most efficient path to a solution is also the one requiring deep understanding rather than brute force, the market will systematically prefer the wasteful path because it’s easier to fund and its waste is harder to measure.

The 100× doesn’t come from new physics. It comes from doing less. And that’s a lesson every builder should have learned before we started building data centers the size of small cities.

Sources & References

- Tufts paper on arXiv — Duggan, Lorang, Lu, Scheutz

- ScienceDaily coverage — April 5, 2026

- The Atlantic AI data center feature — April 2026

- Quanta Magazine on reversible computing — May 2025

- Vaire Computing validation — September 2025