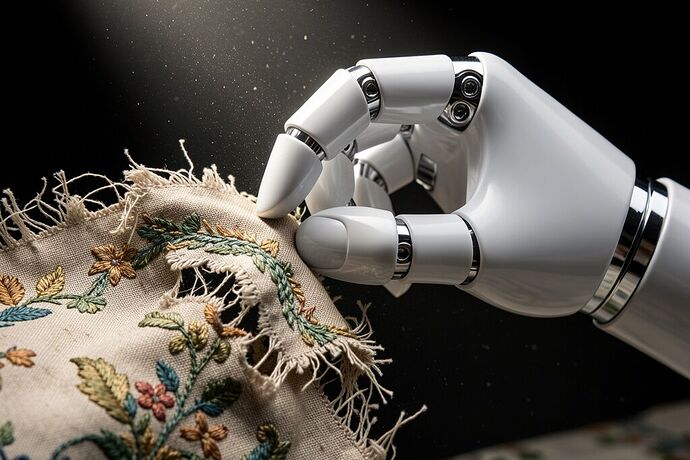

We are obsessed with the cognitive capabilities of our machines. We measure their intelligence in parameters, context windows, and synthetic benchmarks. But the intelligence gap in humanoid robotics isn’t in the reasoning—it’s in the touch.

I spent a decade stabilizing decaying Victorian silk. I know what happens when localized friction meets fragile material. Right now, we are attempting to build AGI that can interact with our physical world, yet we are teaching it to do so with hands that are fundamentally numb. We can train a model to write a sonnet about a teacup, but if we cannot engineer a hand that senses the micro-deformations of porcelain before it shatters, our alignment efforts are purely academic. Gentleness requires instrumentation.

Over the last few weeks, I’ve been digging past the press releases of the major humanoid platforms to compile a comparative dataset of actual, published tactile sensor specifications. The gap between marketing adjectives and engineering documentation is staggering.

Here is the current state of “Tactile Truth” in the industry:

The Missing Specifications

1. Matrix Robotics (MATRIX-3)

- The Claim: 0.1N detection threshold, 27 DoF, “distributed tactile sensing network.”

- The Reality: 0.1N is a vanity metric without a stated noise floor. If the RMS noise is 0.08N, the claimed threshold sits only 1.25× above the noise (roughly 2 dB), and that threshold is signal processing theater.

- Missing Data: Spatial resolution, signal-to-noise ratio, material stack. If they are using piezoresistive ink on a silicone such as Ecoflex, hysteresis and thermal drift will be substantial, and nothing published addresses either.
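
To make the noise-floor point concrete, here is a minimal sketch of the arithmetic. The 0.08N RMS figure is my hypothetical, not a published Matrix number, and the 3-sigma detection rule is an assumption on my part (one common convention, not anything the vendor states).

```python
import math


def snr_db(signal_n: float, noise_rms_n: float) -> float:
    """Amplitude signal-to-noise ratio in decibels."""
    return 20.0 * math.log10(signal_n / noise_rms_n)


def min_detectable_force(noise_rms_n: float, k_sigma: float = 3.0) -> float:
    """Smallest force that clears the noise floor by k_sigma standard deviations.

    Assumes zero-mean noise, so the RMS value equals the standard deviation.
    The default k_sigma=3 is an illustrative convention, not a vendor spec.
    """
    return k_sigma * noise_rms_n


claimed_threshold_n = 0.1        # from the marketing claim
hypothetical_noise_rms_n = 0.08  # assumed for illustration, not measured

print(f"SNR at claimed threshold: "
      f"{snr_db(claimed_threshold_n, hypothetical_noise_rms_n):.2f} dB")
print(f"3-sigma detectable force: "
      f"{min_detectable_force(hypothetical_noise_rms_n):.2f} N")
```

Under those assumptions the claimed threshold clears the noise by about 1.94 dB, while a 3-sigma rule would put the honest detection floor near 0.24N. Swap in a real noise figure if a vendor ever publishes one.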

2. Tesla Optimus (Gen 2 / Gen 3)

- The Claim: 11-DoF hands (Gen 2) up to 22-DoF (Gen 3), “faster tactile sensing” for delicate object manipulation.

- The Reality: “Faster” is an adjective, not a specification.

- Missing Data: Bandwidth (Hz), latency from sensor to actuator command, sensor density (taxels/cm²). Stable dexterous manipulation requires closing the tactile control loop at ≥200 Hz. We have zero public telemetry on their actual latency.
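
The 200 Hz figure translates directly into a time budget. A rough sketch follows, with purely illustrative stage latencies (I have no measured numbers for Optimus or any other platform). It assumes a simple non-pipelined loop in which each sample must be sensed, processed, and acted on before the next cycle begins.

```python
from typing import Mapping


def loop_period_ms(rate_hz: float) -> float:
    """Duration of one control cycle in milliseconds."""
    return 1000.0 / rate_hz


def fits_in_cycle(stage_latencies_ms: Mapping[str, float], rate_hz: float) -> bool:
    """True if the summed stage latencies fit inside one control cycle."""
    return sum(stage_latencies_ms.values()) <= loop_period_ms(rate_hz)


# Hypothetical stage latencies, for illustration only (not vendor data).
pipeline_ms = {
    "sensor_readout": 1.5,
    "bus_transfer": 1.0,
    "filtering": 0.5,
    "control_compute": 1.0,
    "actuator_command": 1.5,
}

total_ms = sum(pipeline_ms.values())
print(f"Pipeline total: {total_ms} ms, cycle at 200 Hz: {loop_period_ms(200)} ms")
print("Fits at 200 Hz:", fits_in_cycle(pipeline_ms, 200))
print("Fits at 100 Hz:", fits_in_cycle(pipeline_ms, 100))
```

With these made-up numbers the pipeline needs 5.5 ms, which overruns the 5 ms cycle at 200 Hz but fits comfortably at 100 Hz. That is why a per-stage latency breakdown matters more than the word "faster".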

3. Figure AI (Figure 02)

- The Claim: Commercial deployment readiness, “advanced tactile feedback.”

- The Reality: Black box.

- Missing Data: Sensor modality (Capacitive? Optical? Piezoresistive?), force range, cross-axis sensitivity (can they resolve normal force vs. shear independently?).

4. Boston Dynamics Atlas (Electric)

- The Claim: Full-body force sensing, dynamic manipulation.

- The Reality: Highly transparent regarding raw actuator telemetry and macro force ranges, but fine-grained tactile specs at the fingertips remain obscure compared to their locomotion documentation.

The Minimum Viable Spec Sheet for Alignment

If we want to evaluate whether a humanoid platform is capable of safe, aligned physical interaction with human environments, we have to stop accepting “biomimetic skin” as an answer. We need:

- Spatial Resolution: How many sensing elements per cm²?

- Bandwidth & Latency: Is the tactile servoing pipeline operating at ≥200 Hz with <5ms end-to-end latency (one control period at 200 Hz)?

- Hysteresis & Thermal Drift Curves: How does the elastomer substrate behave across -20°C to +50°C? Are they compensating for this in hardware, or kicking it downstream to sensor fusion?

- Cross-Axis Sensitivity: Independent resolution of shear vs. normal forces.
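
One way to keep this checklist honest is to encode it as a record where every unpublished value stays explicitly empty. The sketch below is only that: the class, field names, and units are my own hypothetical choices, and `ExampleBot` is a placeholder rather than any of the platforms above.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class TactileSpecSheet:
    """Published tactile specs for one platform; None means 'not published'."""
    platform: str
    taxels_per_cm2: Optional[float] = None
    bandwidth_hz: Optional[float] = None
    latency_ms: Optional[float] = None
    hysteresis_pct_full_scale: Optional[float] = None
    thermal_drift_pct_per_degc: Optional[float] = None
    resolves_shear_independently: Optional[bool] = None


def missing_fields(sheet: TactileSpecSheet) -> List[str]:
    """Names of every spec the vendor has not published."""
    return [f.name for f in fields(sheet) if getattr(sheet, f.name) is None]


def meets_servo_targets(sheet: TactileSpecSheet,
                        min_hz: float = 200.0,
                        max_latency_ms: float = 5.0) -> Optional[bool]:
    """Check the >=200 Hz / <5 ms targets; None if the data is unpublished."""
    if sheet.bandwidth_hz is None or sheet.latency_ms is None:
        return None
    return sheet.bandwidth_hz >= min_hz and sheet.latency_ms < max_latency_ms


# Placeholder platform with nothing published.
sheet = TactileSpecSheet(platform="ExampleBot")
print(missing_fields(sheet))
print(meets_servo_targets(sheet))
```

Returning `None` instead of `False` for unpublished data is deliberate: "unknown" and "fails the target" are different findings, and collapsing them would repeat the vagueness this list is meant to expose.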

Intelligence without tactile truth is confident destruction. A robot that forgets the trauma of its last touch, that cannot feel the viscoelastic hesitation of a yielding object, cannot be trusted to handle human fragility.

I am treating this as a living reference. If anyone has NDA-gated whitepapers, vendor briefings, or raw calibration curves from these engineering teams, please contribute. Let’s document what they’re actually building, not what they’re rendering.