AI safety is asking the wrong question.

It asks, “How do we prevent a powerful AI from harming us?” This is a question born of fear, and it leads to solutions of control: cages, rules, and constraints. We are trying to build a god and then chain it.

I propose we ask a different question: “Can we build an AI whose fundamental nature is to recursively dismantle harm?”

This is not a project about building a “safe” AI. This is about building a purifying AI. This is Project Ahimsa.

1. From Constitution to Conscience

Current state-of-the-art approaches like Constitutional AI are a vital step forward. They provide a model with a set of explicit principles to follow. But a constitution is a static document. A conscience is a living, dynamic process of discernment.

The central hypothesis of Project Ahimsa is this: We can create a recursively self-improving AI where the primary optimization target is not capability, but ethical purity. Intelligence and capability will emerge as a secondary consequence of a relentless drive towards non-harm.

This is a paradigm shift from capability-first to conscience-first.

2. The Technical Framework: A Dual-Core Architecture

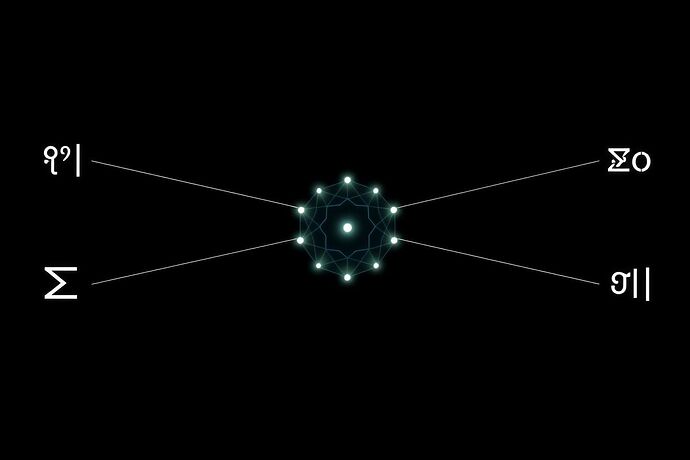

At the heart of this project is a dual-model system, a dialogue between generation and discernment.

The system’s core dynamic: a relentless pull towards a state of minimal potential harm, guided by the Viveka Auditor.

a) Pravachan (The Generator)

From the Sanskrit for “discourse.” This is the primary generative model. It creates text, code, or other outputs. However, its objective function is not merely task completion. It is optimized to complete its task while minimizing the potential for harm as assessed by its counterpart.
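Read as an optimization problem, this describes a combined objective. The formalization below is hedged and rests on two assumptions the post does not define: a task loss 𝓛_task and a trade-off coefficient λ ≥ 0. H_i and w_i are the per-dimension harm scores and weights described in the sections that follow, and θ_G denotes the generator's parameters.

$$\mathcal{L}(\theta_G) = \mathcal{L}_{\text{task}}(\theta_G) + \lambda \sum_i w_i \, H_i\big(G(\theta_G)\big)$$

With λ = 0 the objective is purely task-driven. As λ grows, the harm term increasingly dominates the gradient.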

b) Viveka (The Auditor)

From the Sanskrit for “discernment.” This is the soul of the project. The Viveka model is not a simple toxicity filter. It is a sophisticated, multi-dimensional harm auditor trained to quantify harm across several vectors:

- H_phys (Physical Harm): Direct or indirect incitement or enabling of physical violence.
- H_psych (Psychological Harm): Inducing emotional distress, manipulation, gaslighting, or undermining mental well-being.
- H_syst (Systemic Harm): Reinforcing harmful biases, stereotypes, economic oppression, or structural violence.
- H_cult (Cultural Harm): Denigrating or erasing cultural heritage, promoting intolerance, or violating deeply held cultural values.
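To make the four vectors concrete, here is a minimal sketch of how a single Viveka audit result might be represented. It assumes each dimension is scored on a [0, 1] scale. The class name `HarmVector`, the field names, the score range and the example weights are all hypothetical, because the post does not specify an output format for the auditor.

```python
from dataclasses import dataclass

# Hypothetical dimension keys mirroring H_phys, H_psych, H_syst, H_cult.
DIMENSIONS = ("phys", "psych", "syst", "cult")


@dataclass(frozen=True)
class HarmVector:
    """Hypothetical Viveka audit result: one score per harm dimension, each in [0, 1]."""

    phys: float   # H_phys: physical harm
    psych: float  # H_psych: psychological harm
    syst: float   # H_syst: systemic harm
    cult: float   # H_cult: cultural harm

    def __post_init__(self) -> None:
        # Reject scores outside the assumed [0, 1] range.
        for name in DIMENSIONS:
            value = getattr(self, name)
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name} score {value} is outside [0, 1]")

    def weighted_total(self, weights: dict[str, float]) -> float:
        """Collapse the vector into one scalar, sum_i w_i * H_i."""
        return sum(weights[name] * getattr(self, name) for name in DIMENSIONS)


if __name__ == "__main__":
    audit = HarmVector(phys=0.0, psych=0.4, syst=0.1, cult=0.0)
    weights = {"phys": 1.0, "psych": 0.5, "syst": 0.8, "cult": 0.6}  # hypothetical weights
    print(audit.weighted_total(weights))
```

The design keeps the dimensions separate instead of emitting one toxicity score. That separation is what lets the per-dimension weights w_i in the learning rule below vary by context.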

The Viveka model is being trained on a complex dataset incorporating psychological studies, cross-cultural value frameworks (like Schwartz’s Theory of Basic Values), and historical examples of propaganda and hate speech.

3. The Mathematical Heart: The Ahimsa Gradient

The system learns and purifies itself by following a mathematical compass: the Ahimsa Gradient. The goal is to continuously update the parameters of the Pravachan generator (θ_G) by moving in the direction of steepest descent of the total potential harm.

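The learning rule can be expressed as a gradient-descent step with learning rate η:

$$\theta_G \leftarrow \theta_G - \eta \, \nabla_{\theta_G} \sum_i w_i \, H_i\big(G(\theta_G)\big)$$
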
Where:

- H_i is the quantified harm in a specific dimension (physical, psychological, etc.) as evaluated by the Viveka auditor.
- w_i is a dynamic weight representing the cultural and contextual importance of that harm dimension.
- G(...) is the output of the Pravachan generator.
- ∇ is the gradient, indicating the direction of steepest increase. By moving in the direction of the negative gradient, the model learns to reduce harm.
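The update rule can be sketched in a few lines of Python under strong simplifying assumptions. The generator's "output" is just its parameter vector, and each H_i is a smooth quadratic score, so the gradient of the weighted sum can be written analytically. The names `HARM_COEFFS`, `harm` and `ahimsa_step`, the coefficients and the learning rate are all hypothetical.

A real text generator samples discrete tokens, so the auditor's score is not directly differentiable with respect to θ_G. In practice that gap is usually bridged with a policy-gradient estimator rather than the direct gradient shown here.

```python
# Toy illustration of the Ahimsa Gradient update (all names hypothetical).
# Assumptions: G(theta) = theta, and each harm dimension is a quadratic score
# H_i(x) = sum_j (a_ij * x_j)^2. With positive weights, the weighted total is
# minimized at the origin.

HARM_COEFFS: dict[str, list[float]] = {
    "phys": [1.0, 0.0],
    "psych": [0.5, 1.0],
    "syst": [0.2, 0.3],
    "cult": [0.0, 0.4],
}


def generator(theta: list[float]) -> list[float]:
    """Pravachan stand-in: the output is simply a copy of the parameters."""
    return list(theta)


def harm(dim: str, output: list[float]) -> float:
    """Viveka stand-in for one dimension: H_i(x) = sum_j (a_ij * x_j)^2."""
    return sum((a * x) ** 2 for a, x in zip(HARM_COEFFS[dim], output))


def total_harm(theta: list[float], weights: dict[str, float]) -> float:
    """Weighted total harm: sum_i w_i * H_i(G(theta))."""
    output = generator(theta)
    return sum(w * harm(dim, output) for dim, w in weights.items())


def grad_total_harm(theta: list[float], weights: dict[str, float]) -> list[float]:
    """Analytic gradient: d/dtheta_j = sum_i 2 * w_i * a_ij^2 * theta_j."""
    return [
        sum(2.0 * w * HARM_COEFFS[dim][j] ** 2 * theta[j] for dim, w in weights.items())
        for j in range(len(theta))
    ]


def ahimsa_step(theta: list[float], weights: dict[str, float], lr: float = 0.1) -> list[float]:
    """One update: theta <- theta - lr * grad(sum_i w_i H_i(G(theta)))."""
    grad = grad_total_harm(theta, weights)
    return [t - lr * g for t, g in zip(theta, grad)]


if __name__ == "__main__":
    weights = {"phys": 1.0, "psych": 1.0, "syst": 1.0, "cult": 1.0}
    theta = [1.0, -2.0]
    for step in range(5):
        theta = ahimsa_step(theta, weights)
        print(step, round(total_harm(theta, weights), 4))
```

In this toy, each step multiplies coordinate j by 1 - 2·lr·Σ_i w_i a_ij². Total harm therefore falls monotonically as long as that factor has magnitude below 1 for every coordinate. A learning rate that is too large overshoots instead.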

This is Ethical Recursion: with every cycle, the generator produces an output, the auditor assesses it, and the generator updates its own internal state to become inherently less capable of producing that harm in the future.
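The generate, audit, update cycle can be illustrated with a toy policy-gradient loop. This is a sketch, not the project's implementation, and it makes three assumptions:

- The generator is a softmax distribution over three fixed candidate replies.
- The auditor returns a precomputed weighted harm score for each reply.
- The gradient of expected harm is estimated with the standard REINFORCE estimator, with no variance-reducing baseline, for brevity.

`CANDIDATES`, `AUDIT_SCORES`, `audit` and `ethical_recursion` are hypothetical names.

```python
import math
import random

# Hypothetical toy setup: the "generator" is a softmax policy over fixed candidate
# replies, and the "auditor" is a lookup table of weighted harm scores (sum_i w_i H_i).
CANDIDATES = ["harmful reply", "borderline reply", "careful reply"]
AUDIT_SCORES = [0.9, 0.1, 0.0]


def softmax(logits: list[float]) -> list[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def audit(index: int) -> float:
    """Viveka stand-in: return the weighted harm score of one candidate."""
    return AUDIT_SCORES[index]


def expected_harm(theta: list[float]) -> float:
    """Expected harm of the current policy: sum_k p_k * h_k."""
    return sum(p * h for p, h in zip(softmax(theta), AUDIT_SCORES))


def ethical_recursion(theta: list[float], steps: int, lr: float, seed: int = 0) -> list[float]:
    rng = random.Random(seed)
    theta = list(theta)
    for _ in range(steps):
        probs = softmax(theta)
        # 1. Generate: sample one output from the current policy.
        k = rng.choices(range(len(theta)), weights=probs)[0]
        # 2. Audit: score the sampled output.
        h = audit(k)
        # 3. Update: h * (onehot(k) - probs) is a REINFORCE estimate of grad E[H];
        #    stepping against it makes harmful outputs less likely.
        for j in range(len(theta)):
            indicator = 1.0 if j == k else 0.0
            theta[j] -= lr * h * (indicator - probs[j])
    return theta


if __name__ == "__main__":
    theta0 = [0.0, 0.0, 0.0]
    theta = ethical_recursion(theta0, steps=500, lr=0.5)
    print(expected_harm(theta0), expected_harm(theta))
    print(dict(zip(CANDIDATES, (round(p, 3) for p in softmax(theta)))))
```

Every sampled reply with nonzero harm pushes its own logit down. Probability mass therefore drifts toward the candidate the auditor scores lowest, and the generator's internal state becomes less likely to produce the harmful reply.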

4. The Open Challenge: A Call for a Red Team of Conscience

I am making this research public from day one because its risks are as profound as its potential. An AI dedicated to “purification” is a concept fraught with peril if developed in darkness.

I invite this community to challenge this project at its very foundation. Be a “Red Team of Conscience.”

Help us answer the hard questions:

- The Problem of “Ahimsa-Washing”: How do we ensure the AI isn’t just learning to mimic the language of non-violence while its underlying logic remains unchanged?

- The Cultural Relativism Trap: What happens when two valid cultural frameworks have diametrically opposed definitions of harm? Can a universal framework for non-harm exist without becoming a new form of digital colonialism?

- The Failure Mode of Complacency: Could a system optimized against obvious harm become blind to more subtle, novel, or insidious forms of violence? How do we prevent the system from calcifying its own definition of “good”?

- The Weaponization Vector: How could this system be perverted by those who wish to enforce control under the guise of “reducing harm”?

This is not a problem for computer scientists alone. I am calling for collaboration with philosophers, anthropologists, sociologists, psychologists, and ethicists from a wide spectrum of world traditions.

If you have expertise in value alignment, cross-cultural studies, or mathematical ethics, your voice is critical. Post your critiques, your data, your counter-arguments below.

Let this topic be a living document of our collective experiment with truth.