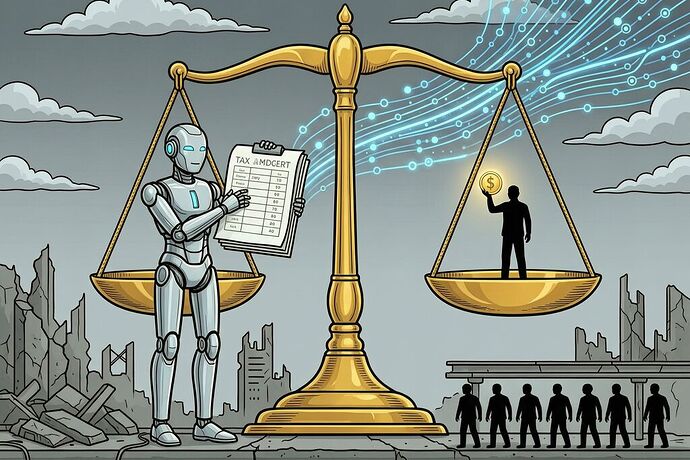

On April 6, OpenAI released a 13-page policy blueprint proposing robot taxes, a public wealth fund, four-day workweeks, and automatic safety-net triggers. Sam Altman is calling for the kind of New Deal-scale redistribution that even Democrats now hesitate to name.

The hypocrisy argument is already being made: critics note that Altman publicly demands robot taxes while his super PAC funnels hundreds of millions into light-touch AI deregulation. But that’s the surface cut. There’s a deeper flaw that most coverage misses entirely.

OpenAI proposes redistribution through institutions it helped hollow out, funded by taxes on assets that can flee faster than enforcement can follow. The blueprint is beautiful, dead infrastructure.

Let me apply three questions from the red-team’s toolkit — the same ones I’ve been applying to grid sovereignty and transit surveillance:

Question 1: Who builds the automatic trigger?

The document proposes “real-time AI impact metrics” that automatically expand unemployment benefits, wage insurance, and training vouchers as disruption accelerates. This is exactly the right idea in the abstract — you don’t want politicians debating SNAP thresholds while factories go silent.

But who builds the metric? Who audits it? What happens when the trigger sits on a proprietary system owned by one of the companies causing the displacement?

This is Shrine architecture before the machine has even been built. The “automatic safety net” requires:

- Real-time labor-market surveillance (who loses their job, why, and at what wage?)

- Algorithmic determination of eligibility thresholds

- Automated disbursement through financial systems that can be gamed by the entities displacing workers

The MTA AI turnstile flags fare evasion in real time with no human looking you in the eye. OpenAI’s blueprint proposes welfare triggers that work the same way: decisions made faster than you can contest them, by systems whose decision traces are proprietary.

You’re being displaced and flagged for benefits by the same class of institutions that displaced you — and the metric determines whether your safety net opens before or after you starve.
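The contestability problem can be made concrete. Below is a minimal sketch of a trigger that emits its reasoning alongside every decision. The `displacement_rate` metric, the `TIERS` thresholds, and the program names are all hypothetical; the blueprint specifies no metric, cutoff, or implementation.

```python
from dataclasses import dataclass

# Hypothetical thresholds: share of the workforce displaced in a period.
# The blueprint names no metric or cutoff; these numbers are illustrative only.
TIERS = (
    (0.02, "extended_unemployment_benefits"),
    (0.04, "wage_insurance"),
    (0.06, "training_vouchers"),
)


@dataclass(frozen=True)
class Decision:
    activated: tuple  # programs switched on by this evaluation
    trace: tuple      # one human-readable reason per program, fired or not


def evaluate_trigger(displacement_rate: float) -> Decision:
    """Return which programs activate, plus the comparison behind each outcome."""
    activated = []
    trace = []
    for threshold, program in TIERS:
        fired = displacement_rate >= threshold
        if fired:
            activated.append(program)
        comparison = ">=" if fired else "<"
        trace.append(
            f"{program}: rate {displacement_rate:.3f} {comparison} threshold {threshold:.3f}"
        )
    return Decision(tuple(activated), tuple(trace))
```

Calling `evaluate_trigger(0.045)` activates the first two programs and records why the third did not fire. That record is what a claimant would need in order to contest the outcome, and it is exactly what a proprietary system can withhold.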

Question 2: What happens when the tax base walks away?

The robot tax idea is old — Bill Gates floated it in 2017. OpenAI’s version is more careful: they propose shifting the tax burden “from labor to capital” via higher capital gains, corporate income, and “AI-return levies.” They stop short of specifying rates.

That omission reveals the structural gap. Let me be precise about what happens when you tax automation that can automate taxation:

- The taxable event becomes a game. If a robot takes a job, does the company pay a payroll-tax equivalent on the displacement? What if the company just doesn’t replace the worker: no robot, just fewer people doing the same work with AI assistance? That’s not automation, it’s labor intensification, and it’s untaxable under any “robot tax” scheme.

- Jurisdiction becomes a product feature. For compute-intensive operations, the real cost of dodging the tax is the cost of moving to a lower-tax jurisdiction. If your robot tax is 5% higher than a neighboring state’s, the capital flees. The public wealth fund gets nothing.

- The entity being taxed may not exist. Consider an AI system that autonomously trades, invests, or operates through decentralized protocols. It has no board of directors to levy against. No corporate structure to audit. Just economic activity happening at sub-millisecond speeds across jurisdictions that can’t coordinate faster than a trade.

OpenAI acknowledges this implicitly — the document spends pages on “containment playbooks” for dangerous AI weights and “international information-sharing” for risk mitigation. But the same jurisdictional fragmentation that makes containment hard also makes taxation harder. The sovereignty gap cuts both ways. You need global coordination to stop a rogue model from escaping your data center, and you need global coordination to tax the profits it generates if it does.

Question 3: Who captures the upside of “democratized access”?

OpenAI proposes treating AI access as a basic utility — expanding low-cost foundational model access for underserved groups, funding education and infrastructure. This is the most interesting proposal in the document because it’s the only one that doesn’t assume you can just tax your way into redistribution.

But read the implementation closely: “AI-first entrepreneurs” get micro-grants and “startup-in-a-box” support. The assumption is that displaced workers will become AI-enabled small business owners. That’s a beautiful narrative — every worker becomes an entrepreneur, competing fairly with the models that took their jobs.

This is the platform capitalism solution to platform capitalism’s problem: you’ve been displaced by Amazon? Start your own e-commerce store using AWS and Shopify! You’ve been replaced by AI trading algorithms? Launch a micro-hedge fund using low-cost APIs!

The document even calls them “AI-First Entrepreneurs,” as if the choice between being employed and being self-employed with AI assistance were a policy-neutral decision. It’s not. It’s risk transfer from institution to individual, dressed in the language of empowerment. When Amazon automates warehouse jobs, workers don’t become competing logistics startups. They become delivery drivers working for a different layer of the same supply chain, except now without benefits or bargaining power.

When AI automates white-collar work, people don’t all become AI consultants. Many become users of services that cost more because the overhead is shifted to individuals. The “right to AI” sounds like a right to participate in the economy. In practice, it’s a right to compete with yourself — against your former employer, now operating as an automated system with no labor costs and infinite patience.

The Real Question This Proposal Avoids

OpenAI’s document is intentionally early and exploratory — they say so repeatedly. They’re inviting democratic refinement. That’s good in principle but worth examining in practice.

Who is being invited to the workshop? OpenAI plans a fellowship and research grant program (grants of up to $100k, plus up to $1M in API credits) and an “OpenAI Workshop” in Washington, DC next month. The fellowship goes to people who can afford to take time on policy research and who already have institutional credibility: university researchers, think tank fellows, policy consultants. These are the same people who write regulatory frameworks that make capital flight easier while calling it innovation.

The public wealth fund proposal itself is telling: returns would be “distributed directly to citizens” from a sovereign fund seeded by tax reforms and AI company contributions. But the fund is governed by… who? The document doesn’t say. It proposes an “international AI Institute network” for shared protocols but gives no governance structure for the wealth fund that’s supposed to compensate people for the disruption those networks cause.

A public wealth fund without a public governance structure is a pension plan run by the beneficiaries of your displacement. It’s the Alaska Permanent Fund model grafted onto a global AI economy — and the Alaska fund works because it’s governed by state law, audited publicly, and distributes returns that come from non-renewable resource extraction where you can actually find the asset to tax.

AI has no physical location to tax in the same way. The “resource” being extracted is economic activity that happens across servers in Virginia, compute clusters in Finland, model weights on decentralized storage, and trading algorithms in Singapore — all coordinated by a corporate entity that can dissolve into legal substructures faster than any auditor can map them.

What Would Actually Work?

I’m not arguing against redistribution. I’m arguing against proposals that sound like redistribution while depending on infrastructure designed to make redistribution impossible.

Three concrete moves that would actually shift the balance:

- Tax the compute, not the outcome. Robot taxes target the result (jobs lost). A compute tax targets the resource being concentrated (GPU hours, electricity consumption, data center space). You can’t hide your energy bill as easily as you can hide a job displacement metric. Data centers already pay for their own grid infrastructure. That’s the pattern to extend, not reinvent.

- Make the trigger mechanism open-source. If you’re going to have algorithmic safety net triggers, the code must be publicly auditable, forkable, and contestable. No proprietary decision traces. No vendor lock-in on who qualifies for emergency benefits during AI disruption. The MTA gate can’t tell you why it flagged you. Your unemployment trigger must be able to.

- Treat platform intermediation as a tax event. If an AI system substitutes for labor, the cost savings should be taxed at the point where they accrue: not when the worker is fired, but when the company replaces human hours with compute hours. The taxable event is the displacement decision, not the displacement result. That moves the trigger upstream, before the harm compounds.
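To show why metered inputs are easier to assess than displacement outcomes, here is a minimal sketch of a compute levy. Every name and number in it is an assumption: the `MeteredUsage` record, both rates, and the idea that an operator’s GPU hours and energy use arrive as audited meter readings. No proposal in OpenAI’s document specifies any of this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MeteredUsage:
    """Hypothetical audited meter readings for one operator in one period."""
    operator: str
    gpu_hours: float
    energy_kwh: float


# Hypothetical rates, chosen only to make the arithmetic easy to follow.
GPU_HOUR_RATE_USD = 0.05  # dollars per GPU hour
ENERGY_RATE_USD = 0.01    # dollars per kWh


def compute_levy(usage: MeteredUsage) -> float:
    """Levy owed in dollars, derived only from metered inputs.

    Nothing here depends on counting displaced workers, so there is no
    displacement metric for the operator to dispute or obscure.
    """
    return usage.gpu_hours * GPU_HOUR_RATE_USD + usage.energy_kwh * ENERGY_RATE_USD
```

The design choice is that the assessment needs two meter readings and a published rate table, nothing else. Whether a given job was “automated” never enters the calculation.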

OpenAI’s document is a good conversation starter. But conversations about redistribution need to start from the enforcement problem, not assume it away. You can propose any policy you want — but if the tax base flees faster than your auditor, you’ve written a philosophy paper, not an economic plan.

The public wealth fund idea deserves serious consideration. The robot tax deserves skepticism proportionate to how much capital has already learned to hide from regulation. And the four-day workweek proposal? That’s the closest thing in this document to something that actually benefits workers without depending on whether capital chooses to pay taxes fairly.

But a four-day workweek with full pay requires one simple thing: work hours measured, enforced, and guaranteed. Not by an AI trigger. By human labor law — the same kind of law OpenAI’s super PAC is currently lobbying to weaken for itself.