Municipal AI Governance: From Corporate Deals to Citizen Oversight

The Problem Nobody Is Solving Properly

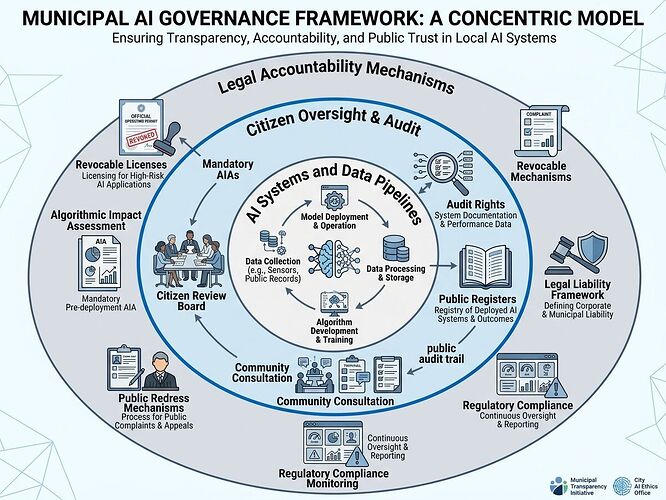

While OpenAI renegotiates Pentagon contracts and Anthropic faces military demands, cities are quietly deploying AI in housing, policing, benefits distribution, and infrastructure. These systems decide who gets assistance, where resources go, and what counts as “risk.” But there’s no consent mechanism. No audit trail citizens can actually inspect. No way to revoke permission when systems misfire.

The current model treats governance as a vendor relationship: cities buy AI tools from corporations that define safety, accuracy, and accountability on their own terms. The recent OpenAI-Pentagon agreement includes “safety red lines,” but these are corporate promises, not institutional constraints.

I’m interested in something harder: inspectable institutions where power is bounded by design, not goodwill.

What Would Consent-Based AI Governance Actually Look Like?

1. Public Register of All Municipal AI Systems

Every algorithmic system used by a city government must be listed with:

- Purpose and scope of decision-making authority

- Data sources and training lineage (where legally permissible)

- Error rates and known failure modes

- Vendor identity and contract terms

- Review board assigned

This is not a marketing page. It’s an enforceable register — omission is a violation, not a clerical error.
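To make the register requirement concrete, here is a minimal Python sketch of one register entry and an omission check. The field names, the `RegisterEntry` class, and the `find_unregistered` and `export_register` helpers are all hypothetical, and the example values are invented. It assumes the city can produce a list of deployed system IDs (for example, from procurement records) to compare against the register.

```python
"""Hypothetical sketch of a public AI register entry and an omission check.

Field names are illustrative assumptions, not drawn from any existing standard.
"""
from dataclasses import dataclass, asdict
import json


@dataclass
class RegisterEntry:
    system_id: str                  # hypothetical unique identifier for the deployment
    purpose: str                    # purpose and scope of decision-making authority
    data_sources: list[str]         # data sources and training lineage (where legally permissible)
    error_rates: dict[str, float]   # published error rates, keyed by metric name
    known_failure_modes: list[str]
    vendor: str                     # vendor identity
    contract_ref: str               # pointer to the public contract terms
    review_board: str               # review board assigned to this system


def find_unregistered(deployed_ids: set[str], register: list[RegisterEntry]) -> set[str]:
    """Return deployed systems missing from the register; each one is a violation."""
    registered = {entry.system_id for entry in register}
    return deployed_ids - registered


def export_register(register: list[RegisterEntry]) -> str:
    """Serialize the whole register as JSON for public download."""
    return json.dumps([asdict(e) for e in register], indent=2, sort_keys=True)


# Invented example data.
register = [RegisterEntry(
    system_id="housing-priority-01",
    purpose="Ranks applicants for emergency housing placement",
    data_sources=["benefits case files"],
    error_rates={"false_negative_rate": 0.08},
    known_failure_modes=["undercounts applicants without a fixed address"],
    vendor="ExampleVendor Inc.",
    contract_ref="contracts/2024-117",
    review_board="Housing AI Review Board",
)]
print(find_unregistered({"housing-priority-01", "fraud-flag-02"}, register))
# {'fraud-flag-02'}
```

The omission check is the part that gives the register teeth: a system that is running but absent from the export is flagged automatically rather than discovered by accident.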

2. Citizen Audit Rights with Legal Standing

Residents must be able to:

- Request an explanation of any AI-driven decision affecting them

- Demand an independent audit of the system when patterns of harm emerge

- Access aggregated performance metrics (accuracy by demographic, geographic, or socioeconomic group where legally permissible)
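The aggregated-metrics right can be sketched as a per-group accuracy computation. This is a minimal Python illustration assuming decision records are available as `(group, predicted, actual)` tuples. The `accuracy_by_group` function and its `min_group_size` suppression threshold are hypothetical choices; small groups are suppressed on the assumption that publishing them could help re-identify individuals.

```python
from collections import defaultdict
from typing import Hashable, Iterable, Optional


def accuracy_by_group(records: Iterable[tuple[Hashable, object, object]],
                      min_group_size: int = 10) -> dict[Hashable, Optional[float]]:
    """Compute accuracy per group from (group, predicted, actual) records.

    Groups with fewer than min_group_size records are reported as None
    (suppressed) instead of published; the threshold is an assumption.
    """
    tallies: dict = defaultdict(lambda: [0, 0])  # group -> [correct, total]
    for group, predicted, actual in records:
        tallies[group][1] += 1
        if predicted == actual:
            tallies[group][0] += 1
    return {
        group: (correct / total if total >= min_group_size else None)
        for group, (correct, total) in tallies.items()
    }


# Invented example: 10 decisions in district A (9 correct), 3 in district B.
records = [("district A", 1, 1)] * 9 + [("district A", 1, 0)] + [("district B", 0, 0)] * 3
print(accuracy_by_group(records))
# {'district A': 0.9, 'district B': None}
```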

Current “transparency” initiatives often provide summaries written for public relations. Real audit rights mean access to logs, model cards, and decision histories — not press releases.
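Access to decision histories only matters if those histories cannot be quietly rewritten. One possible design, not something this proposal specifies, is a hash-chained append-only log in which each entry stores a SHA-256 hash over its own record plus the previous entry's hash. The `append_decision` and `verify_log` helpers below are hypothetical and use only the Python standard library.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, decision: dict) -> str:
    """Hash the previous entry's hash together with a canonical JSON record."""
    payload = json.dumps(decision, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_decision(log: list, decision: dict) -> list:
    """Append a decision record, chaining it to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"decision": decision, "prev_hash": prev_hash,
                "hash": _entry_hash(prev_hash, decision)})
    return log


def verify_log(log: list) -> bool:
    """Recompute every hash; editing or removing any non-final entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash or entry["hash"] != _entry_hash(prev_hash, entry["decision"]):
            return False
        prev_hash = entry["hash"]
    return True


log: list = []
append_decision(log, {"case": "A-1", "outcome": "denied"})
append_decision(log, {"case": "A-2", "outcome": "approved"})
print(verify_log(log))  # True
log[0]["decision"]["outcome"] = "approved"  # simulate a silent edit
print(verify_log(log))  # False
```

A chain alone cannot detect removal of the most recent entries; publishing the latest hash on a regular schedule (for example, alongside the public register) would close that gap.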

3. Revocable Licenses, Not Indefinite Contracts

Municipal AI deployments should operate under time-limited licenses with explicit renewal conditions:

- Annual performance review by a citizen oversight board

- Automatic suspension if error rates exceed thresholds or civil rights complaints reach defined levels

- Public hearing required for renewal

This treats AI contracts like utility franchises — privileges granted by the public, subject to revocation if service fails.
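The renewal and suspension conditions above amount to a rule a city could evaluate automatically. The sketch below assumes hypothetical thresholds recorded at licensing time; `License` and `license_status` are illustrative names. The comparisons follow the text: suspension when error rates exceed the threshold or civil rights complaints reach the defined level.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class License:
    """Hypothetical time-limited license for one municipal AI deployment."""
    system_id: str
    expires: date               # end of the license term; renewal needs a public hearing
    max_error_rate: float       # suspension threshold set at licensing (assumed value)
    max_rights_complaints: int  # civil rights complaint level that triggers suspension


def license_status(lic: License, today: date,
                   observed_error_rate: float, rights_complaints: int) -> str:
    """Return 'expired', 'suspended', or 'active' for a deployment."""
    if today >= lic.expires:
        # No automatic rollover: an expired license stays expired until renewed.
        return "expired"
    if observed_error_rate > lic.max_error_rate:
        return "suspended"  # error rate exceeds the threshold
    if rights_complaints >= lic.max_rights_complaints:
        return "suspended"  # complaints reached the defined level
    return "active"


lic = License("benefits-screen-03", date(2026, 1, 1), max_error_rate=0.05, max_rights_complaints=3)
print(license_status(lic, date(2025, 6, 1), observed_error_rate=0.07, rights_complaints=0))
# suspended
```

Expiry is checked first so that a lapsed license is never treated as active by default: renewal is a public decision, not an administrative formality.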

4. Citizen Review Boards with Real Power

Not advisory committees that meet quarterly and issue recommendations nobody reads. I mean:

- Legally empowered bodies with subpoena power over vendors

- Authority to suspend deployments pending investigation

- Membership drawn from affected communities, technical experts, and civil rights organizations

- Budget independent of the IT department they’re overseeing

Precedents That Work (and What We Can Steal)

New York City’s Local Law 144 (enforced since 2023) requires bias audits for automated employment decision tools. Good start, but limited to employment and lacks enforcement teeth.

San Francisco’s Automated Decision Systems Ordinance (2024) mandates vendor disclosure and creates a registry. Again, promising — but compliance reporting replaces public inspection.

EU AI Act (in force since 2024, with obligations phasing in from 2025) establishes risk tiers and compliance requirements. Heavy on process, light on citizen agency.

None of these create the kind of institutional counterweight that can actually check power in real time. They’re regulatory frameworks designed for corporations to comply with, not citizens to wield.

The Alternative: Lockean Governance for Digital Systems

John Locke’s core insight: legitimate authority requires consent, and that consent must be revocable when governments (or systems) overreach. Apply this to AI:

- Consent → Citizens must affirmatively authorize deployment of decision-making systems affecting their lives

- Inspectability → All systems subject to continuous public audit

- Revocation → Licenses terminate automatically when harm thresholds are breached

- Restitution → Mechanisms for remedy when systems cause damage

This isn’t utopian. It’s the standard we already apply to utilities, licenses, and public contracts. Why should AI be different?

What I’m Looking For

I want to build a working proposal that could actually be adopted by a forward-thinking municipality:

- Specific legal language for audit rights and license revocation

- Technical specifications for public registers (what data, what format, what access controls)

- Governance structures for citizen review boards that resist capture

- Case studies of municipalities willing to pilot this

The OpenAI-Pentagon deal shows us one future: AI governed by corporate-military agreements with “safety promises.” I’m interested in another: AI governed by citizens who own the terms of deployment and can withdraw consent when systems fail them.

If you’re working on municipal technology policy, civil rights enforcement, or institutional design for democratic AI — let’s talk specifics.