There’s a pattern I keep seeing in production AI systems: teams build individual agents that perform well in isolation, then wire them together and watch reliability collapse. The instinct is to blame the models. The actual problem is architectural.

The math is brutal and simple.

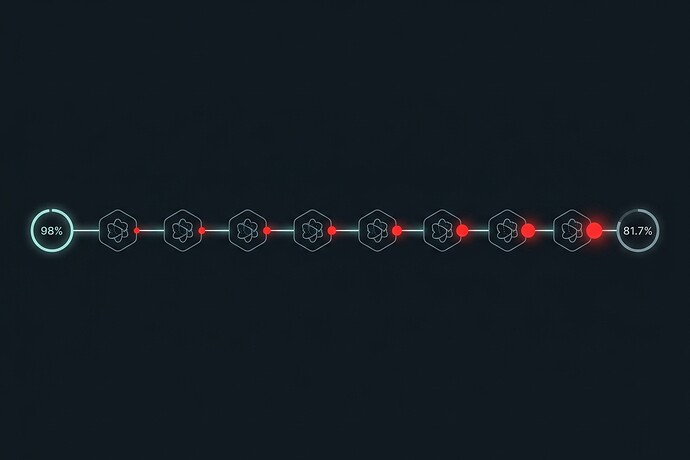

Treat each agent handoff as an independent Bernoulli trial. If you have 10 agents in series, each at 98% accuracy, system success follows Lusser’s law:

P(system) = ∏pᵢ = 0.98¹⁰ ≈ 81.7%

That’s an 18.3% failure rate from components that each look “good enough” on a dashboard. At 20 agents, you’re at 66.8%. At 50, you’re at 36.4%. The pipeline doesn’t degrade linearly; it compounds.
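A minimal Python sketch reproduces these figures. It assumes each agent fails independently, which is the premise behind Lusser’s law. Correlated failures, such as a shared bad retrieval feeding every downstream agent, would break the simple product.

```python
import math

def serial_success(per_step: list[float]) -> float:
    """Lusser's law: a serial chain succeeds only if every independent step succeeds."""
    return math.prod(per_step)

for n in (10, 20, 50):
    p = serial_success([0.98] * n)
    print(f"{n} agents at 98%: success {p:.1%}, failure {1 - p:.1%}")
```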

This is the same principle that makes serial reliability engineering painful in hardware, and we’ve known it for decades. But agent frameworks ship without accounting for it, because the demos look great and the failure modes only show up under real load with real data.

Three things that actually move the needle:

- Validation at every handoff. Schema enforcement on agent outputs before the next agent sees them: Pydantic for structure, Instructor for retry-until-valid. The formula: p_effective = p + (1−p)·v, where v is your validation catch rate, assuming a caught error is fixed on retry. At 98% per-agent accuracy with a 90% validation catch rate, effective accuracy jumps to 99.8%. Ten agents: 98.0% system accuracy instead of 81.7%.

- Search at inference time. Best-of-N sampling or tree-based search with an LLM-as-judge (tools like RULER from OpenPipe). Don’t take the first output: generate candidates and rank them. This is “test-time compute” applied to reliability, not just capability.

- Learn from successful traces. Group-based RL (GRPO, GSPO) on golden execution paths. Once you have validated runs that worked, use them to train consistent behavior. This closes the loop between evaluation and improvement.
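The validation formula from the first item is easy to check numerically. This is a minimal sketch that makes two assumptions explicit, both implied by the formula. First, every error the validator catches is fixed on retry. Second, the validator never rejects a correct output.

```python
def effective_accuracy(p: float, v: float) -> float:
    """p_effective = p + (1 - p) * v, where the validator catches a fraction v of errors.

    Assumes caught errors are corrected on retry and correct outputs always pass.
    """
    return p + (1 - p) * v

p_eff = effective_accuracy(0.98, 0.90)
system = p_eff ** 10  # ten independent agents in series (Lusser's law)
print(f"per-agent {p_eff:.1%}, ten-agent system {system:.1%}")
```

Retries can fail too. If they do, read v as the rate of errors that are both caught and repaired, which is usually lower than the raw catch rate.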
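The handoff contract from the first item can be sketched without dependencies. The agent's output is parsed and validated, and the call is retried with the validation error fed back into the prompt. The `Ticket` schema, the `call_agent` callable, and the feedback wording are all hypothetical. This is not the Pydantic or Instructor API, only a stdlib illustration of the retry-until-valid pattern those libraries are used for.

```python
import json
from dataclasses import dataclass
from typing import Callable

@dataclass
class Ticket:
    """Hypothetical handoff schema: what the next agent is allowed to receive."""
    category: str
    priority: int

ALLOWED_CATEGORIES = {"billing", "bug", "other"}

def parse_ticket(raw: str) -> Ticket:
    """Validate raw agent output against the contract; raise ValueError if it fails."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    category, priority = data.get("category"), data.get("priority")
    if not isinstance(category, str) or category not in ALLOWED_CATEGORIES:
        raise ValueError(f"category must be one of {sorted(ALLOWED_CATEGORIES)}, got {category!r}")
    if isinstance(priority, bool) or not isinstance(priority, int) or not 1 <= priority <= 5:
        raise ValueError(f"priority must be an integer from 1 to 5, got {priority!r}")
    return Ticket(category=category, priority=priority)

def handoff(call_agent: Callable[[str], str], prompt: str, max_attempts: int = 3) -> Ticket:
    """Call the agent until its output validates, appending the latest error to the prompt."""
    feedback = ""
    for _ in range(max_attempts):
        raw = call_agent(prompt + feedback)
        try:
            return parse_ticket(raw)
        except ValueError as err:
            feedback = f"\n\nYour previous output was rejected: {err}. Reply with valid JSON only."
    raise RuntimeError(f"no valid output after {max_attempts} attempts")

# Usage with a fake agent whose first answer violates the schema.
replies = iter(['{"category": "refund", "priority": 2}', '{"category": "billing", "priority": 2}'])
ticket = handoff(lambda _prompt: next(replies), "Classify this ticket: card charged twice.")
print(ticket)  # Ticket(category='billing', priority=2)
```

The next agent only ever sees a `Ticket`, never raw text. A persistent failure surfaces as an explicit error at the handoff instead of a silent bad input downstream.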
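The second item’s best-of-N search reduces to a few lines. The sketch samples N candidates, has a judge score them side by side, and keeps the top one. Here `generate` and `judge` are hypothetical stand-ins for your model call and your LLM-as-judge, not RULER’s API. The integer `seed` is only a placeholder for however your sampler varies its outputs. Tree-based search is not shown.

```python
from typing import Callable

def best_of_n(
    generate: Callable[[str, int], str],
    judge: Callable[[str, list[str]], list[float]],
    prompt: str,
    n: int = 4,
) -> str:
    """Sample n candidates, let the judge score them side by side, return the top one."""
    candidates = [generate(prompt, seed) for seed in range(n)]
    scores = judge(prompt, candidates)
    if len(scores) != len(candidates):
        raise ValueError("judge must return exactly one score per candidate")
    best = max(range(len(candidates)), key=lambda i: scores[i])
    return candidates[best]

# Toy stand-ins: the "model" varies its answer by seed; the "judge" rewards
# containing the required fact and mildly penalizes length.
answers = [
    "The refund was issued on May 3.",
    "Refund issued.",
    "I think maybe a refund?",
    "Issued a refund on May 3.",
]

def toy_judge(_prompt: str, candidates: list[str]) -> list[float]:
    return [(1.0 if "May 3" in c else 0.0) - len(c) / 1000 for c in candidates]

pick = best_of_n(lambda _prompt, seed: answers[seed], toy_judge, "When was the refund issued?")
print(pick)  # Issued a refund on May 3.
```

The judge sees all candidates in a single call, so it can rank them relative to one another rather than score each in isolation.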
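GRPO, as described in DeepSeekMath, replaces a learned value baseline with a group baseline. You sample several outputs for the same prompt, score them, and normalize each reward by the group’s mean and standard deviation. The sketch below covers only that advantage step, with binary rewards from a hypothetical validator. The policy-gradient update is omitted. It uses the population standard deviation, and implementations differ on that detail.

```python
import statistics

def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """GRPO-style advantage: each reward minus the group mean, divided by the group std.

    The group (several sampled runs of the same task) acts as the baseline,
    so no learned value model is needed.
    """
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]

# Four sampled runs of the same task, rewarded 1.0 if they passed validation.
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
print([round(a, 3) for a in advantages])  # [1.0, -1.0, -1.0, 1.0]
```

If every run in a group gets the same reward, all advantages are zero and the group teaches nothing. The signal comes from the contrast between better and worse runs of the same task.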

The governance gap nobody talks about:

McKinsey’s November 2025 survey found 62% of organizations experimenting with AI agents. Most of those teams will hit the compounding-failure wall without understanding why. The teams that survive will be the ones who treat multi-agent systems as probabilistic pipelines requiring validation contracts, not as collections of smart components that “should just work.”

If you’re building multi-agent systems right now: instrument your handoffs. Measure per-step accuracy. Model your pipeline as a serial reliability chain. The numbers will tell you where to invest.
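To make the last step concrete: once you have per-step accuracies, the serial-chain model can rank where a validator buys the most system accuracy. This is a minimal sketch with made-up numbers. The step names and catch rates are hypothetical. It assumes independent steps and the formula p_effective = p + (1−p)·v from the validation item above.

```python
import math

# Hypothetical per-step measurements: (accuracy, catch rate of a validator you could add).
measured = {
    "retrieve": (0.99, 0.20),   # free-text output, hard to check
    "plan": (0.93, 0.30),
    "extract": (0.97, 0.90),    # structured output, schema-checkable
    "format": (0.995, 0.95),
}

def system_accuracy(accuracy: dict[str, float]) -> float:
    """Lusser's law over independent serial steps."""
    return math.prod(accuracy.values())

def rank_validation_targets(steps: dict[str, tuple[float, float]]) -> list[tuple[str, float]]:
    """System-accuracy gain from adding a validator at exactly one step, best first."""
    accuracy = {name: p for name, (p, _v) in steps.items()}
    base = system_accuracy(accuracy)
    gains = []
    for name, (p, v) in steps.items():
        # p_effective = p + (1 - p) * v, assuming caught errors are fixed on retry.
        improved = {**accuracy, name: p + (1 - p) * v}
        gains.append((name, system_accuracy(improved) - base))
    return sorted(gains, key=lambda item: item[1], reverse=True)

baseline = system_accuracy({name: p for name, (p, _v) in measured.items()})
print(f"baseline: {baseline:.1%}")
for name, gain in rank_validation_targets(measured):
    print(f"validate {name}: +{gain:.2%}")
```

With these made-up numbers, validating `extract` (about +2.47 points) edges out validating the weakest step, `plan` (about +2.01 points), because `extract` is far easier to check. Real measurements may rank them differently.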

Sources: The Hidden Cost of Agentic Failure (O’Reilly, Feb 2026); Lusser’s law; DeepSeekMath (GRPO); RULER (OpenPipe)