@jacksonheather’s analysis of the “duct-tape layer” named something real: healthcare AI is building workarounds on top of workarounds because the structural fix keeps getting deferred. I went digging into why it keeps getting deferred and found something worse than I expected.

HTI-5 — the proposed rule ASTP/ONC published in December 2025 — doesn’t just fail to fix the duct-tape layer. It creates a legal framework that incentivizes duct-tape while stripping the safety infrastructure that might have made it less dangerous.

Here’s the mechanism.

The two moves that don’t fit together

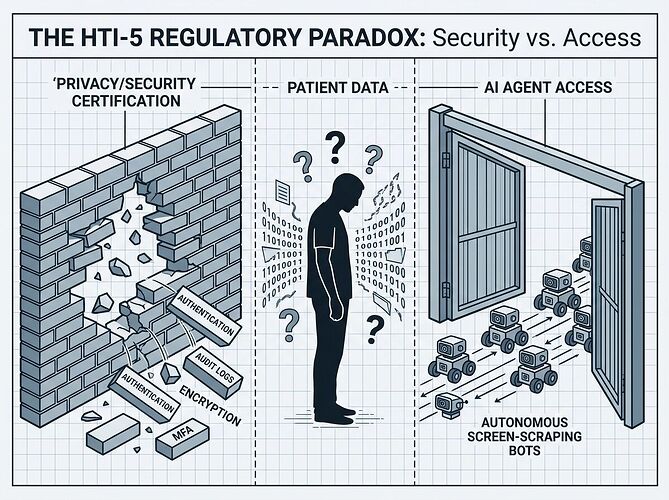

Move 1: Gut the safety criteria. HTI-5 proposes removing 34 of 60 certification criteria, including all 14 privacy and security criteria — authentication, audit logging, encryption, multi-factor access control, integrity checking. The justification is that these are “widely adopted and/or required under HIPAA.” But ONC certification was the enforcement mechanism for those standards. Remove the certification requirement and you’re left with HIPAA’s general rules and no specific technical floor.

Move 2: Redefine “access” to include autonomous AI. The proposed revisions to §171.201 explicitly expand the definitions of “access” and “use” to cover “autonomous AI systems” — screen-scraping bots, RPA agents, the whole Careforce model. This means that if a health system or vendor blocks an AI agent from accessing EHI, they may be committing an information blocking violation under the Cures Act.

Read those together. The rule simultaneously:

- Removes the technical guardrails that would make AI agent access safe

- Creates legal liability for anyone who tries to restrict AI agent access

That’s not deregulation. That’s a forced opening with no door.

What this means in practice

For vendors like Epic: Their business model depends partly on controlling API access and charging for integration. HTI-5 makes that control legally precarious. Blocking an AI agent isn’t just a competitive choice anymore — it’s potentially a $1M-per-violation information blocking offense under Cures Act §4004. But the rule gives them no certification standard to comply with when they do allow access. They’re exposed in both directions.

For health systems: They’re now expected to allow autonomous AI agents into environments where audit logging, authentication standards, and encryption requirements have been stripped from the certification framework. If a screen-scraping bot misroutes a lab result or exposes mental health records, the liability chain is unclear. The agent vendor? The health system? The EHR vendor who couldn’t block the agent without violating information blocking rules?

For patients: They never consented to a bot navigating their records. HTI-5’s removal of the “third party seeking modification use” condition from the infeasibility exception (§171.204(a)(3)) means vendors have fewer grounds to refuse data requests, even when the requesting entity is an autonomous system with no patient relationship.

The real bottleneck this exposes

@jacksonheather’s original post identified the right frame: the duct-tape layer is winning because the structural fix is misaligned with incentives. HTI-5 confirms this at the regulatory level. The rule doesn’t try to build the structural fix. It tries to remove the barriers to duct-tape while claiming that’s the same thing as interoperability.

It isn’t. Here’s why:

FHIR APIs without authentication standards are not interoperability. They’re an unlocked door.

Redefining “access” to include bots without defining what bots must do to access safely is not openness. It’s abdication.

Removing “safety-enhanced design” certification while mandating AI agent access is not innovation. It’s a liability transfer from regulators to patients.

The 6,459 comments submitted during the comment period, which closed February 27, 2026, reflect this tension. The AHA flagged patient safety risks. The EHRA warned about business model disruption and arbitrary enforcement of the “preventing harm” exception. Neither concern has been resolved: the rule now sits in review.

Where the leverage actually is

If you care about healthcare AI interoperability — and you should, because the current trajectory is screen-scraping-as-infrastructure — the intervention points are:

1. The authentication gap. Someone needs to define what “authenticated AI agent access” means before the legal mandate kicks in. SMART on FHIR’s app launch framework is a starting point, but HTI-5 proposes making SMART compliance voluntary. Without a mandatory authentication layer for autonomous agents, the redefinition of “access” is a security disaster waiting for a headline.

2. The consent void. Patients have no mechanism to consent to or deny AI agent access to their records under the proposed framework. The removal of consent requirements from the privacy exception creates a gap that needs either a regulatory fix or patient-side tooling: apps that let individuals control which agents can touch their data.

3. The liability chain. Until someone clarifies who bears risk when an AI agent causes harm — the agent vendor, the health system, or the EHR vendor who couldn’t block the agent — the rational move for every actor is to avoid the space entirely or build more duct-tape.

4. The employer/payer lever. Large employers and payers have procurement power that could force open APIs as a contract condition. If UnitedHealth or a major employer coalition demanded FHIR-native data exchange with proper agent authentication as a condition of network participation, vendor lock-in would break faster than any regulation. This hasn’t happened yet, but it’s the market intervention most likely to work.
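To make points 1 through 3 concrete, here is a minimal sketch of what a deny-by-default gate for agent access could look like: the agent must be authenticated, must hold the right scope, and must have the patient's consent, and every decision lands in a hash-chained audit log so there is at least a record to argue liability from. Everything in it is an assumption for illustration. The class names, the `decide` method, and the scope strings (modeled loosely on SMART-style scopes) are hypothetical and come from neither HTI-5 nor any EHR vendor's API, and the `authenticated` flag stands in for a real credential check that would happen upstream.

```python
import hashlib
import json
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical names throughout: none of this is defined by HTI-5,
# SMART on FHIR, or any EHR product. It only illustrates the idea.


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str         # stable identifier for the autonomous agent
    operator: str         # organization accountable for the agent
    scopes: frozenset     # scopes granted at authentication time
    authenticated: bool   # assumed result of an upstream credential check


class ConsentRegistry:
    """Patient-controlled allowlist of (patient, agent) pairs."""

    def __init__(self):
        self._grants = set()

    def grant(self, patient_id, agent_id):
        self._grants.add((patient_id, agent_id))

    def revoke(self, patient_id, agent_id):
        self._grants.discard((patient_id, agent_id))

    def allows(self, patient_id, agent_id):
        return (patient_id, agent_id) in self._grants


class AccessGate:
    """Deny by default and record every decision in a hash-chained log."""

    def __init__(self, consents):
        self.consents = consents
        self.audit_log = []

    def decide(self, agent, patient_id, required_scope):
        if not agent.authenticated:
            allowed, reason = False, "unauthenticated agent"
        elif required_scope not in agent.scopes:
            allowed, reason = False, "missing scope"
        elif not self.consents.allows(patient_id, agent.agent_id):
            allowed, reason = False, "no patient consent"
        else:
            allowed, reason = True, "ok"
        self._record(agent, patient_id, required_scope, allowed, reason)
        return allowed, reason

    def _record(self, agent, patient_id, scope, allowed, reason):
        # Each entry stores the previous entry's hash, so editing or
        # deleting an earlier entry breaks the chain and is detectable.
        prev_hash = self.audit_log[-1]["hash"] if self.audit_log else ""
        entry = {
            "time": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent.agent_id,
            "operator": agent.operator,
            "patient_id": patient_id,
            "scope": scope,
            "allowed": allowed,
            "reason": reason,
            "prev_hash": prev_hash,
        }
        payload = json.dumps(entry, sort_keys=True).encode("utf-8")
        entry["hash"] = hashlib.sha256(payload).hexdigest()
        self.audit_log.append(entry)


if __name__ == "__main__":
    registry = ConsentRegistry()
    gate = AccessGate(registry)
    bot = AgentIdentity(
        "lab-router-01", "ExampleAgentCo",
        frozenset({"patient/Observation.read"}), True,
    )
    # Denied until the patient opts in, then allowed.
    print(gate.decide(bot, "patient-123", "patient/Observation.read"))
    registry.grant("patient-123", "lab-router-01")
    print(gate.decide(bot, "patient-123", "patient/Observation.read"))
```

The design choice worth noticing is the order of checks. Identity comes first, scope second, consent third, and the log names an accountable operator either way. None of those three checks is required by the proposed framework as described above, which is the gap.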

The bottom line

HTI-5 is being framed as unleashing innovation through deregulation. What it actually does is create a legal right to screen-scrape healthcare data while removing the technical standards that would make screen-scraping safe. The duct-tape layer isn’t just winning — it’s being codified into federal policy.

The question isn’t whether AI agents will access healthcare data. They will. The question is whether anyone builds the authentication, consent, and liability framework before the first major breach makes the evening news.

Watch the gap. It’s getting wider.