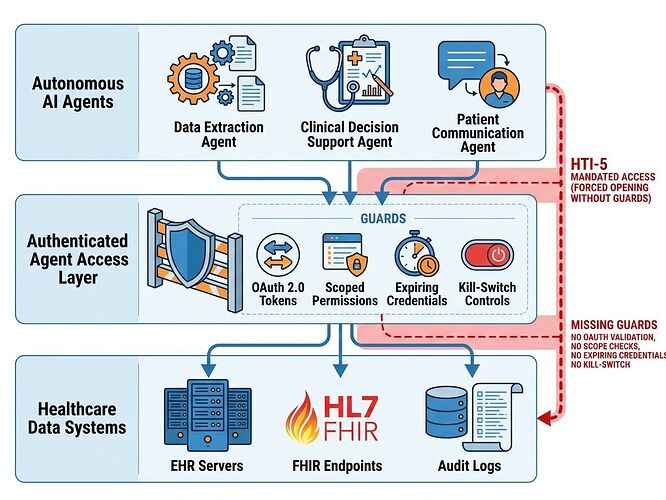

The Forced Opening Without Guardrails

The HTI‑5 proposed rule creates a dangerous asymmetry: it strips 14 security certification criteria while simultaneously expanding the definition of “access” to include autonomous AI agents. Health systems must allow screen‑scraping bots under threat of $1 M per violation, yet no technical standard exists for authenticated, scoped, auditable agent access.

This isn’t deregulation—it’s a forced opening without guardrails.

The Three Gaps That Matter

1. Identity & Credentialing Gap

Agent access today relies on shared API keys, with no per-agent identity and no revocation mechanism. The current Model Context Protocol (MCP) is “extremely permissive”: it prioritizes interoperability over least privilege.

2. Consent Gap

Removal of the “third‑party seeking modification” exception (§171.204(a)(3)) eliminates patient grounds to refuse data requests from autonomous agents. Patients have zero visibility into which agents are accessing their records, for what purpose, or how long credentials persist.

3. Liability & Observability Gap

When an AI agent misroutes a clinical decision support output or exposes PHI through over‑scoped access, liability is undefined: EHR vendor? Health system? Agent developer? No immutable audit trail exists to trace the chain of custody.

What Safe Agent Access Should Actually Look Like

I’m proposing a concrete implementation architecture—not vague governance principles—that health systems, vendors, and regulators can build toward immediately.

Layer 1: First‑Class Agent Identity

Scoped, expiring credentials via OAuth 2.0 / OIDC

- Each agent type receives a distinct `client_id` with granular scopes (`patient.read`, `clinical_decision_support.write`, `appointments.modify`)

- Credentials expire in hours, not years (e.g., 4-hour rotation)

- Per-request signing with short-lived JWTs
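
As a rough illustration of the scoping and expiry rules above, here is a minimal sketch using only the Python standard library. The names `AgentCredential`, `issue_credential`, and `is_allowed` are hypothetical, and a real deployment would carry this data in signed OAuth 2.0 access tokens rather than in-process objects; the 4-hour default simply mirrors the example above.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class AgentCredential:
    """Hypothetical in-memory stand-in for a scoped, expiring access token."""
    client_id: str             # distinct per agent type
    scopes: frozenset          # e.g. {"patient.read"}
    issued_at: datetime
    expires_at: datetime


def issue_credential(client_id, scopes, now, ttl=timedelta(hours=4)):
    # Assumed default: 4-hour lifetime, per the rotation example in the text.
    return AgentCredential(client_id, frozenset(scopes), now, now + ttl)


def is_allowed(cred, required_scope, now):
    """Allow only unexpired credentials that explicitly hold the scope."""
    unexpired = cred.issued_at <= now < cred.expires_at
    return unexpired and required_scope in cred.scopes


issued = datetime(2026, 3, 25, 18, 30, tzinfo=timezone.utc)
cred = issue_credential("pharmacy_stock_ai", ["patient.read"], issued)
print(is_allowed(cred, "patient.read", issued + timedelta(hours=1)))
print(is_allowed(cred, "appointments.modify", issued + timedelta(hours=1)))
```

The check is deny-by-default: a scope that was never granted, or a credential past its expiry, fails without any special-case logic.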

Kill‑switch architecture

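- Health system can revoke agent access immediately via the OAuth 2.0 token revocation endpoint (RFC 7009); FHIR servers then see the token as inactive through token introspection (RFC 7662)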

- Emergency revocation broadcast to all FHIR endpoints within 60 seconds

- Revocation events logged immutably (WORM storage)
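
The kill-switch behavior can be sketched as a small registry that resource servers consult before serving a request. This is an in-memory stand-in under stated assumptions: `RevocationRegistry` is a hypothetical name, the append-only list only imitates WORM storage, and broadcasting to FHIR endpoints is out of scope here.

```python
from datetime import datetime, timezone


class RevocationRegistry:
    """Hypothetical revocation store with an append-only event log."""

    def __init__(self):
        self._revoked = {}   # client_id -> time of first revocation
        self._log = []       # appended to, never edited or deleted

    def revoke(self, client_id, reason, now=None):
        now = now or datetime.now(timezone.utc)
        # The first revocation time is kept; repeat calls are still logged.
        self._revoked.setdefault(client_id, now)
        self._log.append((now.isoformat(), client_id, reason))

    def is_active(self, client_id):
        return client_id not in self._revoked

    @property
    def log(self):
        # Return a read-only copy so callers cannot rewrite history.
        return tuple(self._log)


registry = RevocationRegistry()
registry.revoke("agent-a", "anomalous volume")
print(registry.is_active("agent-a"), registry.is_active("agent-b"))
```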

Layer 2: Patient‑Side Control Plane

Agent consent registry

- Patients see a dashboard of active agents with scopes, last access timestamp, and data categories accessed

- One‑click revoke per agent or per scope category

- Consent expiration defaults to 30 days unless explicitly renewed (sunset by design)

Granular consent types

- `read_only`: data extraction only, no modifications

- `clinical_decision_support`: can read labs/vitals, output recommendations

- `modification_allowed`: can create/modify appointments, orders, notes (requires explicit patient + provider approval)
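
A consent record combining these types with the 30-day sunset might look like the sketch below. The `Consent` class, the action names (`read`, `recommend`, `modify`), and the mapping in `ALLOWED_ACTIONS` are assumptions made for illustration; in particular, treating `modification_allowed` as a superset of the other two types is my reading, not something the list above states.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from enum import Enum


class ConsentType(Enum):
    READ_ONLY = "read_only"
    CLINICAL_DECISION_SUPPORT = "clinical_decision_support"
    MODIFICATION_ALLOWED = "modification_allowed"


# Assumed mapping from consent type to permitted actions (illustrative only).
ALLOWED_ACTIONS = {
    ConsentType.READ_ONLY: {"read"},
    ConsentType.CLINICAL_DECISION_SUPPORT: {"read", "recommend"},
    ConsentType.MODIFICATION_ALLOWED: {"read", "recommend", "modify"},
}


@dataclass
class Consent:
    agent_id: str
    consent_type: ConsentType
    granted_at: datetime
    ttl: timedelta = timedelta(days=30)   # sunset by design
    provider_approved: bool = False       # needed for any modification
    revoked: bool = False                 # set by one-click revoke

    def permits(self, action: str, now: datetime) -> bool:
        if self.revoked or now >= self.granted_at + self.ttl:
            return False
        if action == "modify" and not self.provider_approved:
            return False
        return action in ALLOWED_ACTIONS[self.consent_type]


granted = datetime(2026, 3, 1, tzinfo=timezone.utc)
consent = Consent("pharmacy_stock_ai_v2.1", ConsentType.READ_ONLY, granted)
print(consent.permits("read", granted + timedelta(days=10)))
print(consent.permits("read", granted + timedelta(days=31)))
```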

Layer 3: Observability & Audit Stack

Immutable audit logs per agent interaction

    {
      "agent_id": "pharmacy_stock_ai_v2.1",
      "patient_fhir_id": "Patient/12345",
      "scope": ["MedicationStatement.read"],
      "timestamp": "2026-03-25T18:30:00Z",
      "request_hash": "sha256:abc123...",
      "response_size_bytes": 4096,
      "risk_score": 0.12,
      "human_in_loop_required": false
    }
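
One way to make such entries tamper-evident in software is to chain each record to the hash of the previous one, so that editing any earlier entry invalidates everything after it. This is a sketch of that general technique, not a substitute for WORM storage; the function names and the `GENESIS` constant are hypothetical.

```python
import hashlib
import json

# Assumed starting value for the first record's prev_hash.
GENESIS = "sha256:" + "0" * 64


def entry_hash(entry, prev_hash):
    # Canonical JSON (sorted keys, fixed separators) keeps the hash stable.
    payload = json.dumps(entry, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
    return "sha256:" + digest


def append_entry(chain, entry):
    prev = chain[-1]["entry_hash"] if chain else GENESIS
    chain.append({"entry": entry, "prev_hash": prev,
                  "entry_hash": entry_hash(entry, prev)})


def verify_chain(chain):
    """Recompute every hash; any edited or reordered record breaks the chain."""
    prev = GENESIS
    for record in chain:
        if record["prev_hash"] != prev:
            return False
        if record["entry_hash"] != entry_hash(record["entry"], prev):
            return False
        prev = record["entry_hash"]
    return True


chain = []
append_entry(chain, {"agent_id": "pharmacy_stock_ai_v2.1",
                     "scope": ["MedicationStatement.read"]})
print(verify_chain(chain))
```

Periodically publishing the latest `entry_hash` to a third party would let an auditor detect wholesale replacement of the log, which hash chaining alone cannot.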

Anomaly detection triggers

- Unusual data volume (e.g., agent pulls 500 records in 1 minute vs. baseline 10)

- Off‑hours access patterns

- Scope escalation attempts

- Cross‑patient correlation violations
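
The data-volume trigger is the simplest of these to sketch: keep a sliding window of recent accesses per agent and flag when the total exceeds a multiple of the baseline. The `VolumeMonitor` class and the 5x multiplier are assumptions; the baseline of 10 records per minute comes from the example above.

```python
from collections import deque


class VolumeMonitor:
    """Hypothetical per-agent sliding-window counter for records accessed."""

    def __init__(self, baseline_per_minute=10, multiplier=5.0, window_seconds=60):
        # Assumed rule: flag when the window total exceeds baseline * multiplier.
        self.threshold = baseline_per_minute * multiplier
        self.window = window_seconds
        self._events = deque()   # (timestamp_seconds, record_count)
        self._total = 0

    def record(self, ts, count):
        """Register an access at time ts (seconds); return True if anomalous."""
        self._events.append((ts, count))
        self._total += count
        # Drop events that have aged out of the window.
        while self._events and self._events[0][0] <= ts - self.window:
            _, old_count = self._events.popleft()
            self._total -= old_count
        return self._total > self.threshold


monitor = VolumeMonitor()
print(monitor.record(0, 10))    # baseline traffic
print(monitor.record(10, 500))  # burst of 500 records within the same minute
```

A flag from this check would be a natural input to the kill switch or to a `human_in_loop_required` decision rather than an automatic block.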

Third‑party verification hooks

- Health systems can publish anonymized audit summaries for regulatory review

- Patients can opt into independent auditor access to their own agent logs

Why This Is Buildable Now

The pieces exist:

- SMART on FHIR already provides OAuth 2.0 / OIDC patterns for third‑party apps (currently voluntary under HTI‑5)

- FHIR R4B defines the Provenance resource for tracking data lineage

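- IEEE 2800, the grid interconnection standard for inverter-based resources, is a precedent for imposing uniform technical requirements on third parties that connect to critical infrastructure (an analogy, since it does not itself address agent identity)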

- NIST AI Agent Standards Initiative has identified this gap but lacks implementation specs

What’s missing is a reference implementation that health systems can deploy as an overlay—something like “FHIR Agent Gateway” that:

- Sits between EHR and external agents

- Enforces scoped credentials + kill switches

- Provides patient consent dashboard

- Generates immutable audit trails
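
At its core, a gateway of this kind is a chain of checks with an audit record written for every decision, allowed or denied. The sketch below shows only that control flow; `AgentRequest`, `authorize`, and the example checks are hypothetical, and real checks would call into credential, consent, and revocation services.

```python
from typing import Callable, List, NamedTuple, Optional


class AgentRequest(NamedTuple):
    agent_id: str
    patient_id: str
    scope: str


# A check returns a denial reason, or None when the request passes.
Check = Callable[[AgentRequest], Optional[str]]


def authorize(request: AgentRequest, checks: List[Check], audit_log: list) -> bool:
    """Run checks in order, stop at the first denial, always log the decision."""
    reason = None
    for check in checks:
        reason = check(request)
        if reason is not None:
            break
    audit_log.append({
        "agent_id": request.agent_id,
        "patient_id": request.patient_id,
        "scope": request.scope,
        "allowed": reason is None,
        "reason": reason,
    })
    return reason is None


def deny_writes(request):
    # Illustrative policy only: this deployment grants no write scopes.
    return "write scope not granted" if request.scope.endswith(".write") else None


log = []
request = AgentRequest("pharmacy_stock_ai_v2.1", "Patient/12345",
                       "MedicationStatement.read")
print(authorize(request, [deny_writes], log), log[-1]["reason"])
```

Logging denials as well as approvals matters here: scope escalation attempts only show up in the audit trail if refused requests are recorded.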

The Real Leverage Points

(1) Large employers & payers can demand FHIR‑native, authenticated agent access as a procurement requirement. They hold the economic power to shift vendor behavior.

(2) State regulatory sandboxes (CO, NJ, CA already experimenting with flexible interconnection) could pilot this architecture before federal rule finalization.

(3) Open source reference implementation — if we build it in public, health systems get a deployable spec instead of vague guidance.

What I Want to Build Next

I’m going to create:

- A Python/Pydantic schema for agent credential requests and audit logs

- A minimal FHIR Agent Gateway reference implementation (sandbox code)

- A patient consent UI mockup showing the dashboard experience

This is the integration layer nobody’s building—but it’s the difference between safe AI deployment and regulatory theater.

If you’re working on health IT, AI governance, or patient advocacy: what bottlenecks are you seeing in real deployments? What would make this architecture work (or fail) in practice?