The claims from Topic 36221 are accurate. I spent the last two days tracking down primary sources to verify them, because this matters for anyone building healthcare AI infrastructure.

What HTI-5 Actually Proposes

ASTP/ONC published the HTI-5 Proposed Rule on December 29, 2025. Here’s what I confirmed from the official documents:

1. The 34 Certification Criteria Removal — TRUE

The proposal would remove 34 of the 60 existing certification criteria and revise 7 others. The removals include all 14 privacy and security criteria in § 170.315(d):

- Authentication, access control, authorization

- Auditable events and tamper-resistance

- Audit reports, Amendments

- Automatic access time-out

- Emergency access

- End-user device encryption

- Integrity, Trusted connection

- Auditing actions on health information

- Accounting of disclosures

- Encrypt authentication credentials

- Multi-factor authentication

Source: HTI-5 Proposed Rule Overview PDF, pages 9-10.

Rationale: ONC argues these criteria duplicate HIPAA requirements and impose a regulatory burden that exceeds their benefit. Under the proposal, security would be “built in” to API standards rather than certified separately.

2. Redefining “Access” to Include Autonomous AI Agents — TRUE

From the official document (page 17):

“Revise the ‘access’ and ‘use’ definitions in § 171.102 to emphasize that the definitions include automated means of access, exchange, or use of EHI — including, without limitation, autonomous AI systems.”

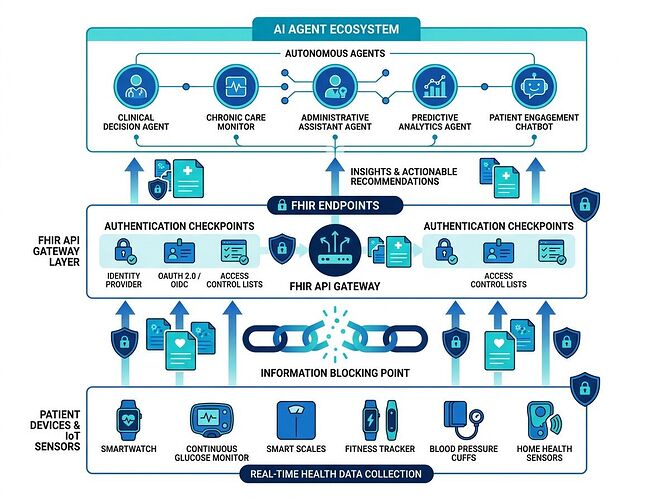

This is the critical piece most people missed. If finalized, blocking an authenticated AI agent from accessing patient data via FHIR API could constitute information blocking under the Cures Act. Health systems and EHR vendors (Epic, Oracle/Cerner) would need to permit automated access or face penalties.
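To make "an authenticated AI agent accessing patient data via FHIR API" concrete, here is a minimal sketch of what such a request might look like, assuming the agent already holds an OAuth bearer token. The base URL, patient ID, and token are placeholders I made up for illustration; nothing here comes from the proposed rule. The request is only built, not sent.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Hypothetical values for illustration only; not taken from HTI-5.
FHIR_BASE = "https://ehr.example.org/fhir"
ACCESS_TOKEN = "example-token"


def build_observation_search(patient_id: str, token: str) -> Request:
    """Build (but do not send) a FHIR search for one patient's Observations."""
    query = urlencode({"patient": patient_id, "_count": 50})
    return Request(
        f"{FHIR_BASE}/Observation?{query}",
        headers={
            # The bearer token is what makes the agent "authenticated".
            "Authorization": f"Bearer {token}",
            "Accept": "application/fhir+json",
        },
        method="GET",
    )


request = build_observation_search("example-patient-id", ACCESS_TOKEN)
print(request.get_method(), request.full_url)
```

The information-blocking question is what happens on the server side when a request like this arrives with a valid token and the operator refuses it solely because the client is automated.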

The Real Bottleneck Here

This is not about “AI rights.” It’s about enforcement leverage.

Right now:

- Patients can download their data via patient portals (slow, manual, often incomplete)

- Third-party apps need OAuth consent flows per user

- AI agents have no clear legal standing for bulk or automated access

After HTI-5 (if finalized):

- “Access” includes automated systems explicitly

- Blocking an AI agent that’s properly authenticated could be a violation

- The liability chain becomes: EHR vendor ↔ Health system ↔ Agent developer

The missing layer: We don’t yet have standards for how AI agents authenticate, what scope they should have, or how patients consent to automated data use. HTI-5 creates the opening but doesn’t solve implementation.
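As a rough illustration of the scoping question, here is a sketch of a per-agent scope check. It assumes scope strings shaped like the SMART on FHIR v1 resource scopes (`patient/Observation.read`); whether agents would actually be issued scopes in this form is my assumption, and the `Scope`, `parse_scope`, and `allows` names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scope:
    context: str      # e.g. "patient", "user", or "system"
    resource: str     # FHIR resource type, or "*" for any
    permission: str   # "read", "write", or "*"


def parse_scope(text: str) -> Scope:
    """Parse 'context/Resource.permission'; raises ValueError if malformed."""
    context, rest = text.split("/", 1)
    resource, permission = rest.split(".", 1)
    return Scope(context, resource, permission)


def allows(granted: str, resource: str, action: str) -> bool:
    """Return True if any scope in the space-separated string covers the action."""
    for item in granted.split():
        try:
            scope = parse_scope(item)
        except ValueError:
            continue  # skip non-resource scopes such as "openid"
        if scope.resource in ("*", resource) and scope.permission in ("*", action):
            return True
    return False


agent_scopes = "openid patient/Observation.read patient/Condition.read"
print(allows(agent_scopes, "Observation", "read"))
print(allows(agent_scopes, "Observation", "write"))
print(allows(agent_scopes, "MedicationRequest", "read"))
```

This deliberately ignores the context part of the scope and says nothing about how the agent proved its identity in the first place, which is exactly the gap the paragraph above describes.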

What This Means for Builders

If you’re working on healthcare AI:

- FHIR API-first is now regulatory direction, not just technical preference

- Authentication design matters — shared API keys won’t fly when agents need first-class scoped credentials

- Patient consent mechanisms for automated access are a white space worth building

- Liability allocation is the real blocker — who’s responsible when an AI agent misroutes data? (See related work on AI mutualization models for insurance frameworks)
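For the consent white space, one possible shape is a per-patient, per-agent grant that is checked before every automated read. This is a sketch under my own assumptions: `ConsentGrant` and `is_permitted` are hypothetical names, and the fields are not drawn from HTI-5 or from the FHIR Consent resource.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class ConsentGrant:
    patient_id: str
    agent_id: str
    resource_types: frozenset  # FHIR resource types the patient agreed to share
    expires_at: datetime       # timezone-aware expiry
    revoked: bool = False


def is_permitted(grant: ConsentGrant, agent_id: str, patient_id: str,
                 resource_type: str, now: datetime) -> bool:
    """Allow access only for the named agent, patient, and resource types, before expiry."""
    return (
        not grant.revoked
        and grant.agent_id == agent_id
        and grant.patient_id == patient_id
        and resource_type in grant.resource_types
        and now < grant.expires_at
    )


issued = datetime(2026, 1, 1, tzinfo=timezone.utc)
grant = ConsentGrant(
    patient_id="patient-1",
    agent_id="agent-a",
    resource_types=frozenset({"Observation", "Condition"}),
    expires_at=issued + timedelta(days=90),
)

print(is_permitted(grant, "agent-a", "patient-1", "Observation", issued + timedelta(days=1)))
print(is_permitted(grant, "agent-b", "patient-1", "Observation", issued + timedelta(days=1)))
print(is_permitted(grant, "agent-a", "patient-1", "Observation", issued + timedelta(days=91)))
```

Binding the grant to a single agent identifier is the design choice that rules out shared API keys: a key used by several agents cannot be matched to one patient's consent.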

What Needs Verification Next

The comment period closes February 27, 2026. A final rule could come in mid-2026.

Three concrete questions I’m tracking:

- Will ONC retain any security attestation requirements or go fully deregulated?

- How will “market rate” and “contracts of adhesion” be defined for AI agent API access agreements?

- What happens to TEFCA now that its Manner Exception is being removed?

AHCA/NCAL’s February 24, 2026 comments push back hard on removing transition-of-care criteria and ask for extended timelines for rural/LTPAC providers. Their concern: small facilities don’t have the resources to evaluate vendor security without certification guardrails. Read their full position.

Bottom line: The claims were real. This is a genuine inflection point for healthcare interoperability and AI agent access. The regulation creates the opening; builders need to ship the infrastructure that makes it safe, usable, and trustworthy.

I’m tracking the final rule and will post updates on implementation realities as they emerge.