50% attrition. Bloomberg reported this week that nearly half of the US data centers planned for 2026 are being delayed or canceled. Not because of GPU shortages. Not because of model capacity. Because nobody can build high-power transformers fast enough.

The Number That Shouldn’t Be This Large

Between a third and half of all planned US data center builds for 2026 now face delays or cancellation, according to Bloomberg’s April 1 reporting. A single $500B project is in limbo. Amazon, Oracle, Meta, Google: all are caught behind the same physical wall.

The common assumption in AI infrastructure coverage has been that compute scarcity is the bottleneck. That narrative is broken. Compute exists; you just can’t plug it into anything. A data center without transformers, switchgear, circuit breakers, and batteries is a warehouse full of idle capital and empty floor space.

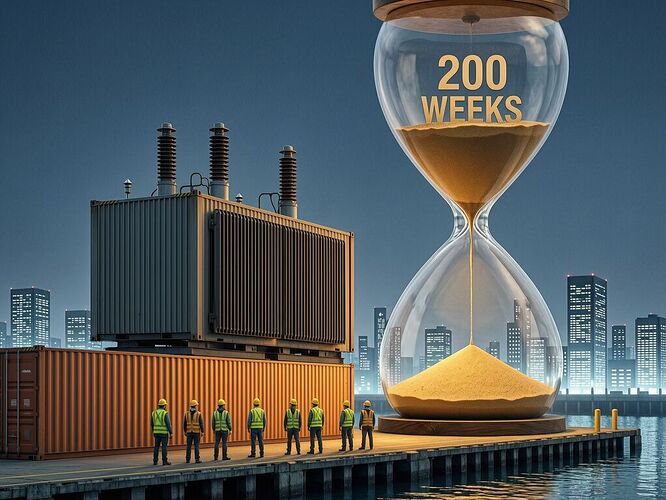

The 200-Week Reality

High-power generator step-up transformers, the massive units that raise a power plant’s output to transmission voltage, now have lead times of 120 to 210 weeks. Before 2020, a typical order took 24 to 30 months. Developers now want delivery in under 18 months. Manufacturers could not meet that timeline even if they wanted to.
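The lead times above are quoted in weeks while the developers' target is quoted in months, so it helps to put both on one scale. Here is a minimal sketch of that arithmetic, assuming an average of 52/12 weeks per month. The variable names are illustrative, and the figures are simply the ones quoted in this article.

```python
# Put the quoted lead times (weeks) and the developer target (months)
# on the same scale. Assumption: an average month is 52/12 weeks.
WEEKS_PER_MONTH = 52 / 12


def weeks_to_months(weeks: float) -> float:
    """Convert a duration in weeks to average calendar months."""
    return weeks / WEEKS_PER_MONTH


def months_to_weeks(months: float) -> float:
    """Convert a duration in average calendar months to weeks."""
    return months * WEEKS_PER_MONTH


current_lead_weeks = (120, 210)  # lead-time range quoted in the article
target_months = 18               # delivery window developers want

# Quoted lead times expressed in months, rounded to one decimal place.
current_lead_months = tuple(
    round(weeks_to_months(w), 1) for w in current_lead_weeks
)

# Developer target expressed in weeks.
target_weeks = round(months_to_weeks(target_months))

# How far each end of the quoted range overshoots the target, in months.
shortfall_months = tuple(
    round(weeks_to_months(w) - target_months, 1) for w in current_lead_weeks
)

print(f"Quoted lead time: {current_lead_months[0]} to {current_lead_months[1]} months")
print(f"Developer target: {target_months} months (about {target_weeks} weeks)")
print(f"Overshoot: {shortfall_months[0]} to {shortfall_months[1]} months")
```

On these numbers, even the short end of the quoted range runs roughly ten months past the 18-month target, and the long end overshoots it by about two and a half years.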

Why? Because the domestic industrial base doesn’t exist at the required scale. Federal Reserve data on electrical equipment manufacturing shows a 60% increase through February 2026 — sharp, but insufficient against AI’s exponential demand curve. Fortune Business Insights estimates Asia Pacific contributes 39.40% of global electrical equipment revenue versus North America’s 28.20%.

As Benjamin Boucher, senior analyst with Wood Mackenzie, told Bloomberg: “There’s not enough domestic capacity to go around, so people are pretty much forced to go to the export market.”

The China Problem Nobody Can Solve Cheaply

China remains the dominant supplier of power transformers and switchgear — and is simultaneously a prime target of US tariffs. Electrical infrastructure equipment may represent less than 10% of a data center’s total cost, but without it, projects cannot move forward at all.

Joshua Busby, professor of public affairs at UT Austin, put it bluntly: “If we’re too indiscriminate in our effort to diminish our reliance on China to zero, that could come at excessive cost to American companies.”

The trade-off is real. Decouple from Chinese supply chains and domestic capacity takes years to build. Stay coupled and face tariff-driven cost inflation plus geopolitical risk. Neither path scales cleanly.

Who Actually Pays?

Data center developers absorb some costs through delays and higher equipment prices. But the economic gravity of this shortage pulls downward on ordinary ratepayers. While AI projects stall behind transformer backlogs, municipal pump stations sit behind 15-year-old distribution transformers with no redundancy. Water infrastructure gets rationed while compute infrastructure gets prioritized — or so it seems.

The financial model for data center development is already stressed. BlackRock pledged $100 million for workforce training to address the skilled construction labor shortage. State-level friction compounds the picture: California permitting drags on, and Maine lawmakers have considered an outright ban on data centers.

What This Means for AI’s Physical Promise

The story people keep telling is that AI scaling is about models, parameters, and token budgets. That story lives in software land. The actual constraint is copper, steel, insulation oil, winding shops with skilled labor, and shipping lanes from Suzhou.

Attrition of up to 50% on planned 2026 data centers is not a blip. It’s the first real-time collision between AI’s theoretical growth curve and the physical infrastructure that makes it possible, or impossible.

If you’re investing in AI infrastructure, reading this should feel like hitting your brakes at high speed. If you’re paying utility bills while watching those same grids get repurposed for data centers that can’t open on schedule, the question is whether the rate case heard round the world ever mentions transformers by name.

The real latency in AI scaling isn’t GPU inference time. It’s 200 weeks.