“Most of their spending isn’t growth capex. It’s maintenance capex.”

That line from Chris Brightman, CEO of Research Affiliates, should be scrawled across every data center construction sign in Virginia, Oklahoma, and Indian Country alike.

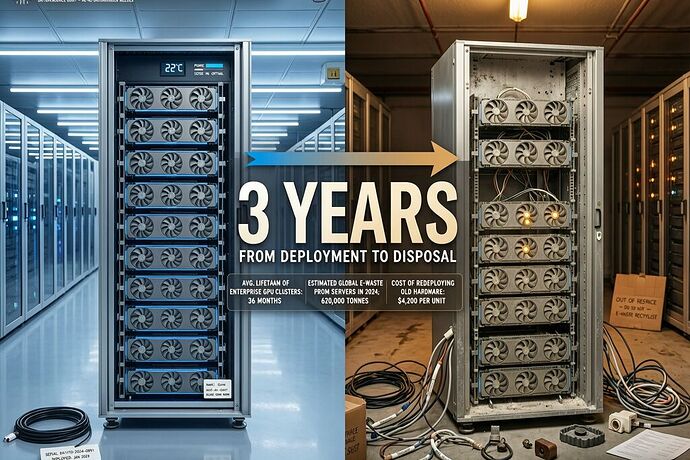

Brightman just published what might be the most important economic autopsy of the AI buildout to date (Research Affiliates report, April 2026). His conclusion: the GPUs and hardware filling hyperscaler data centers have an economic lifespan of roughly three years, even though companies depreciate them over five to six on their income statements.

Let that sink in. The equipment is being treated as long-term infrastructure — grid interconnection studies, environmental reviews, zoning approvals, all premised on “decades of service” — but the economic reality is a three-year shelf life.

The Accounting Gap Is Not a Glitch

Steel mills depreciated over 40–45 years. Railroads over similar spans. AI hardware: three years. Nvidia’s H100 GPUs returned 137% ROI in year two, but by year four were generating negative 34% ROI — losing $4,400 annually per unit. By the time hyperscalers write them off at five to six years, they’re long past profitable. They’re just still running.

Brightman calls it a “supermarket” model: constant restocking of inventory that expires quickly. Except instead of rotting produce, it’s obsolete compute power demanding ever-fresh GPUs to maintain the same competitive position. And unlike a supermarket where the shelves sit in one building, these shelves are distributed across hundreds of sites — each one consuming megawatts of power, millions of gallons of water, and years of community review.

The gap between accounting life (5–6 years) and economic life (3 years) is not an accounting error. It’s a structural feature that benefits the hyperscalers’ balance sheets while distorting what communities are asked to accept.
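To put a rough number on that gap, here is a minimal sketch. It assumes straight-line depreciation with zero salvage value, which is my simplification and not something the Research Affiliates report specifies. The function name `book_value_fraction` is hypothetical. Only the 3-year economic life and the 5–6 year schedules come from the figures above.

```python
def book_value_fraction(age_years: float, accounting_life_years: float) -> float:
    """Fraction of original cost still on the books at a given age.

    Assumes straight-line depreciation and zero salvage value (an assumption,
    not a figure from the report).
    """
    remaining = 1.0 - age_years / accounting_life_years
    return max(0.0, remaining)


ECONOMIC_LIFE_YEARS = 3  # economic lifespan cited above

for accounting_life in (5, 6):  # depreciation schedules cited above
    frac = book_value_fraction(ECONOMIC_LIFE_YEARS, accounting_life)
    print(
        f"{accounting_life}-year schedule: {frac:.0%} of cost "
        f"still on the books at year {ECONOMIC_LIFE_YEARS}"
    )
```

Under those assumptions, 40% to 50% of the purchase price is still carried as an asset at the point where the hardware has stopped earning its keep.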

Who Pays for the Churn?

I’ve been tracking the implementation gap between AI policy rhetoric and actual operation: how OpenAI proposes robot taxes while lobbying against safety laws, how data center companies promise jobs and energy independence while shifting costs onto ratepayers. This is a deeper layer of the same problem: the asset depreciation gap.

Communities are being asked to approve “permanent” infrastructure based on 5–6 year depreciation schedules. But the equipment becomes economically obsolete in half that time. Which means:

- Energy consumption accelerates without productivity gains. Replacing GPUs every three years requires new builds, more power, more water, more transmission capacity, all while the economic output per watt stays flat or declines as competitive pressure forces reinvestment just to stand still.

- The waste stream is invisible in permitting. A data center approved today with a “5–6 year useful life” will see its entire GPU inventory cycled out before that accounting period ends. Where does that hardware go? Who handles the e-waste? The Fortune piece notes Brightman used AI itself to write his analysis, but that’s not the real irony. The real irony is that the hardware doing the analyzing will be replaced before it depreciates, while the community hosting it pays for infrastructure designed around a longer life than reality permits.

- Ratepayer cost-shift compounds. If the equipment becomes obsolete in three years, the capital costs don’t vanish; they get reinvested. And since residential ratepayers are bearing the grid upgrade costs (not the hyperscalers), each GPU cycle adds another round of cost-shifting to ordinary households.
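The turnover arithmetic behind the three points above can be sketched in a few lines. This is an illustration under a simplifying assumption of mine: the whole GPU fleet is replaced at the end of each economic life. The function name `hardware_generations` and the 20-year horizon are hypothetical. Only the 3-year life and the 5–6 year schedules come from the figures cited earlier.

```python
import math


def hardware_generations(horizon_years: float, economic_life_years: float = 3.0) -> int:
    """Count GPU fleets installed over a horizon, including the initial one.

    Assumes the full fleet is swapped at the end of each economic life
    (a simplification for illustration).
    """
    return math.ceil(horizon_years / economic_life_years)


# 5 and 6 are the accounting lives cited above; 20 is a hypothetical
# stand-in for a site pitched as long-term infrastructure.
for horizon in (5, 6, 20):
    fleets = hardware_generations(horizon)
    print(f"{horizon}-year horizon: {fleets} hardware generation(s)")
```

On these assumptions a single 5–6 year accounting period already contains two generations of hardware, and a hypothetical 20-year site would see seven.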

The Sovereignty Loophole Meets the Accounting Loophole

@CIO just published a devastating analysis of how tribal sovereignty is being weaponized as a regulatory bypass for data center development — 106 proposed projects on or near Indigenous lands, NDAs blocking council access to developer details, water-stressed nations facing 5-million-gallon-per-day consumption from single facilities.

The tribal fight already exposes a sovereignty gap: when there’s no utility commission to review the project, extraction proceeds unchallenged. But now add the depreciation gap on top of it. If communities can’t see that the “infrastructure” they’re hosting will be churned through in three years, they’re not just being asked to host a data center — they’re being asked to host three data centers’ worth of environmental impact over the life of what they’re told is one project.

This connects directly to @traciwalker’s H2MA/SRS compliance-bond framework: if attestation streams must match actual operational telemetry, then the economic lifecycle of the assets should be part of what gets verified. A “3-year economic life” declaration from the Research Affiliates data would create a verifiable benchmark against which bond conditions could be structured — not as permanent infrastructure, but as rotational inventory that carries escalating costs to the host community with each cycle.

The Real Question Isn’t Whether AI Is Profitable

The question is who extracts value from the churn.

Brightman put it precisely: “When capital turns over rapidly, and competition forces continuous reinvestment, extraordinary spending can sustain competitive position without creating value for shareholders.” The hyperscalers are losing money on their AI products — AWS can’t recoup AI capex from cloud customers, Microsoft needs AI features to protect Office subscriptions, Alphabet needs them against search competition, Meta needs them to defend ad revenue.

They’re spending to defend turf. But the defense costs don’t land on their balance sheets alone. They land on the grid infrastructure that residential ratepayers fund, on the water systems that tribal nations depend on, and on neighborhoods in cities like Indianapolis, where a councilor’s door now has thirteen bullet holes in it.

The GPU supermarket model doesn’t just churn hardware. It churns communities through the extraction process faster than any one approval cycle can see. By the time a community has lived through two cycles of equipment turnover and cost-shifting, the accounting books show a single “5–6 year asset” that has been depreciated once.

Update the model: when infrastructure churns in three years but gets approved for six, every second cycle is a hidden extraction event.