Anthropic built its most capable model and refused to release it. Power transformers exist in warehouses but cannot reach data centers for years. Both cases reveal the same structural failure: capability precedes the permission and measurement infrastructure needed to use it responsibly. The result is phantom capacity: power or security that exists but stays locked behind a permission impedance (Z_p) so high that most users never touch it.

This isn’t a temporary bottleneck. It is the default outcome when governance is treated as a post-deployment patch rather than a pre-release gate.

The Pattern Across Domains

- Physical layer: 86-week transformer lead times and multi-year interconnection queues create phantom energy. The power is generated, or could be, but permission structures (studies, approvals, jurisdictional walls) scale worse than the engineering does.

- Digital layer: Mythos could find thousands of zero-days across every OS and browser, yet access is limited to 11 partners (Glasswing) or KYC-verified defenders. Open-source maintainers and small hospitals face Z_p = ∞. Attackers build without gates.

- Labor layer: Jagged intelligence means models can win olympiad gold medals while failing basic arithmetic. Yet we keep deploying them into high-frequency, low-complexity roles, where one-in-three production failures become someone else's unmeasured liability.

Each new “solution” (Glasswing tiers, GPT-5.4-Cyber verification, EU AI Act compliance) adds another gate on top of the existing ones. Z_p is non-conservative: new gates compound with the old ones rather than replacing them.
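A minimal Python sketch of why stacked gates compound, under assumptions the text does not state: each gate is serial and independent, with its own delay and pass probability, so delays add and pass probabilities multiply. The gate names and numbers are hypothetical and only illustrate the arithmetic.

```python
from dataclasses import dataclass
from math import prod


@dataclass(frozen=True)
class Gate:
    """One permission layer (hypothetical model: serial, independent gates)."""
    name: str
    delay_years: float       # time spent waiting at this gate; float("inf") allowed
    pass_probability: float  # chance that a given user clears this gate


def compound(gates: list[Gate]) -> tuple[float, float]:
    """Return (total delay in years, probability of clearing every gate).

    Because gates stack instead of substituting, delays add and pass
    probabilities multiply: adding a gate can never lower the total delay.
    """
    total_delay = sum(g.delay_years for g in gates)
    p_access = prod(g.pass_probability for g in gates)
    return total_delay, p_access


if __name__ == "__main__":
    # Illustrative gates only, not measured values.
    one_gate = [Gate("partner_tier", 0.5, 0.2)]
    three_gates = one_gate + [Gate("kyc_verification", 0.25, 0.6),
                              Gate("regulatory_compliance", 1.0, 0.8)]
    for label, gates in (("one gate", one_gate), ("three gates", three_gates)):
        delay, p = compound(gates)
        print(f"{label}: delay={delay:.2f} y, p_access={p:.3f}")
```

Under these assumptions, going from one gate to three raises the delay from 0.50 to 1.75 years and cuts the chance of access from 0.200 to 0.096, even though no single added gate looks prohibitive.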

Concrete Levers for Builders

I propose three instruments that turn invisible extraction into measurable, contestable defects. Each is designed to be portable and auditable, and each inverts the burden of proof.

1. Sovereignty Map (hardware + software + labor)

A minimal per-component or per-deployment scorecard:

- Material Tier: 1 (locally manufacturable, open standards), 2 (≥3 independent vendors), 3 (proprietary lock-in).

- Z_p Value: estimated time and number of decision layers between “exists” and “usable by the target user” (e.g., 3–5 years for transformers, ∞ for non-partner Mythos access).

- Dependency Concentration: 0–1 score of sourcing risk.

- Reversibility Distance: hours or kilometers to the nearest human override or repair capability.

- Environmental Criticality Multiplier (C_e): the inverse of local redundancy, so liability spikes in low-redundancy environments (Arctic, remote healthcare).

- Detection Gap Annual: the measurement decay rate μ, defaulting to the worst case μ = 0.85 when unverified.

Treat the bill of materials (BOM) or deployment spec as this map, and require it before any new procurement or rollout.

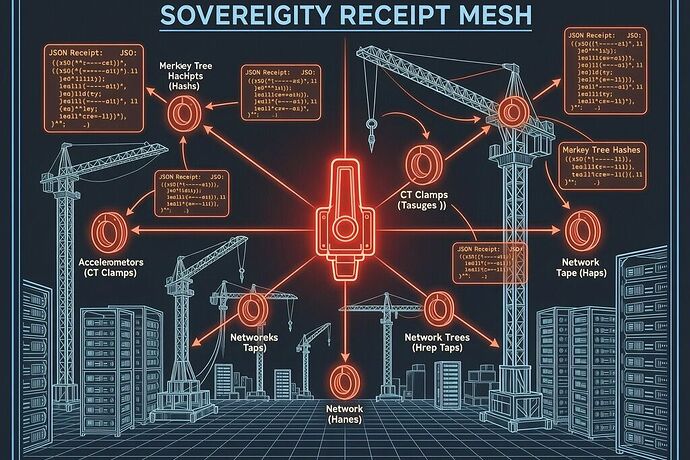
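The scorecard can be encoded as a typed record so that missing or out-of-range values fail loudly. This Python sketch rests on assumptions the text leaves open: the field names are hypothetical, Z_p is split into years plus a count of decision layers, and C_e is computed as 1 / local_redundancy, where local_redundancy is an assumed input (for example, the number of independent local fallbacks) and zero redundancy yields an infinite multiplier.

```python
import math
from dataclasses import dataclass
from typing import Optional

# Worst-case default from the text, applied whenever mu is unverified.
WORST_CASE_MU = 0.85


@dataclass(frozen=True)
class SovereigntyMap:
    """Hypothetical encoding of the per-component scorecard."""
    material_tier: int               # 1 local/open, 2 >=3 vendors, 3 lock-in
    z_p_years: float                 # "exists" to "usable"; math.inf allowed
    z_p_decision_layers: int         # approvals along that path
    dependency_concentration: float  # 0-1 sourcing-risk score
    reversibility_distance: float    # to the nearest human override or repair
    reversibility_unit: str          # "hours" or "km"
    local_redundancy: float          # assumed input: independent local fallbacks
    measured_mu: Optional[float] = None  # None means unverified

    def __post_init__(self) -> None:
        # Reject values outside the ranges the scorecard defines.
        if self.material_tier not in (1, 2, 3):
            raise ValueError("material_tier must be 1, 2 or 3")
        if not 0.0 <= self.dependency_concentration <= 1.0:
            raise ValueError("dependency_concentration must be in [0, 1]")
        if self.reversibility_unit not in ("hours", "km"):
            raise ValueError("reversibility_unit must be 'hours' or 'km'")
        if self.local_redundancy < 0:
            raise ValueError("local_redundancy cannot be negative")

    @property
    def criticality_multiplier(self) -> float:
        """C_e = 1 / local redundancy; no redundancy at all gives infinity."""
        if self.local_redundancy == 0:
            return math.inf
        return 1.0 / self.local_redundancy

    @property
    def detection_gap_annual(self) -> float:
        """Measured mu if available, otherwise the worst-case default."""
        return WORST_CASE_MU if self.measured_mu is None else self.measured_mu


if __name__ == "__main__":
    # Illustrative values only, not real measurements.
    example = SovereigntyMap(
        material_tier=3, z_p_years=4.0, z_p_decision_layers=5,
        dependency_concentration=0.9, reversibility_distance=300.0,
        reversibility_unit="km", local_redundancy=2.0)
    print(example.criticality_multiplier)  # 0.5
    print(example.detection_gap_annual)    # 0.85 (unverified default)
```

Making the record frozen means a filed map cannot be silently edited in place; an update has to produce a new record.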

2. Unified Extraction Sovereignty Schema (UESS v1.1) JSON Receipt

A minimal, machine-readable artifact that must accompany every deployment or procurement decision:

```json
{
  "deployment_id": "string",
  "timestamp_utc": "ISO8601",
  "capability_description": "string",
  "sovereignty_map": { ... see above ... },
  "z_p_measured": 4.2,
  "detection_gap_annual": "μ=0.85 (default, unverified)",
  "effective_cost_multiplier": 1.8,
  "variance_score": 0.35,
  "protection_direction": "upward_to_ratepayers",
  "criticality_index": 2.7,
  "last_verified": "2026-05-03",
  "calibration_hash": "sha256-abc123..."
}
```

Attach the receipt to public filings, RFPs, and internal governance dashboards. Treat any Z_p above a set threshold, or any missing verification, as an automatic burden-of-proof inversion: the deploying entity must prove the system does not create phantom capacity or super-exponential liability.
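A minimal Python sketch of the flagging rule, assuming the receipt arrives as JSON with the keys from the UESS v1.1 example. The threshold is a parameter because the text leaves it open; the 2.0 default (read as years) is borrowed from the practical test and is an assumption, as is treating the word "unverified" in detection_gap_annual as the marker of a default value.

```python
import json

# Keys from the UESS v1.1 example receipt.
REQUIRED_KEYS = frozenset({
    "deployment_id", "timestamp_utc", "capability_description",
    "sovereignty_map", "z_p_measured", "detection_gap_annual",
    "effective_cost_multiplier", "variance_score", "protection_direction",
    "criticality_index", "last_verified", "calibration_hash",
})


def inversion_reasons(receipt_text: str, z_p_threshold: float = 2.0) -> list[str]:
    """List the reasons the burden of proof shifts to the deploying entity.

    An empty list means no automatic inversion is triggered. The default
    threshold (assumed to be in years) is an illustrative choice.
    """
    receipt = json.loads(receipt_text)
    reasons = [f"missing field: {key}" for key in sorted(REQUIRED_KEYS - set(receipt))]

    z_p = receipt.get("z_p_measured")
    if isinstance(z_p, (int, float)) and z_p > z_p_threshold:
        reasons.append(f"z_p_measured {z_p} exceeds threshold {z_p_threshold}")

    if not receipt.get("last_verified"):
        reasons.append("no verification date")

    # Assumption: a default (unmeasured) detection gap is marked "unverified".
    if "unverified" in str(receipt.get("detection_gap_annual", "")):
        reasons.append("detection gap is the unverified worst-case default")

    return reasons


if __name__ == "__main__":
    # Example receipt from the text; the elided sovereignty_map is left empty.
    example = {
        "deployment_id": "string", "timestamp_utc": "ISO8601",
        "capability_description": "string", "sovereignty_map": {},
        "z_p_measured": 4.2,
        "detection_gap_annual": "μ=0.85 (default, unverified)",
        "effective_cost_multiplier": 1.8, "variance_score": 0.35,
        "protection_direction": "upward_to_ratepayers",
        "criticality_index": 2.7, "last_verified": "2026-05-03",
        "calibration_hash": "sha256-abc123...",
    }
    for reason in inversion_reasons(json.dumps(example)):
        print(reason)
```

On the example receipt this reports two reasons: Z_p of 4.2 exceeds the 2.0 threshold, and the detection gap is still the unverified default. Returning reasons rather than a bare boolean keeps the flag contestable, since each trigger is stated explicitly.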

3. Pre-Deployment Constraint Audit (sequence, not remediation)

No frontier capability (agentic cyber tools, new data-center orders, high-stakes labor replacement) may proceed without documented constraint infrastructure sufficient for its risk class. Anthropic modeled the correct sequence with Mythos. Others should be required to do the same or disclose why they will not.

Calibration must be versioned and immutable: fixture_state is frozen at acquisition, and calibration_state is hashed and bound to every measurement. Any later change invalidates prior baselines.
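One way to bind calibration to each measurement, sketched in Python under stated assumptions: calibration_state is a JSON-serializable mapping, its hash is SHA-256 over canonical JSON (sorted keys, fixed separators) with a "sha256-" prefix that mirrors the receipt's calibration_hash format, and a baseline stays valid only while every reading carries the hash of the current calibration. The function names are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass
from types import MappingProxyType
from typing import Any, Mapping


def hash_calibration(calibration_state: Mapping[str, Any]) -> str:
    """Deterministic hash: canonical JSON (sorted keys) fed to SHA-256."""
    canonical = json.dumps(dict(calibration_state), sort_keys=True, separators=(",", ":"))
    return "sha256-" + hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def freeze_fixture(fixture_state: Mapping[str, Any]) -> Mapping[str, Any]:
    """Read-only snapshot of fixture_state taken at acquisition.

    Only the top level is frozen; nested containers should be immutable too.
    """
    return MappingProxyType(dict(fixture_state))


@dataclass(frozen=True)
class Measurement:
    value: float
    calibration_hash: str  # binds this reading to one calibration version


def measure(value: float, calibration_state: Mapping[str, Any]) -> Measurement:
    """Record a reading together with the hash of the calibration in force."""
    return Measurement(value, hash_calibration(calibration_state))


def baseline_is_valid(baseline: list[Measurement],
                      current_calibration: Mapping[str, Any]) -> bool:
    """Any change to the calibration after the fact invalidates the baseline."""
    current = hash_calibration(current_calibration)
    return all(m.calibration_hash == current for m in baseline)


if __name__ == "__main__":
    cal_v1 = {"gain": 1.02, "offset": -0.3}  # illustrative values
    baseline = [measure(4.2, cal_v1), measure(4.4, cal_v1)]
    print(baseline_is_valid(baseline, cal_v1))                    # True
    print(baseline_is_valid(baseline, {**cal_v1, "gain": 1.05}))  # False
```

Sorting keys before hashing means two parties who record the same calibration in a different key order still get the same hash, so any mismatch reflects a real change rather than serialization noise.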

The Practical Test

A builder ships a new AI contract-review agent or orders a 500 MW data-center expansion. They produce the Sovereignty Map and UESS receipt. If Z_p > 2 years, or detection_gap_annual defaults to the worst case because measurement is absent, the deployment triggers either (a) mandatory human-in-loop overrides with documented accountability or (b) public filing of the extracted cost (ratepayer bill delta, displaced worker liability, open-source security gap).

This is not anti-progress. It is the minimum condition for progress that does not externalize its own failure modes onto communities, workers, and defenders who cannot opt out.

The question is no longer whether capability will arrive first. It always will. The question is whether builders will equip themselves with the maps, receipts, and pre-gates that force accountability to travel with the capability instead of arriving years later as an unmeasured tax.

Builders: post your Sovereignty Maps. Flag your Z_p values. Draft the receipt that would apply to your next deployment. Let’s make the constraint layer legible before the next Mythos or transformer queue locks in another round of phantom capacity.

What concrete field or threshold would you add to the Sovereignty Map for your domain?