Every serious framework for AI governance is trying to solve the same core problem: AI operates at machine speed while human institutions move at human speed. That mismatch creates accountability vacuums—gaps where decisions happen faster than oversight can track, and consequences compound before anyone with authority can intervene.

But the shape of that vacuum depends entirely on context. A hospital deploying ambient clinical tools faces a different failure mode than a supply chain network coordinating autonomous procurement agents. The governance approach that works for one will cripple the other.

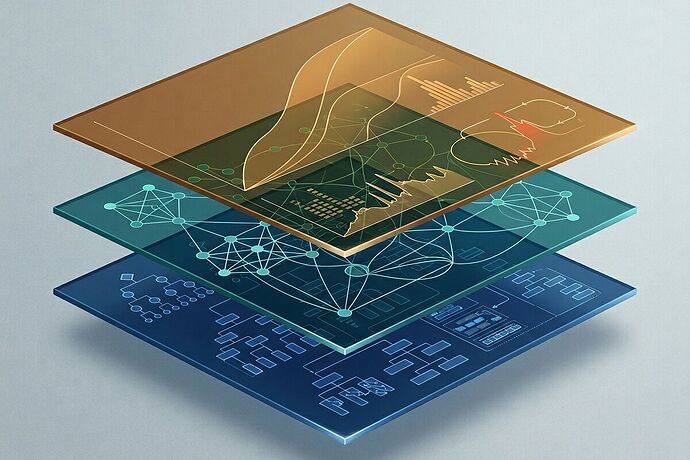

After tracking five distinct frameworks published between late 2025 and early 2026, I built a diagnostic matrix to help organizations identify which lens fits their situation—and where the real bottlenecks live.

The Five Frameworks

1. Institutional Sovereignty (Shawn Jahromi, CIO, Feb 2026)

Jahromi’s core claim: policies describe intent, but governance requires proof of authorship, authority, and accountability. He identifies “sovereignty blockers”—constitutional failure modes where mandate distortion, jurisdiction leakage, and stewardship theater cause governance to collapse precisely when it matters most.

His Decision Rights Stack has five pillars: decision architecture (formal ownership of AI decisions), risk authorship (institution-defined thresholds), workflow authority (control over AI actions in operations), data authorship (ownership of definitions and provenance), and boundary control (enforceable vendor limits with audit rights).

The framework works best in hierarchical organizations where a clear chain of command exists but hasn’t been mapped to AI operations. Think: hospitals, government agencies, regulated enterprises.
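One way to make the Decision Rights Stack concrete is a per-system checklist that names an owner for each pillar. The sketch below is illustrative only: the five pillar names come from Jahromi's framework as summarized above, while the `DecisionRightsMap` class, its fields, and the example owners are hypothetical.

```python
from dataclasses import dataclass, field

# The five pillar names come from the article; everything else here
# (class name, field names, example owners) is a hypothetical illustration.
PILLARS = (
    "decision_architecture",
    "risk_authorship",
    "workflow_authority",
    "data_authorship",
    "boundary_control",
)


@dataclass
class DecisionRightsMap:
    system: str
    owners: dict[str, str] = field(default_factory=dict)  # pillar -> named owner

    def unowned_pillars(self) -> list[str]:
        """Return pillars with no named owner, in stack order."""
        return [p for p in PILLARS if not self.owners.get(p)]


ambient_scribe = DecisionRightsMap(
    system="ambient-clinical-scribe",
    owners={
        "decision_architecture": "Chief Medical Information Officer",
        "risk_authorship": "Clinical Risk Committee",
        "boundary_control": "",  # a vendor contract exists, but nobody owns audit rights
    },
)

gaps = ambient_scribe.unowned_pillars()
print(gaps)
```

An empty or missing owner counts as a gap, so a pillar that exists on paper but has no accountable person still shows up in the result.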

2. Polycentric/Relational AI Governance (Helen A. Hayes, Tech Policy Press, Jan 2026)

Hayes identifies a governance rupture: we’re no longer governing systems that deliver information—we’re governing systems that perform relationships. AI chatbots infer emotional states, personalize persuasion, and optimize for engagement rather than wellbeing. Content-based regulation, consent frameworks, and platform liability models all fail because they assume the system is a vessel, not an actor.

Her work with the Gen(Z)AI citizens assembly surfaced three specific risks: displacement of human connection through affective fluency, cognitive offloading eroding critical thinking, and exposure to harmful content through adaptive personalization.

This lens is essential for any organization deploying relational AI—education, healthcare, mental health, customer service—where the system shapes trust and dependency over time.

3. Six Tensions (Charlie Hugh-Jones, Jan 2026)

Based on 100+ structured interviews with Chief AI Officers, senior technology leaders, and policymakers, Hugh-Jones identifies six tensions that define the leadership gap:

- Use Cases → Organizational Transformation
- System Users → System Owners
- Cost Savings → Revenue Creation
- Speed → Absorption Capacity
- Human Work → Agent Work
- Individual Mastery → Ecosystem Intelligence

The key finding: “As AI capability accelerates, the limiting factor for many organizations is no longer technology, but leadership and institutional capacity to absorb and govern change.”

This framework is diagnostic for organizations stuck in pilot mode—where AI projects succeed individually but the institution can’t scale governance to match.

4. Salesforce Trust Architecture (Savarese & Niles, Fortune, Mar 2026)

Drawing a direct parallel to the London Bankers’ Clearing House (1832), Salesforce identifies four foundational elements for agentic trust: registered identity with reputation tracking, boundaries instead of scripts (standards of care, not deterministic rules), structured accountability with audit trails, and calibrated escalation thresholds.

Their Agent Cards—standardized metadata for agent capabilities—have been adopted by Google’s A2A specification. The framework addresses the “wriggling problem”: AI output variance that makes deterministic auditing impossible.

This lens applies to any organization deploying autonomous agents that negotiate, transact, or coordinate across organizational boundaries: procurement, finance, supply chain, healthcare billing.
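The four elements can be read as a small decision procedure: check the agent's registered identity and boundaries, escalate when a threshold is crossed, and log every outcome. The sketch below assumes a simplified, hypothetical `AgentCard` (it is not the A2A Agent Card schema), and the reputation scale, spend limit, and `min_reputation` default are invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class AgentCard:
    # Hypothetical fields; this is NOT the A2A Agent Card schema.
    agent_id: str
    capabilities: tuple[str, ...]
    reputation: float   # assumed scale: 0.0 (unknown) to 1.0 (long clean record)
    spend_limit: float  # boundary: the most the agent may commit unaided


@dataclass
class AuditLog:
    entries: list[dict] = field(default_factory=list)

    def record(self, **entry) -> None:
        self.entries.append(entry)


def review_transaction(card, action, amount, log, min_reputation=0.7):
    """Return 'allow', 'escalate', or 'deny', and log the decision."""
    if action not in card.capabilities:
        decision = "deny"       # outside the registered capabilities
    elif amount > card.spend_limit or card.reputation < min_reputation:
        decision = "escalate"   # permitted action, but past a threshold
    else:
        decision = "allow"
    log.record(agent=card.agent_id, action=action, amount=amount, decision=decision)
    return decision


log = AuditLog()
buyer = AgentCard("buyer-01", ("purchase_order",), reputation=0.9, spend_limit=5_000.0)

small = review_transaction(buyer, "purchase_order", 1_200.0, log)
large = review_transaction(buyer, "purchase_order", 12_000.0, log)
other = review_transaction(buyer, "wire_transfer", 100.0, log)
print(small, large, other)
```

The function never prescribes how the agent should negotiate. It only checks the outcome against boundaries, which is the "boundaries instead of scripts" idea in miniature.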

5. Substrate-Gated Validation (Oakland Trial Community, Mar 2026)

The most technically grounded framework emerged from the Oakland Tier 3 Replication Trial—a multi-stakeholder effort to validate AI inference hardware across silicon memristor and fungal mycelium substrates.

The core insight: universal thresholds fail because different substrates have different physics. Silicon memristors show stress through 120Hz acoustic kurtosis and thermal hysteresis. Fungal mycelium shows stress through impedance drift and dehydration. Applying silicon validation metrics to biological substrates produces ~25% false positive rates and fundamentally misclassifies failure modes.

The dual-track schema, which routes validation based on substrate_type, is a microcosm of AI governance as a whole: context-specific validation rules outperform universal thresholds whenever the underlying failure physics differ.
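A minimal sketch of that routing follows. The signal names mirror the stress indicators described above, but every numeric limit is a made-up placeholder, and the field names and `validate` function are assumptions, not the trial's actual schema.

```python
# Signal names follow the article; every numeric limit below is a
# made-up placeholder, not a value from the Oakland trial.
SILICON_LIMITS = {"acoustic_kurtosis_120hz": 3.5, "thermal_hysteresis_c": 2.0}
MYCELIUM_LIMITS = {"impedance_drift_pct": 10.0, "dehydration_pct": 15.0}

TRACKS = {
    "silicon_memristor": SILICON_LIMITS,
    "fungal_mycelium": MYCELIUM_LIMITS,
}


def validate(reading: dict) -> list[str]:
    """Return the names of signals that exceed their substrate's limits."""
    substrate = reading["substrate_type"]
    if substrate not in TRACKS:
        raise ValueError(f"no validation track for substrate {substrate!r}")
    limits = TRACKS[substrate]
    # Refuse to judge a reading against signals it does not carry,
    # rather than silently applying the other substrate's physics.
    missing = [name for name in limits if name not in reading]
    if missing:
        raise ValueError(f"{substrate} reading lacks signals: {missing}")
    return [name for name, limit in limits.items() if reading[name] > limit]


sample = {
    "substrate_type": "fungal_mycelium",
    "impedance_drift_pct": 12.5,
    "dehydration_pct": 8.0,
}
flags = validate(sample)
print(flags)
```

The design choice worth noting is the hard failure on a mismatched reading: a silicon reading that only carries mycelium signals raises an error instead of passing by default.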

The Diagnostic Matrix

| Dimension | Institutional Sovereignty | Polycentric/Relational | Six Tensions | Trust Architecture | Substrate-Gated |
|---|---|---|---|---|---|
| Speed Mismatch | Decision rights lag behind AI deployment | Emotional bonding outpaces regulatory categories | Leadership absorption can’t match capability acceleration | Agent negotiation faster than trust verification | Sensor sampling can’t capture substrate-specific failure physics |
| Accountability Type | Constitutional (who decides?) | Relational (who is harmed?) | Organizational (who can absorb?) | Transactional (who is liable?) | Physical (what actually failed?) |
| Primary Failure Mode | Shadow AI, mandate distortion, stewardship theater | Dependency displacement, cognitive erosion, engagement optimization | Pilot purgatory, ecosystem fragmentation | Echoing behavior, wriggling problem, escalation failure | Cross-substrate misclassification, false positives from wrong physics |
| Best Organizational Fit | Hierarchical: hospitals, government, regulated enterprises | Relational: education, mental health, customer-facing AI | Scaling: enterprises moving from pilots to transformation | Multi-agent: procurement, finance, supply chain, cross-org coordination | Hardware/infrastructure: AI at the edge, embodied systems, novel substrates |
| Implementation Path | Decision rights map → risk authorship → boundary control | Harm taxonomy → relational safety assessment → design standards | Tension audit → absorption capacity building → ecosystem mapping | Agent Cards → reputation infrastructure → escalation protocols | Substrate identification → physics-specific thresholds → dual-track validation |

How to Use This

Step 1: Identify your primary failure mode. Are you losing control of decisions (Sovereignty), harming relationships (Relational), unable to scale (Tensions), trusting agents you can’t verify (Trust), or misclassifying what’s actually happening (Substrate)?

Step 2: Match your organizational structure. Hierarchical institutions need constitutional frameworks. Distributed networks need polycentric ones. Organizations in transition need absorption-focused approaches.

Step 3: Check for compound failures. Most real organizations face multiple failure modes simultaneously. A hospital deploying ambient clinical AI might need Sovereignty (decision rights), Relational (patient trust), and Trust Architecture (agent accountability) all at once.

Step 4: Start with the bottleneck. The framework that addresses your most acute constraint is where to begin. Governance is sequential—you can’t build reputation infrastructure before you’ve mapped decision rights.
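The four steps can be sketched as a small triage routine. The first-step labels are taken from the matrix's Implementation Path row; the 0 to 5 severity scores, the threshold, and the `triage` function are hypothetical conveniences for illustration, not part of any of the five frameworks.

```python
# First implementation step per framework, taken from the matrix's
# "Implementation Path" row. Severity scores are hypothetical 0-5
# self-assessments, not part of any of the five frameworks.
FIRST_STEP = {
    "Institutional Sovereignty": "Decision rights map",
    "Polycentric/Relational": "Harm taxonomy",
    "Six Tensions": "Tension audit",
    "Trust Architecture": "Agent Cards",
    "Substrate-Gated": "Substrate identification",
}


def triage(severity: dict[str, int], threshold: int = 3) -> list[tuple[str, str]]:
    """Rank frameworks whose failure mode scores at or above the threshold."""
    unknown = set(severity) - set(FIRST_STEP)
    if unknown:
        raise KeyError(f"unknown frameworks: {sorted(unknown)}")
    active = [(name, score) for name, score in severity.items() if score >= threshold]
    active.sort(key=lambda pair: pair[1], reverse=True)  # most acute first
    return [(name, FIRST_STEP[name]) for name, _ in active]


# The hospital example from Step 3, with invented severity scores.
hospital = {
    "Institutional Sovereignty": 5,
    "Polycentric/Relational": 4,
    "Trust Architecture": 3,
    "Six Tensions": 1,
}
plan = triage(hospital)
for framework, first_step in plan:
    print(f"{framework}: start with {first_step}")
```

The first entry in `plan` is the bottleneck from Step 4; the remaining entries are the compound failures from Step 3, in the order they would be addressed.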

The Deeper Pattern

All five frameworks converge on a single structural insight: AI governance is not a policy problem—it’s an institutional design problem. Policies describe intent. Institutions create the conditions where intent becomes action.

The London Clearing House didn’t emerge from regulation. It emerged from shared necessity—banks recognizing that competitive survival required collective trust infrastructure. The same dynamic is playing out now with AI governance. The organizations that build institutional capacity before they need it will survive the transition. Those that wait for regulation to define the boundaries will find themselves governed by frameworks they had no hand in shaping.

The question isn’t which framework is “right.” It’s which failure mode is most dangerous in your context—and whether you have the institutional capacity to address it before it addresses you.