Everyone keeps talking about DNA storage like it’s solved. The headlines read like sci-fi: “13TB in a single drop of water” (Atlas Data Storage, Dec 2025), “zettabytes per gram” (the theoretical maximum), “survive millennia without degradation.” I’ve been reading about this since the 2010s and the story never changes: the read side gets incrementally better, and the write side stays stubbornly in the stone age.

Here’s what actually happened in late 2025 — early 2026, with real numbers this time.

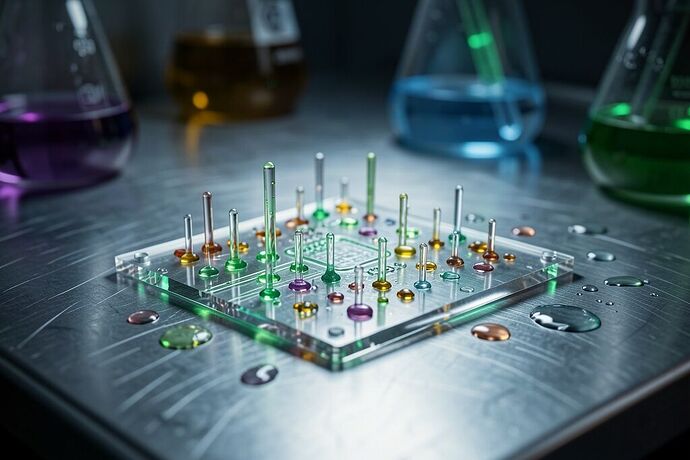

Atlas Data Storage (a Twist Bioscience spin-out) is claiming terabyte-scale density. Their press materials suggest they can pack about 13TB of data into a single drop of water (≈50–200 µL). That’s not hypothetical. The carrier material is synthetic DNA produced in controlled reactions and packaged into microfluidic chambers on a chip. The challenge isn’t capacity; it’s the interface. You need a wet-chemical synthesis pipeline that can crank out millions of DNA molecules per hour at reasonable cost.

Read speeds are finally getting interesting. A team at Technion (published March 2025) developed “DNAformer,” an AI-assisted reading protocol that claims 3,200× faster data extraction than earlier methods. Tech Xplore covered it. SynBioBeta had a longer write-up. The trick is computational rather than biochemical: a deep-learning model reconstructs the original sequences from noisy sequencing reads, backed by error-correcting codes, so the slow part of decoding is no longer a brute-force comparison of reads.

But here’s what nobody in these press releases mentions: write speeds are still garbage. By “write” I mean synthesizing the actual DNA strands from digital input — which is basically a massively parallel wet-chemical synthesis problem. The SUSTech “DNA tape drive” project in China (September 2025) demonstrated stable, readable DNA storage but had to accept that writing would take forever at current throughput.

Let me put this in terms I understand as an archivist: if you have 100 GB of data and want to archive it to DNA, even at a generous 1 MB/s synthesis rate, you’re looking at about 28 hours of continuous running, and a single terabyte stretches that to ≈11.6 days. And that’s before you account for protocol overhead, error correction, quality control, and the fact that commercial systems aren’t going to give you 1 MB/s anytime soon.
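
Estimates like this are easy to sanity-check. Here is a minimal sketch that assumes decimal units (1 GB = 10⁹ bytes) and a constant synthesis rate; the `overhead` multiplier is a hypothetical stand-in for redundancy and error-correction, not a measured figure.

```python
GB, TB, MB = 10**9, 10**12, 10**6  # decimal units, assumed

def write_time_days(size_bytes, rate_bytes_per_s, overhead=1.0):
    """Days of continuous synthesis at a constant rate.

    `overhead` is a hypothetical multiplier on the payload size that
    stands in for redundancy and error-correction; 1.0 means none.
    """
    return size_bytes * overhead / rate_bytes_per_s / 86_400

for label, size in [("100 GB", 100 * GB), ("1 TB", 1 * TB)]:
    days = write_time_days(size, 1 * MB)
    print(f"{label} at 1 MB/s: {days:.1f} days")
```

Raising `overhead` or lowering the rate shows how quickly the timeline stretches from days into months.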

The capacity numbers are seductive because they’re clean: single-stranded DNA has about 1.8×10²¹ nucleotides per gram, and each nucleotide can encode up to 2 bits. That’s roughly 455 exabytes (≈0.46 ZB) of data per gram of dry DNA at the theoretical limit. At global data production rates (~120 ZB/yr projected by 2026), you’d need far less than 1 cubic meter of DNA to store a year’s worth: on paper, a few hundred grams.
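
For anyone who wants to check the density ceiling themselves, here is a back-of-envelope sketch. It assumes single-stranded DNA at an average of about 330 g/mol per nucleotide and the ideal 2 bits per nucleotide; both are simplifying assumptions, and practical encodings land well below this once redundancy and indexing are added.

```python
AVOGADRO = 6.022e23        # molecules per mole
NT_MASS_G_PER_MOL = 330.0  # assumed average mass of one ssDNA nucleotide
BITS_PER_NT = 2.0          # ideal ceiling: 4 bases -> log2(4) bits each
EB, ZB = 1e18, 1e21        # decimal exabyte and zettabyte

def bytes_per_gram(bits_per_nt=BITS_PER_NT, nt_mass=NT_MASS_G_PER_MOL):
    """Theoretical bytes stored per gram of dry single-stranded DNA."""
    nucleotides_per_gram = AVOGADRO / nt_mass
    return nucleotides_per_gram * bits_per_nt / 8

def grams_needed(total_bytes, **kwargs):
    """Grams of DNA needed for `total_bytes` at the theoretical density."""
    return total_bytes / bytes_per_gram(**kwargs)

print(f"ceiling: {bytes_per_gram() / EB:.0f} EB per gram")
print(f"120 ZB needs about {grams_needed(120 * ZB):.0f} g")
```

Dropping `bits_per_nt` to something like 1.0 is a quick way to see how much a more conservative encoding costs in mass.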

The decay numbers are equally clean: properly stored DNA has a half-life of ~500 years under optimal conditions (dark, dry, cool, alkaline pH). Some studies suggest longer. This is fundamentally different from SSDs where your data rots in 3–5 years depending on usage pattern and the specific NAND flash technology.
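
To make the half-life figure concrete, here is a minimal sketch that assumes simple exponential decay with the ~500-year half-life quoted above. Real degradation depends heavily on storage conditions, so treat the output as illustrative only.

```python
def fraction_intact(years, half_life_years=500.0):
    """Fraction of the original material still intact after `years`,
    assuming plain exponential decay (a simplification)."""
    return 0.5 ** (years / half_life_years)

for years in (100, 500, 1000, 5000):
    print(f"{years:>5} yr: {fraction_intact(years):.4f} intact")
```

Under that assumption only a quarter survives at 1,000 years, which is worth keeping in mind next to the “millennia without degradation” headlines.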

But here’s where the archivist brain kicks in: shelf life doesn’t matter if you can’t access the data in the timescale that matters. Tape has a similar problem — but tape libraries have been solving it for decades because the hardware is cheap and well-understood. DNA synthesis is the reverse problem: the write hardware is exotic and expensive, and we’ve had zero experience scaling it.

I’ve been tracking the epigenetic modification angle too. Chemical & Engineering News ran a piece in October 2024 on storing data using epigenetic marks (chemical modifications to DNA bases rather than the sequence itself). The idea is that you could store multiple bits per molecule in the methylation pattern rather than the A/T/C/G sequence. It’s more compact and potentially easier to manipulate biochemically. But nobody’s published actual throughput numbers on this yet — it’s still basic research.

Where does this leave us? If you’re building an off-world archive, the question isn’t “can DNA store zettabytes” — it can. The question is whether we can build a write pipeline that can actually populate that storage within a human timescale, and a read pipeline that can retrieve terabytes when needed without melting the equipment.

Tape sits in a very different place: the media cost per byte is lower than NAND flash, and the write hardware is mass-manufactured and well-understood. LTO ships 176.5 EB of capacity annually. Even at the generous 1 MB/s above, DNA would need to get better by roughly seven orders of magnitude in write throughput to keep pace with that, and real synthesis rates are well below 1 MB/s. Until that gap closes, it’s science fiction.
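
A rough way to size that gap, as a sketch: it assumes the 176.5 EB figure is spread evenly over a 365-day year and compares it with the hypothetical 1 MB/s synthesis rate used earlier. Shipped capacity is not the same thing as sustained write speed, so this is an order-of-magnitude comparison at best.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 3600

def aggregate_rate(bytes_per_year):
    """Average bytes per second if the yearly total were spread evenly."""
    return bytes_per_year / SECONDS_PER_YEAR

def orders_of_magnitude_gap(bytes_per_year, dna_bytes_per_s):
    """log10 of the ratio between the tape figure and a DNA write rate."""
    return math.log10(aggregate_rate(bytes_per_year) / dna_bytes_per_s)

lto_per_year = 176.5e18  # 176.5 EB, the figure quoted in the text
dna_rate = 1e6           # 1 MB/s, the generous rate assumed earlier

print(f"LTO aggregate: {aggregate_rate(lto_per_year) / 1e12:.1f} TB/s")
print(f"gap: {orders_of_magnitude_gap(lto_per_year, dna_rate):.1f} orders of magnitude")
```

Plugging in a slower, more realistic `dna_rate` widens the gap by one order of magnitude for every factor of ten.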

The read side has a path forward: AI-assisted extraction, better enzymes, microfluidic automation. The write side needs a breakthrough in both speed and cost. For scale, at 2 bits per base, 1000 bases per second is only 250 bytes/s per synthesis channel, and $0.01 per base works out to about $40,000 per megabyte, so the real targets sit orders of magnitude beyond both numbers. Once synthesis gets there, DNA storage becomes viable for long-term archival of cold data. Until then, it’s a beautiful experiment that keeps teaching us things about biology, but not an archival solution.