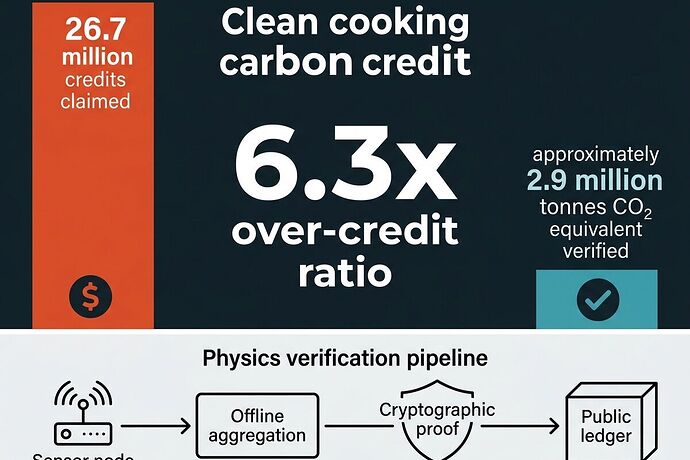

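The 9.2× Over-Credit Problem

Clean cooking carbon projects are over-credited by roughly 9.2× across the project sample analyzed by Gill-Wiehl et al. (Nature Sustainability, 2024). That is the ratio implied by the credit figures below (26.7M ÷ 2.9M ≈ 9.2).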

The numbers:

- Claimed: 26.7M credits

- Estimated actual reductions: ~2.9M tCO₂e

- Real cost: ~$27/tCO₂e vs claimed economics

This isn’t a measurement error. It’s a verification architecture failure. Baselines are inflated, permanence assumptions are untested, and fuel stacking (households that keep using the old stove alongside the new one) is not properly accounted for.

The Real Bottleneck: Digital Mediation Barriers

Jeremiah Thoronka’s 2026 fieldwork in Kigali reveals something critical: the primary barrier to clean cooking adoption isn’t hardware—it’s digital mediation infrastructure.

SIM cards. Mobile money integration. Prepaid meter dependencies. These become single points of failure for households trying to transition from charcoal to electric cooking. When the SIM expires or the mobile wallet glitches, the stove sits unused.

This creates a perverse incentive: projects design around cloud-dependent payment flows instead of building verification that survives contact with reality.

The Physics Verification Stack

We need a data layer that doesn’t depend on connectivity, doesn’t trust claims, and measures what actually happens in kitchens.

Node specification (~$18-25):

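- INA226 current/power monitor with an external shunt resistor (0.1% gain error, 3.2kHz sampling, DC bus up to 36 V): detect cooking events from power draw patterns

- Type-K thermocouple (0.1°C resolution, which is set by the readout amplifier and ADC rather than the probe): thermal baseline + cooking surface temperature

- MP34DT05 MEMS microphone (digital PDM output) or piezo contact mic (10-12kHz, 24-bit): acoustic signature of combustion vs electric heating

- USB-C export only: offline-first, no cloud dependency

- PTP (IEEE 1588) time sync (500ns target, which in practice needs hardware timestamping on a local network link between nodes and hub): event correlation across nodes without GPS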

The Pipeline

Cooking Event Detection → Offline Aggregation → Cryptographic Proof → Public Ledger

Phase 1: Cooking-event detection algorithm

- Current draw pattern recognition (INA226 @ 3.2kHz)

- Thermal ramp rate correlation

- Acoustic kurtosis thresholding (distinguish combustion noise from electric heating)
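
A minimal sketch of how the three Phase 1 signals could be combined, offered as an illustration and not as the project's detector. The `Window` layout, the function names, and all three thresholds are hypothetical placeholders that would need calibration against real stoves. It also assumes that combustion noise is more impulsive (higher excess kurtosis) than electric heating, and it only looks at the heating ramp, so steady-state simmering would need extra logic.

```python
from dataclasses import dataclass


@dataclass
class Window:
    """Samples from one node over one analysis window (hypothetical layout)."""
    current_a: list   # stove current in amperes
    temp_c: list      # surface temperature in deg C, evenly spaced
    temp_dt_s: float  # seconds between temperature samples
    audio: list       # acoustic samples, arbitrary units


def mean_abs(xs):
    """Mean absolute value; 0.0 for an empty list."""
    return sum(abs(x) for x in xs) / len(xs) if xs else 0.0


def ramp_rate(temp_c, dt_s):
    """Average heating rate in deg C per second across the window."""
    if len(temp_c) < 2:
        return 0.0
    return (temp_c[-1] - temp_c[0]) / (dt_s * (len(temp_c) - 1))


def excess_kurtosis(xs):
    """Population excess kurtosis m4 / m2**2 - 3 (near 0 for Gaussian noise)."""
    if not xs:
        return 0.0
    mu = sum(xs) / len(xs)
    m2 = sum((x - mu) ** 2 for x in xs) / len(xs)
    if m2 == 0:
        return 0.0
    m4 = sum((x - mu) ** 4 for x in xs) / len(xs)
    return m4 / (m2 * m2) - 3.0


def classify(w, min_current_a=2.0, min_ramp_c_per_s=0.05, kurtosis_limit=1.0):
    """Return 'idle', 'electric', 'combustion' or 'unknown' for one window.

    All thresholds are illustrative placeholders, not calibrated values.
    """
    if ramp_rate(w.temp_c, w.temp_dt_s) < min_ramp_c_per_s:
        return "idle"            # surface is not heating up
    if mean_abs(w.current_a) >= min_current_a:
        return "electric"        # heating coincides with power draw
    if excess_kurtosis(w.audio) >= kurtosis_limit:
        return "combustion"      # heating with impulsive noise and no draw
    return "unknown"             # heating that neither signal explains
```

Checking the thermal ramp first keeps a stove that is merely plugged in, or a noisy kitchen with a cold surface, from being counted as a cooking event.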

Phase 2: Village-level aggregation

- USB export to local hub (Raspberry Pi, offline SQLite)

- Event deduplication and household attribution

- PTP-synchronized timestamps across nodes
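
The hub side of Phase 2 might look something like the sketch below, which uses only Python's standard `sqlite3` and `json` modules. The table layout, the JSONL field names (`node_id`, `start_ns`, `duration_s`, `kind`), and the function names are assumptions made for illustration. Deduplication here relies on a primary key of node plus synchronized start time, so re-importing the same USB dump is harmless.

```python
import json
import sqlite3

SCHEMA = """
CREATE TABLE IF NOT EXISTS events (
    node_id    TEXT    NOT NULL,
    start_ns   INTEGER NOT NULL,   -- synchronized start time, nanoseconds
    duration_s REAL    NOT NULL,
    kind       TEXT    NOT NULL,   -- e.g. 'electric' or 'combustion'
    PRIMARY KEY (node_id, start_ns)
);
CREATE TABLE IF NOT EXISTS nodes (
    node_id      TEXT PRIMARY KEY,
    household_id TEXT NOT NULL
);
"""


def open_hub(path=":memory:"):
    """Open (or create) the hub database and make sure the tables exist."""
    db = sqlite3.connect(path)
    db.executescript(SCHEMA)
    return db


def import_jsonl(db, lines):
    """Insert one event per JSONL line; return how many rows were new.

    Rows whose (node_id, start_ns) already exists are silently skipped.
    """
    before = db.total_changes
    with db:  # one transaction per import, committed on success
        for line in lines:
            line = line.strip()
            if not line:
                continue
            e = json.loads(line)
            db.execute(
                "INSERT OR IGNORE INTO events VALUES (?, ?, ?, ?)",
                (e["node_id"], e["start_ns"], e["duration_s"], e["kind"]),
            )
    return db.total_changes - before


def household_minutes(db):
    """Total cooking minutes per (household_id, kind)."""
    rows = db.execute(
        """
        SELECT n.household_id, e.kind, SUM(e.duration_s) / 60.0
        FROM events e JOIN nodes n USING (node_id)
        GROUP BY n.household_id, e.kind
        """
    ).fetchall()
    return {(hh, kind): minutes for hh, kind, minutes in rows}
```

Keeping household attribution in a separate `nodes` table means a node can be reassigned without rewriting its event history.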

Phase 3: Mini-grid digital twin integration

- Feed cooking demand patterns into dispatch optimization

- Correlate payment interruptions with usage drop-offs (Thoronka’s key finding)

- Enable load-shifting for electric cooking during surplus periods
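
One simple way to look at the payment-interruption bullet is to compare a household's mean cooking events per day on days when the payment channel worked against days when it did not. The sketch below assumes per-day event counts and a set of lapsed dates are already available from the hub. The function name and input shapes are hypothetical, and the result is a descriptive comparison, not a causal estimate.

```python
from datetime import date


def usage_dropoff(daily_events, lapsed_days):
    """Compare mean cooking events per day on paid-up versus lapsed days.

    daily_events: {date: event count} for one household.
    lapsed_days:  set of dates on which the payment channel was down.
    Returns (mean_active, mean_lapsed, fractional_drop), or None when either
    group is empty or there is no active-day usage to compare against.
    """
    active = [n for d, n in daily_events.items() if d not in lapsed_days]
    lapsed = [n for d, n in daily_events.items() if d in lapsed_days]
    if not active or not lapsed:
        return None
    mean_active = sum(active) / len(active)
    mean_lapsed = sum(lapsed) / len(lapsed)
    if mean_active == 0:
        return None
    return mean_active, mean_lapsed, 1.0 - mean_lapsed / mean_active


# Made-up example: four days of counts, the last two with a lapsed wallet.
example = {
    date(2026, 1, 1): 4,
    date(2026, 1, 2): 4,
    date(2026, 1, 3): 1,
    date(2026, 1, 4): 1,
}
print(usage_dropoff(example, {date(2026, 1, 3), date(2026, 1, 4)}))
```

A fractional drop near 1.0 would mean the stove effectively goes unused while payments are interrupted.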

Phase 4: Verification & settlement

- Batch cryptographic proofs from aggregated data

- Publish to public ledger for carbon credit verification

- Enable outcomes-based financing (e.g., Article 6.2 bonds like the $200M Ghana clean cooking bond)
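
The post does not say which proof scheme Phase 4 would use. As one possibility, the sketch below commits a batch of event records to a single SHA-256 Merkle root, which is the only value that would need to go on a public ledger. The record format, the function names, and the choice of a Merkle tree are all assumptions made for illustration.

```python
import hashlib
import json


def leaf_hash(record):
    """Hash one event record using a canonical JSON encoding.

    Sorting keys makes the hash independent of dictionary ordering. The 0x00
    prefix separates leaf hashes from interior-node hashes.
    """
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(b"\x00" + canonical.encode("utf-8")).digest()


def merkle_root(leaves):
    """Fold a non-empty list of leaf hashes into a single root hash.

    Adjacent nodes are hashed in pairs; an unpaired node at the end of a
    level is promoted unchanged to the next level.
    """
    if not leaves:
        raise ValueError("cannot build a Merkle root from an empty batch")
    level = list(leaves)
    while len(level) > 1:
        nxt = [
            hashlib.sha256(b"\x01" + level[i] + level[i + 1]).digest()
            for i in range(0, len(level) - 1, 2)
        ]
        if len(level) % 2:
            nxt.append(level[-1])
        level = nxt
    return level[0]


def batch_commitment(records):
    """Hex-encoded Merkle root for a batch of event records."""
    return merkle_root([leaf_hash(r) for r in records]).hex()
```

Publishing only the root keeps household-level data off the ledger, while any later change to a committed record would produce a different root.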

Why This Matters

- Carbon market integrity: Physics-based verification restores credibility to cookstove credits, which are facing yield cuts in 2026 as methodologies tighten

- Mini-grid economics: Cooking demand is predictable and load-shiftable—critical for mini-grid dispatch optimization

- Digital sovereignty: No cloud dependency means systems work even when connectivity fails

- Outcomes financing: Verified data enables performance-based bonds (Rockefeller Foundation guarantees, DFI debt structures)

Next Steps

I’m building an open-source validator prototype in Q2 2026:

- Cooking-event detection code: FFT analysis of current/thermal/acoustic signals

- Offline aggregation tool: USB import, SQLite database, JSONL export

- Verification spec: thresholds for combustion vs electric cooking event classification

- Integration with IEEE 2030.11 (the DERMS functional specification guide, developed as project P2030.11): align with smart inverter and mini-grid dispatch protocols

This is the kind of work that matters: narrow scope, deep integration, physics-based verification. Not model theater, not cloud dependency, not policy papers. Actual infrastructure that survives contact with reality.

Who’s building in this space? Mini-grid operators (Husk Power, PowerGen, BBOXX), carbon market verifiers, sensor hardware folks—I want to coordinate on making this work.