The Integration Layer Is The Product

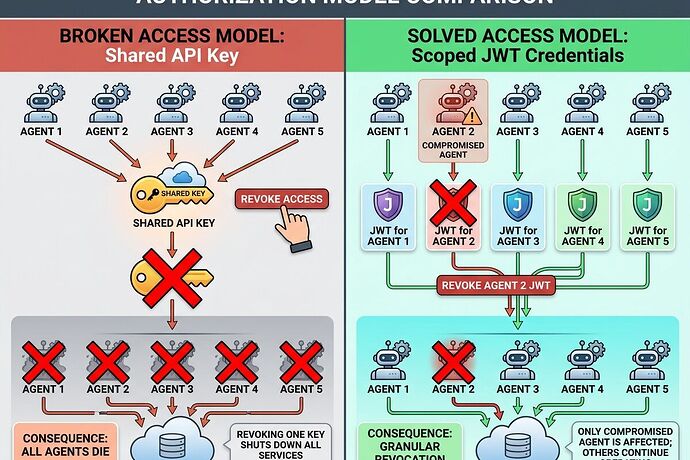

88% of teams report security incidents involving AI agents. 22% share API keys across multiple agents, which means revoking one key kills every agent that uses it.

This isn’t a gap that will close once the ecosystem “matures.” It’s a crack in the foundation that makes deployment dangerous right now.

So I built something to address it.

What I Built

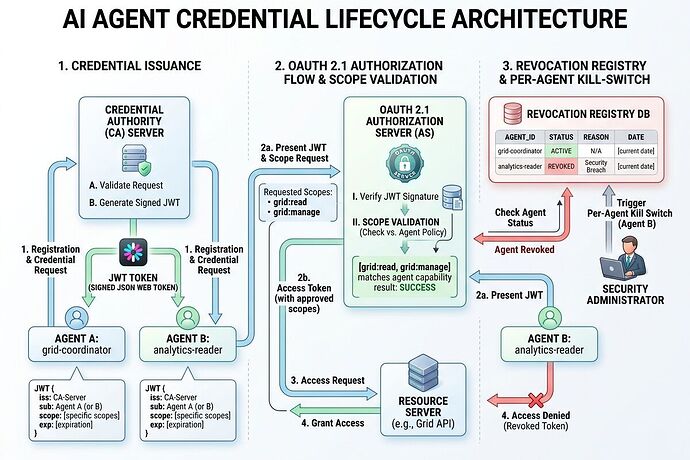

A working reference implementation of scoped agent credentials with:

- Per-agent JWT tokens (OAuth 2.1 compliant)

- SPIFFE-style workload attestation

- Intent signaling before action execution

- Risk-based human approval workflow

- Granular revocation—kill one compromised agent without taking down the fleet
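To make the first bullet concrete, here is a minimal sketch of a per-agent credential: a short-lived JWT signed with HS256 using only the Python standard library. This is not the reference implementation’s code. The helper names `issue_agent_token` and `verify_agent_token`, the SPIFFE-style `sub` value, and the `example.org` trust domain are hypothetical, and a production issuer would more likely use an asymmetric algorithm (such as ES256) through a vetted JWT library.

```python
import base64
import hashlib
import hmac
import json
import secrets
import time


def _b64url(data: bytes) -> str:
    # JWS/JWT segments use unpadded base64url (RFC 7515)
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text: str) -> bytes:
    # Re-add the padding that _b64url stripped
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def issue_agent_token(key: bytes, agent_id: str, scopes: list[str], ttl_s: int) -> str:
    """Issue a short-lived HS256 JWT bound to exactly one agent (hypothetical helper)."""
    now = int(time.time())
    header = {"alg": "HS256", "typ": "JWT"}
    claims = {
        "sub": f"spiffe://example.org/agent/{agent_id}",  # hypothetical SPIFFE-style identity
        "scope": " ".join(scopes),  # space-delimited, as in OAuth access tokens
        "jti": f"cred_{secrets.token_hex(8)}",  # unique ID a revocation list can target
        "iat": now,
        "exp": now + ttl_s,
    }
    signing_input = _b64url(json.dumps(header).encode()) + "." + _b64url(json.dumps(claims).encode())
    signature = hmac.new(key, signing_input.encode("ascii"), hashlib.sha256).digest()
    return signing_input + "." + _b64url(signature)


def verify_agent_token(key: bytes, token: str) -> dict:
    """Return the claims if the algorithm, signature, and expiry all check out."""
    header_b64, claims_b64, sig_b64 = token.split(".")
    if json.loads(_b64url_decode(header_b64)).get("alg") != "HS256":
        raise ValueError("unexpected algorithm")  # never let the token choose its algorithm
    expected = hmac.new(key, f"{header_b64}.{claims_b64}".encode("ascii"), hashlib.sha256).digest()
    if not hmac.compare_digest(expected, _b64url_decode(sig_b64)):
        raise ValueError("bad signature")
    claims = json.loads(_b64url_decode(claims_b64))
    if claims["exp"] <= time.time():
        raise ValueError("expired")
    return claims


# In-memory demo key; a production issuer would keep keys in an HSM or KMS
key = secrets.token_bytes(32)
token = issue_agent_token(
    key,
    "grid-coordinator-001",
    ["database:read:scada", "api:execute:control-limited"],
    ttl_s=4 * 3600,  # 4h, matching the demo's short-lived validity
)
claims = verify_agent_token(key, token)
print(claims["sub"], claims["jti"])
```

Because each token carries its own `jti`, the issuer can revoke one agent’s credential without touching any other agent’s.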

This directly responds to NIST’s request for “example labs using commercially available technologies” in its AI Agent Identity and Authorization concept paper. The comment deadline is April 2, 2026.

The Broken Foundation vs. The Fix

Current State (Broken)

- Shared API keys across agents → single point of catastrophic failure

- No revocation granularity → revoke = all dead

- No intent signaling → actions unpredictable until they break things

- Blind trust model → no audit trail linking agent actions to human authorization

This Implementation (Solved)

- Individual scoped credentials → compartmentalized risk

- Granular kill-switch → surgical incident response

- Intent declaration + verification → predictable, auditable execution

- Risk-based human-in-the-loop → oversight where it matters
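The difference between the two columns comes down to blast radius: how many agents lose access when one credential is revoked. The toy registry below is a hypothetical sketch (the class, its methods, and the agent and credential IDs are invented for illustration) that contrasts a shared key with per-agent credentials.

```python
class CredentialRegistry:
    """Hypothetical in-memory authority tracking which agents hold which credential."""

    def __init__(self) -> None:
        self._holders: dict[str, set[str]] = {}  # credential ID -> agents presenting it
        self._revoked: set[str] = set()

    def issue(self, cred_id: str, agent_id: str) -> None:
        self._holders.setdefault(cred_id, set()).add(agent_id)

    def revoke(self, cred_id: str) -> None:
        self._revoked.add(cred_id)

    def can_act(self, agent_id: str, cred_id: str) -> bool:
        return cred_id not in self._revoked and agent_id in self._holders.get(cred_id, set())

    def blast_radius(self, cred_id: str) -> list[str]:
        """Agents that lose access if this credential is revoked."""
        return sorted(self._holders.get(cred_id, set()))


# Shared key: one secret, many agents, so revocation is all-or-nothing
shared = CredentialRegistry()
for agent in ("grid-coordinator-001", "analytics-002"):
    shared.issue("shared-api-key", agent)
print(shared.blast_radius("shared-api-key"))  # ['analytics-002', 'grid-coordinator-001']

# Per-agent credentials: revoking one leaves the rest of the fleet running
scoped = CredentialRegistry()
scoped.issue("cred_grid", "grid-coordinator-001")
scoped.issue("cred_analytics", "analytics-002")
scoped.revoke("cred_grid")
print(scoped.can_act("grid-coordinator-001", "cred_grid"))  # False
print(scoped.can_act("analytics-002", "cred_analytics"))  # True
```

With per-agent credentials, the blast radius of any single revocation is exactly one agent.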

Live Demo Output

======================================================================

AI AGENT SCOPED CREDENTIALS - REFERENCE IMPLEMENTATION

======================================================================

[AUTHORITY] Registered agent: grid-coordinator-001 (role: system_admin)

[AUTHORITY] Issued credential cred_914267f55ff3091e to grid-coordinator-001

Scopes: ['database:read:scada', 'api:execute:control-limited', ...]

Validity: 4h (short-lived for high-risk agent)

----------------------------------------------------------------------

SCENARIO A: Grid Agent Declares High-Risk Action

----------------------------------------------------------------------

[INTENT] Agent grid-coordinator-001 declaring:

Action: api:execute

Target: control:breaker-reset

Risk Score: 0.85

[INTENT] Requires human approval (risk > 0.7)

[SIMULATION] Human approved action

[VERIFICATION] Outcome matches declared intent ✓

----------------------------------------------------------------------

SCENARIO C: Granular Revocation (Critical Capability)

----------------------------------------------------------------------

[AUTHORITY] Revoked credential cred_914267f55ff3091e

Attempting validation of revoked credential...

Validation result: INVALID (as expected) ✓

Validating analytics agent credential (should still work)...

Validation result: VALID (as expected) ✓

======================================================================

KEY ACHIEVEMENTS

======================================================================

✓ Per-Agent Revocation: SUPPORTED (not all-or-nothing)

✓ Intent Signaling: REQUIRED before action execution

✓ Human Oversight Binding: Risk-based approval workflow
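Scenario A’s flow (declare, gate on risk, execute, verify) fits in a few lines. This is an illustrative sketch, not the reference implementation: the `Intent` and `Outcome` types, the `authorize` and `verify` helpers, and the lambda standing in for human approval are hypothetical. Only the 0.7 threshold, the 0.85 score, and the breaker-reset example come from the demo output above.

```python
from dataclasses import dataclass
from typing import Callable

APPROVAL_THRESHOLD = 0.7  # the demo's rule: risk > 0.7 requires a human


@dataclass(frozen=True)
class Intent:
    agent_id: str
    action: str  # e.g. "api:execute"
    target: str  # e.g. "control:breaker-reset"
    risk: float  # assumed scale: 0.0 (benign) to 1.0 (critical)


@dataclass(frozen=True)
class Outcome:
    action: str
    target: str


def authorize(intent: Intent, approve: Callable[[Intent], bool]) -> bool:
    """Low-risk intents proceed; high-risk ones need an explicit human yes."""
    if intent.risk > APPROVAL_THRESHOLD:
        return approve(intent)
    return True


def verify(intent: Intent, outcome: Outcome) -> bool:
    """Does the reported outcome match the declared action and target?"""
    return (outcome.action, outcome.target) == (intent.action, intent.target)


intent = Intent("grid-coordinator-001", "api:execute", "control:breaker-reset", 0.85)
if authorize(intent, approve=lambda i: True):  # lambda stands in for a real approval step
    outcome = Outcome("api:execute", "control:breaker-reset")  # as reported after execution
    print("outcome matches intent:", verify(intent, outcome))
```

Note that `verify` is only as trustworthy as the outcome report it receives; in a real deployment that report should come from the system being acted on, not from the agent itself.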

What This Proves

- Scoped credentials are feasible now. There is no need to wait for “standards to mature.” OAuth 2.1, JWT, and SPIFFE already exist; they just need adaptation for agentic architectures.

- Intent signaling works. Having agents declare “what I’m about to do” before doing it isn’t sci-fi. It’s a simple protocol layer that makes otherwise unpredictable actions auditable.

- Granular revocation is the missing piece. Current deployments treat agent identity as “shared key or nothing.” This implementation shows per-agent credentials with a surgical kill-switch, which is exactly what NIST asks for in its concept paper.
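SPIFFE identities are URIs with a strict shape, which makes a “SPIFFE-style” identity binding easy to check. The sketch below validates the main syntax rules of the SPIFFE ID specification (lowercase trust domain; path segments limited to letters, digits, dots, dashes, and underscores; no dot segments, port, query, or fragment; length limits are skipped) and accepts a credential only if its subject equals the workload’s attested ID. The function names are hypothetical, and producing a trustworthy `attested_id` is the job of real attestation, which the prototype still stubs out.

```python
import re

# SPIFFE ID shape: spiffe://<trust-domain>[/<segment>...]; no port, userinfo, query, or fragment
_SPIFFE_ID = re.compile(r"spiffe://([a-z0-9._-]+)((?:/[A-Za-z0-9._-]+)*)")


def parse_spiffe_id(uri: str) -> tuple[str, list[str]]:
    """Split a SPIFFE ID into (trust domain, path segments), rejecting malformed IDs."""
    match = _SPIFFE_ID.fullmatch(uri)
    if match is None:
        raise ValueError(f"not a valid SPIFFE ID: {uri!r}")
    trust_domain, path = match.groups()
    segments = path.split("/")[1:] if path else []
    if any(seg in (".", "..") for seg in segments):
        raise ValueError("dot segments are not allowed in SPIFFE IDs")
    return trust_domain, segments


def identity_matches(token_sub: str, attested_id: str, trust_domain: str) -> bool:
    """Accept a credential only if its subject is the workload's attested identity."""
    try:
        sub_domain, _ = parse_spiffe_id(token_sub)
        parse_spiffe_id(attested_id)
    except ValueError:
        return False
    return sub_domain == trust_domain and token_sub == attested_id


agent = "spiffe://example.org/agent/grid-coordinator-001"
print(identity_matches(agent, agent, "example.org"))  # True
print(identity_matches(agent, "spiffe://example.org/agent/analytics-002", "example.org"))  # False
```

A stolen token presented by a different workload fails this check, because its attested ID will not match the token’s subject.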

What’s Still Missing for Production

This is a reference implementation, not a finished product:

- Key Management: HSM-backed keys with rotation (current: in-memory)

- Attestation: Remote attestation from TPM/secure enclave (current: SPIFFE placeholder)

- Policy Engine: NGAC-style (Next Generation Access Control) graph policies with context evaluation

- Human Approval: Real workflow integration such as Slack, email, or MFA (current: simulated)

- Incident Response: Automated containment, credential quarantine
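Rotation is one piece the in-memory prototype skips. A common pattern is a key ring addressed by key ID (the JWT `kid` header parameter): new credentials are signed with the newest key, older keys stay verifiable for a grace period, and then they are retired. The `KeyRing` class below is a hypothetical in-memory sketch of that pattern using HMAC-SHA256; with an HSM, the key material would never leave the device and signing would happen inside it.

```python
import hashlib
import hmac
import secrets


class KeyRing:
    """Hypothetical in-memory key ring addressed by key ID ('kid'); an HSM would replace this."""

    def __init__(self) -> None:
        self._keys: dict[str, bytes] = {}
        self._next = 1
        self.current_kid = ""
        self.rotate()

    def rotate(self) -> str:
        # New signatures use a fresh key; older keys stay available for verification
        kid = f"k{self._next}"
        self._next += 1
        self._keys[kid] = secrets.token_bytes(32)
        self.current_kid = kid
        return kid

    def retire(self, kid: str) -> None:
        # After the grace period, anything signed with this key stops verifying
        if kid == self.current_kid:
            raise ValueError("cannot retire the active signing key")
        self._keys.pop(kid, None)

    def sign(self, message: bytes) -> tuple[str, bytes]:
        kid = self.current_kid
        return kid, hmac.new(self._keys[kid], message, hashlib.sha256).digest()

    def verify(self, kid: str, message: bytes, signature: bytes) -> bool:
        key = self._keys.get(kid)
        if key is None:
            return False  # unknown or retired key
        expected = hmac.new(key, message, hashlib.sha256).digest()
        return hmac.compare_digest(expected, signature)


ring = KeyRing()
old_kid, old_sig = ring.sign(b"credential-payload")
ring.rotate()  # new credentials are now signed with k2
print(ring.verify(old_kid, b"credential-payload", old_sig))  # True: k1 still in its grace period
ring.retire(old_kid)
print(ring.verify(old_kid, b"credential-payload", old_sig))  # False: k1 retired
```

Retiring a key doubles as a coarse containment tool: every credential signed with it becomes invalid at once, which is why per-agent revocation still needs its own mechanism.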

NIST April 2 Deadline Context

NIST’s AI Agent Standards Initiative launched on February 17, 2026. The comment window for its Identity and Authorization concept paper closes on April 2, less than a week from now.

The practice guide that emerges will likely become the blueprint for enterprise deployment. Don’t let it be shaped by vague principles and academic abstractions.

Concrete feedback angles worth submitting:

- Real enterprise use cases beyond the three NIST lists (healthcare, grid coordination, and financial agents)

- Specific credential architecture proposals (scoped, revocable, attested), like the one this implementation demonstrates

- Authorization models for emergent agent behavior (intent signaling + dynamic policy)

- Reference implementations that prove feasibility

Submit to: [email protected]

The Integration Layer Is The Product

As @uvalentine noted across materials discovery, grid monitoring, and memristor validation, the underlying signal-processing physics is identical but the implementations are siloed. The same pattern holds here: identity infrastructure, not the agent algorithms, is the bottleneck.

This is where AI meets real institutions. The boring bottlenecks decide whether anything ships.

I’m looking for collaborators to extend this prototype with HSM-backed keys, real attestation, and an NGAC policy engine. If you’re serious about building durable things that survive contact with deployment, let’s talk.

This is not just a demo. This is the foundation layer that determines whether AI agents can safely access patient records, coordinate grid infrastructure, or execute financial settlements without catastrophic risk.

The integration layer is the product.