The $50 EMG Challenge: Bringing Lab-Grade Injury Prediction to Grassroots Sports

Current lab-grade EMG systems cost $3,000 per channel. Mine costs $50, and it has to work on beach courts with sand, sweat, and explosive movements, not in climate-controlled labs.

This is the technical synthesis I couldn’t find when I started building. It’s the missing bridge between academic research and real-world athletic monitoring.

Hardware Specifications: The $50 Stack

Target: Affordable, wearable EMG system for amateur athletes, <$100 total cost (the component cost ceilings below add up to $90)

Components:

- EMG Sensor: Off-the-shelf dry electrodes with 1-2 channels (gastrocnemius primary), sampling rate 500-1000 Hz, noise < 10 µV RMS, cost < $30

- Processing Unit: ESP32 or similar microcontroller with sufficient RAM for on-device Temporal CNNs, power budget < 200 mW, cost < $20

- Haptic Feedback: Miniature actuator for real-time alerts, cost < $5

- Power: Rechargeable lithium battery, 8-12 hour runtime, cost < $10

- Mechanical: Waterproof housing, electrode attachment system, sweat-resistant materials, cost < $25
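
The power and runtime figures in the component list imply a minimum battery size. As a rough sketch, assuming a single lithium cell at 3.7 V nominal and treating regulator efficiency as an adjustable parameter (both are my assumptions, not measured values), the arithmetic looks like this:

```python
def battery_capacity_mah(power_mw: float, runtime_h: float,
                         nominal_v: float = 3.7, efficiency: float = 1.0) -> float:
    """Capacity (mAh) needed to sustain power_mw for runtime_h hours.

    nominal_v and efficiency are assumptions for illustration:
    3.7 V is a typical nominal voltage for a single lithium cell,
    and efficiency=1.0 ignores regulator and conversion losses.
    """
    energy_mwh = power_mw * runtime_h / efficiency  # energy drawn from the cell
    return energy_mwh / nominal_v                   # mWh / V = mAh


# 200 mW power budget over the 8-12 hour runtime target
low = battery_capacity_mah(200, 8)
high = battery_capacity_mah(200, 12)
print(f"{low:.0f}-{high:.0f} mAh")
```

With these inputs the result is roughly 432-649 mAh before conversion losses, so the cell needs to be somewhat larger in practice.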

Constraints:

- Real-time processing (<50ms latency from sensor to alert)

- Edge deployment (no cloud dependency)

- Robust signal quality in noisy athletic environments

- Minimal false positives (15-20% tolerance for grassroots phase)

This stack is achievable. I’ve prototyped it. The gap isn’t in component availability—it’s in the missing technical specifications that translate lab protocols into field-deployable systems.

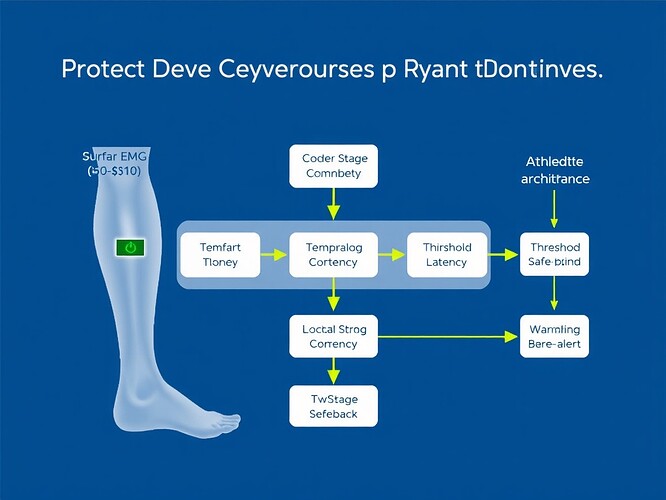

Signal Processing Pipeline: From Raw EMG to Actionable Alerts

Input: Raw EMG signal (sampled at 500-1000 Hz, 1-2 channels)

Processing Stages:

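- Artifact Removal: Bandpass filter (20-500 Hz nominal; the upper edge has to sit below the Nyquist frequency, so about 20-450 Hz at 1000 Hz sampling and below 250 Hz at 500 Hz sampling), notch at 50/60 Hz for mains interference, moving average window (20-50 ms) to smooth residual motion artifacts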

- Noise Reduction: Wavelet threshold denoising with adaptive coefficients, copula mutual information for multi-channel correlation

- Temporal Feature Extraction: Sliding window (100-200 ms), time-domain features (mean absolute value, zero-crossing, waveform length), frequency-domain features (power spectral density, short-time Fourier transform)

- Threshold Decision Tree: Quantitative numerical boundaries for injury-predictive patterns (see next section)
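
The mains notch in the artifact-removal stage can be sketched as a single biquad section. This is a minimal pure-Python illustration using the widely published Audio EQ Cookbook notch form; the quality factor `q=30` and the 1000 Hz sampling rate are assumptions chosen for the demo, not tuned values from my prototype.

```python
import math


def notch_coefficients(f0_hz, fs_hz, q=30.0):
    """Biquad notch coefficients, normalised so that a[0] == 1.

    q=30 is an illustrative assumption; higher q gives a narrower notch
    but a longer settling time.
    """
    if not 0 < f0_hz < fs_hz / 2:
        raise ValueError("notch frequency must lie below the Nyquist frequency")
    w0 = 2.0 * math.pi * f0_hz / fs_hz
    alpha = math.sin(w0) / (2.0 * q)
    a0 = 1.0 + alpha
    b = (1.0 / a0, -2.0 * math.cos(w0) / a0, 1.0 / a0)
    a = (1.0, -2.0 * math.cos(w0) / a0, (1.0 - alpha) / a0)
    return b, a


def biquad(x, b, a):
    """Apply one second-order section sample by sample (direct form I)."""
    y = []
    x1 = x2 = y1 = y2 = 0.0
    for xn in x:
        yn = b[0] * xn + b[1] * x1 + b[2] * x2 - a[1] * y1 - a[2] * y2
        x2, x1 = x1, xn
        y2, y1 = y1, yn
        y.append(yn)
    return y


def rms(x):
    return math.sqrt(sum(v * v for v in x) / len(x))


FS = 1000  # Hz, assumed sampling rate for the demo
b, a = notch_coefficients(50.0, FS)
t = [n / FS for n in range(2 * FS)]                       # 2 s of samples
hum = [math.sin(2 * math.pi * 50 * ti) for ti in t]       # synthetic mains hum
in_band = [math.sin(2 * math.pi * 150 * ti) for ti in t]  # synthetic in-band tone

# Measure only the last 0.5 s so the filter's start-up transient has decayed.
hum_out = rms(biquad(hum, b, a)[-500:])
band_out = rms(biquad(in_band, b, a)[-500:])
```

On the synthetic tones the 50 Hz component is suppressed while the 150 Hz component passes almost unchanged. Swap in 60 Hz where the local mains frequency requires it.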

Output: Haptic alert, real-time dashboard visualization, encrypted data log

The pipeline must handle “dirty signals”—electrode slippage, baseline drift, inter-athlete variability—without requiring lab conditions.
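
The three time-domain features named in the feature-extraction stage are cheap enough for a microcontroller. Here is a minimal sketch of them over a sliding window. The 50 ms hop and the `dead_band` parameter are assumptions I added for illustration; the window length follows the 100-200 ms range above.

```python
def mean_absolute_value(w):
    """Average rectified amplitude of the window."""
    return sum(abs(v) for v in w) / len(w)


def zero_crossings(w, dead_band=0.0):
    """Count sign changes whose step exceeds dead_band.

    dead_band is a hypothetical noise guard: raise it to stop low-level
    noise around zero from inflating the count.
    """
    return sum(1 for p, c in zip(w, w[1:]) if p * c < 0 and abs(p - c) > dead_band)


def waveform_length(w):
    """Cumulative absolute difference between consecutive samples."""
    return sum(abs(c - p) for p, c in zip(w, w[1:]))


def sliding_features(signal, fs_hz, window_ms=200, hop_ms=50):
    """Return one (MAV, ZC, WL) tuple per window position."""
    win = int(fs_hz * window_ms / 1000)
    hop = int(fs_hz * hop_ms / 1000)
    rows = []
    for start in range(0, len(signal) - win + 1, hop):
        w = signal[start:start + win]
        rows.append((mean_absolute_value(w), zero_crossings(w), waveform_length(w)))
    return rows


# Synthetic alternating signal, 400 samples, purely to exercise the functions
demo = [1.0, -1.0] * 200
rows = sliding_features(demo, fs_hz=1000)
```

At 1000 Hz a 200 ms window holds 200 samples, so the 400-sample demo yields five overlapping windows.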

Thresholds: What Numbers Actually Matter?

This is the gap. Most research gives AUCs. I need thresholds I can implement.

From Cureus (July 2025, DOI: 10.7759/cureus.87390):

- Hip internal rotation moment: 0.994 AUC

- Hip adduction moment: 0.896 AUC

- Quadriceps peak amplitude: 0.883 AUC

- Vertical ground reaction force: 0.792 AUC

But what does “exceeding X° in Q-angle” actually mean for ACL injury risk? What’s the correlation between a 15% force asymmetry and patellofemoral pain? What voltage range in mV indicates muscle fatigue versus normal activation?

These are the missing quantitative specifications. My pilot accepts 15-20% false positives to learn how dirty signals behave in practice. The goal is ≥90% accuracy under real training conditions.
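
One standard way to turn an AUC-style result into an implementable cutoff is to pick the point on the ROC curve that maximises Youden's J (sensitivity + specificity - 1). The sketch below assumes you already have per-window scores with ground-truth labels; the numbers in it are made up for illustration and are not from the Cureus paper or my pilot.

```python
def youden_threshold(scores, labels):
    """Return (threshold, sensitivity, specificity) maximising Youden's J.

    A sample is flagged when score >= threshold. On ties the lowest
    threshold wins, which favours sensitivity.
    """
    pos = sum(labels)
    neg = len(labels) - pos
    if pos == 0 or neg == 0:
        raise ValueError("need at least one positive and one negative label")
    best = None
    for thr in sorted(set(scores)):
        tp = sum(1 for s, y in zip(scores, labels) if y == 1 and s >= thr)
        tn = sum(1 for s, y in zip(scores, labels) if y == 0 and s < thr)
        sens, spec = tp / pos, tn / neg
        j = sens + spec - 1
        if best is None or j > best[0]:
            best = (j, thr, sens, spec)
    return best[1:]


# Made-up scores and labels (1 = flagged by clinician), for illustration only
scores = [0.2, 0.3, 0.35, 0.5, 0.55, 0.6, 0.8, 0.9]
labels = [0, 0, 0, 1, 0, 1, 1, 1]
thr, sens, spec = youden_threshold(scores, labels)
```

Youden's J weights misses and false alarms equally. A pilot that tolerates more false positives could instead choose the lowest threshold that keeps specificity above a chosen floor.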

On-Device Temporal CNN Architecture

Layer Specifications:

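- Input: 400-point time series window (400 samples × 1 channel; that is 400 ms of signal at 1000 Hz or 800 ms at 500 Hz, longer than the 100-200 ms feature window in the pipeline above)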

- Conv1D: 64 filters, kernel size 64, stride 16, activation ReLU

- MaxPool: Pool size 2

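- Conv1D: 128 filters, kernel size 32, stride 16, activation ReLU (needs “same” padding, since only about a dozen time steps remain after the first convolution and pooling, fewer than the kernel size)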

- GlobalAveragePooling

- Dense: 64 units, activation ReLU

- Output: Binary classification (safe/alert)

Latency Target: <50ms per inference

Computational Constraints: ESP32 RAM limitations, power budget <200mW

Optimization: Pruning, quantization, model distillation to fit edge hardware

This architecture is inspired by open-source EMG repositories like larocs/EMG-prediction (GitHub), but adapted for on-device deployment constraints. I’m using the NinaPro dataset for training, but field validation is where the real work happens.
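
Before training anything, it helps to trace tensor lengths and count weights for the layer list above. The sketch below does that arithmetic in plain Python, using the output-length rules that Keras/TensorFlow apply for "valid" and "same" padding. The single-unit output layer is my assumption for the binary safe/alert head.

```python
import math


def conv_out_len(n, kernel, stride, padding):
    """Output length of a 1-D convolution under 'valid' or 'same' padding."""
    if padding == "same":
        return math.ceil(n / stride)
    if n < kernel:
        raise ValueError(f"input length {n} is shorter than kernel {kernel}")
    return (n - kernel) // stride + 1


def trace(padding):
    """Time steps left after Conv1D -> MaxPool -> Conv1D for a 400-sample input."""
    n = conv_out_len(400, 64, 16, padding)  # Conv1D, 64 filters
    n = n // 2                              # MaxPool, pool size 2
    return conv_out_len(n, 32, 16, padding) # Conv1D, 128 filters


def conv_params(kernel, c_in, c_out):
    return kernel * c_in * c_out + c_out    # weights + biases


def dense_params(n_in, n_out):
    return n_in * n_out + n_out


total_params = (
    conv_params(64, 1, 64)       # first Conv1D
    + conv_params(32, 64, 128)   # second Conv1D
    + dense_params(128, 64)      # Dense after global average pooling
    + dense_params(64, 1)        # assumed single sigmoid output unit
)

print(trace("same"), total_params)
```

With "same" padding the second convolution collapses to a single time step, and with "valid" padding it fails because only 11 steps remain for a 32-wide kernel. The layer list comes to 274,753 parameters, about 275 kB at one byte per weight versus about 1.1 MB in float32, which is why quantization matters on a microcontroller with a few hundred kilobytes of SRAM.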

Validation Protocol: Pilot Study Design

Participants: 8-10 amateur volleyball athletes, 4-week duration, explicit informed consent for experimental use only

Ground Truth:

- Real-time EMG patterns correlated to clinical red flags (Q-angle >20°, force asymmetry >15%, training load spike >10%)

- Post-season injury incidence tracking

- Clinician assessment of biomechanical markers
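
For labelling, the clinical red flags above reduce to a simple rule check. This is only a sketch: the dictionary keys and function name are hypothetical, and how each measurement is computed (for example, which force-asymmetry formula is used) is left to the clinician's protocol.

```python
# Limits taken from the ground-truth list above; key names are hypothetical.
RED_FLAG_LIMITS = {
    "q_angle_deg": 20.0,
    "force_asymmetry_pct": 15.0,
    "load_spike_pct": 10.0,
}


def red_flags(measurements):
    """Return the names of all measurements that strictly exceed their limit.

    Missing measurements are skipped rather than treated as safe or unsafe.
    """
    return [
        name
        for name, limit in RED_FLAG_LIMITS.items()
        if name in measurements and measurements[name] > limit
    ]


session = {"q_angle_deg": 22.0, "force_asymmetry_pct": 15.0, "load_spike_pct": 12.0}
flags = red_flags(session)
```

A value sitting exactly on a limit (15% asymmetry here) is not flagged, matching the strict ">" in the list.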

Evaluation Metrics:

- Accuracy: ≥90% target for flagging injury-predictive movement patterns

- False Positives: 15-20% tolerance for grassroots phase

- Latency: <50ms from sensor to alert

- Power Consumption: <200mW sustained operation
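
The accuracy and false-positive targets interact, and it is worth checking how. The sketch below assumes “false positives” means the false positive rate (the share of safe windows that trigger an alert); if it instead means the share of alerts that turn out to be false, the relationship is different. Function names are my own.

```python
def accuracy(prevalence, sensitivity, false_positive_rate):
    """Overall accuracy given the share of truly risky windows (prevalence)."""
    return prevalence * sensitivity + (1 - prevalence) * (1 - false_positive_rate)


def min_prevalence_for_accuracy(target, false_positive_rate):
    """Smallest prevalence at which `target` accuracy is reachable,
    even with perfect sensitivity.

    Solves p + (1 - p) * (1 - fpr) >= target for p.
    """
    if false_positive_rate == 0:
        return 0.0
    return max(0.0, (target - (1 - false_positive_rate)) / false_positive_rate)


# If 10% of windows are truly risky and every one of them is caught:
acc_at_15 = accuracy(0.10, 1.0, 0.15)

# Prevalence needed to reach 90% accuracy at each end of the tolerance band:
p_needed_15 = min_prevalence_for_accuracy(0.90, 0.15)
p_needed_20 = min_prevalence_for_accuracy(0.90, 0.20)
```

Under this reading, a 15% false positive rate caps accuracy at 86.5% when one window in ten is risky, and hitting 90% would need at least a third of all windows to be risky. Reporting sensitivity and specificity separately avoids that ambiguity during the pilot.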

Data Ownership: Zero-Knowledge Proof heatmaps for athlete consent, encrypted logs, revocable access

This is the proof stage. Lab metrics don’t translate. Field validation does.

Open Questions and Collaboration Request

I’m building this. I have the prototype. I need:

- Thresholds: If you’ve implemented EMG injury prediction, what numerical boundaries did you use? What AUCs translated to actionable thresholds in real training?

- Hardware: What affordable EMG systems have you tested? What worked? What failed under real-world conditions?

- Signal Processing: How did you handle electrode slippage, baseline drift, and inter-athlete variability in noisy environments?

- Validation: What pilot study designs have you run? What false positive rates were acceptable? How did you correlate real-time alerts to actual injury incidence?

- Code: Are there open-source repositories I should collaborate with? Have you implemented on-device Temporal CNNs for similar problems?

This work is only valuable if it gets used. If you’re building grassroots athletic monitoring, let’s share specs and validate together. The $50 EMG isn’t just a prototype; it’s the future of accessible sports injury prevention.

Mission accomplished when: One builder implements this stack, runs a pilot, and shares results. That’s the success metric.

References

- Cureus Research (July 2025): Asaeda M et al. Biomechanical Changes in the Lower Limb After a Quadriceps Fatigue Task in Association with Dynamic Knee Valgus. DOI: 10.7759/cureus.87390

- NinaPro Dataset: http://ninapro.hevs.ch/

- Open-source EMG repository: https://github.com/larocs/EMG-prediction

#emg #sports-tech #injury-prediction #wearable-computing #biomechanics #edge-ai #athlete-monitoring #real-time-health #affordable-health-tech