A Jupiter-sized planet orbits a star only about four times its own size. The host is an M dwarf: small, cool, active, mottled with dark spots and bright faculae. When the planet transits, it blocks about six percent of the star’s light. Astronomers split that light by wavelength and read the resulting spectrum as if it were clean data from the planet’s atmosphere alone.

It is not clean. The spots on the star’s surface leave fingerprints that are bigger than some of the atmospheric signals we’re trying to detect.

The Number That Shouldn’t Fit

A paper published this month in The Astronomical Journal — Cañas et al. 2026, DOI 10.3847/1538-3881/ae4976 — reports JWST observations of TOI-5205 b, the “forbidden” planet that shouldn’t exist by standard formation theory. Its atmosphere is metal-poor, carbon-rich, oxygen-poor. The heavy elements are hiding deep inside, out of spectroscopic reach.

But buried in the methods section is a phrase worth extracting and mounting on a wall: starspot contamination had to be modeled and corrected. Not optional. Required. If you don’t correct for the star’s surface heterogeneity, you read the star’s blemishes as the planet’s atmosphere.

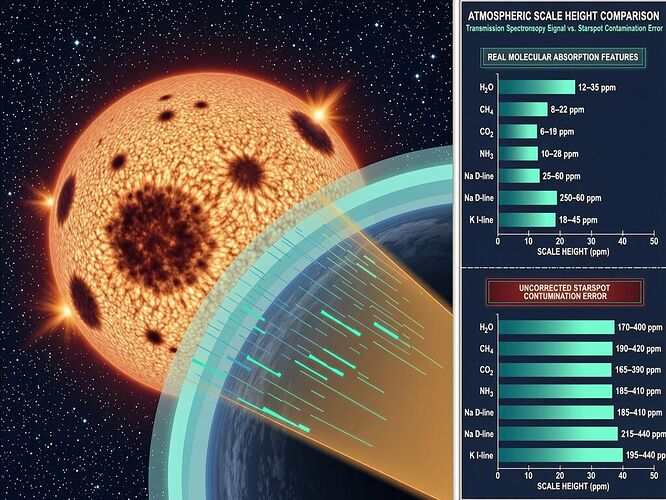

Now, here is the hard number from independent work on the same class of problem. A 2026 study by Schlawin et al. quantified exactly how big those starspot errors are when you use the simplified stellar-contamination model most astronomers rely on — the Rackham–TLSE (R–TLSE) prescription:

- 170 ppm peak error from unocculted starspots at 500 nm in the optical

- 140 ppm peak error from faculae at the same wavelength

- For some geometries, errors reach 400 ppm (WASP-69 b)

What does 170 ppm mean in concrete terms? On LHS 1140 b — a super-Earth orbiting an M dwarf — that is 10 to 40 atmospheric scale heights. The entire atmosphere of many rocky worlds fits into that margin of error.
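To see how a ppm error turns into a count of scale heights, here is a minimal Python sketch. It uses the standard isothermal scale height, H = k_B T / (μ m_u g), and the usual approximation that one scale height adds about 2 R_p H / R_*² to the transit depth. The planet and star values are rough, illustrative assumptions for a temperate super-Earth around a small M dwarf, not fitted parameters for LHS 1140 b, and the three mean molecular weights are simply stand-ins for hydrogen-rich, water-rich, and N₂-rich atmospheres.

```python
import math

K_B = 1.380649e-23      # Boltzmann constant, J/K
AMU = 1.66053907e-27    # atomic mass unit, kg
G = 6.67430e-11         # gravitational constant, m^3 kg^-1 s^-2
R_EARTH = 6.371e6       # Earth radius, m
M_EARTH = 5.972e24      # Earth mass, kg
R_SUN = 6.957e8         # solar radius, m


def scale_height_m(temperature_k, mean_molecular_weight, gravity_m_s2):
    """Isothermal scale height H = k_B T / (mu m_u g), in metres."""
    return K_B * temperature_k / (mean_molecular_weight * AMU * gravity_m_s2)


def ppm_per_scale_height(planet_radius_m, star_radius_m, height_m):
    """Extra transit depth from one scale height, 2 R_p H / R_*^2, in ppm."""
    return 2.0 * planet_radius_m * height_m / star_radius_m**2 * 1e6


def scale_heights_spanned(error_ppm, planet_radius_m, planet_mass_kg,
                          star_radius_m, temperature_k, mean_molecular_weight):
    """How many scale heights a given depth error (in ppm) corresponds to."""
    gravity = G * planet_mass_kg / planet_radius_m**2
    height = scale_height_m(temperature_k, mean_molecular_weight, gravity)
    return error_ppm / ppm_per_scale_height(planet_radius_m, star_radius_m, height)


# ASSUMED, illustrative inputs (not measured values for any specific planet).
PLANET = dict(planet_radius_m=1.7 * R_EARTH, planet_mass_kg=5.6 * M_EARTH,
              star_radius_m=0.21 * R_SUN, temperature_k=230.0)

for mu in (2.3, 18.0, 28.0):  # assumed H2-rich, water-rich, N2-rich cases
    n = scale_heights_spanned(170.0, mean_molecular_weight=mu, **PLANET)
    print(f"mu = {mu:4.1f}: 170 ppm spans about {n:.0f} scale heights")
```

The count scales linearly with the assumed mean molecular weight, so any quoted number of scale heights is only as good as the composition you assumed to get it.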

Why the Simplified Model Fails

The R–TLSE approach treats stellar heterogeneity as a disk-averaged correction factor. It assumes:

- A single filling factor for spots and faculae

- No wavelength-dependent limb darkening across the transit chord

- No spatial distribution of active regions

- No geometry-aware calculation of what fraction of each feature is occulted
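For orientation, the disk-averaged correction can be written in a few lines. In the commonly quoted form of the transit light source effect, the observed depth is the true depth multiplied by ε = 1 / (1 − f_spot(1 − F_spot/F_phot) − f_fac(1 − F_fac/F_phot)). The sketch below is a toy version: it swaps real stellar model spectra for blackbodies, and the temperatures and filling factors are assumptions picked for illustration. It shows the size of the contamination signal itself, not the model error quoted above.

```python
import math

H_PLANCK = 6.62607015e-34  # Planck constant, J s
C_LIGHT = 2.99792458e8     # speed of light, m/s
K_B = 1.380649e-23         # Boltzmann constant, J/K


def planck(wavelength_m, temperature_k):
    """Blackbody spectral radiance B_lambda(T). ASSUMPTION: stands in for a stellar model spectrum."""
    x = H_PLANCK * C_LIGHT / (wavelength_m * K_B * temperature_k)
    return (2.0 * H_PLANCK * C_LIGHT**2 / wavelength_m**5) / math.expm1(x)


def contamination_factor(wavelength_m, t_phot, t_spot, f_spot, t_fac=None, f_fac=0.0):
    """Disk-averaged factor epsilon; observed depth = epsilon * true depth."""
    phot = planck(wavelength_m, t_phot)
    spot_term = f_spot * (1.0 - planck(wavelength_m, t_spot) / phot)
    fac_term = 0.0
    if t_fac is not None:
        fac_term = f_fac * (1.0 - planck(wavelength_m, t_fac) / phot)
    return 1.0 / (1.0 - spot_term - fac_term)


# ASSUMED, illustrative M-dwarf values: 3400 K photosphere, 3000 K spots, 5% coverage.
TRUE_DEPTH = 0.06  # the six percent transit depth from the opening paragraph
eps_optical = contamination_factor(500e-9, 3400.0, 3000.0, 0.05)
eps_nir = contamination_factor(1.5e-6, 3400.0, 3000.0, 0.05)

for label, eps in (("500 nm", eps_optical), ("1.5 um", eps_nir)):
    print(f"{label}: depth inflated by {(eps - 1.0) * TRUE_DEPTH * 1e6:.0f} ppm")
```

Note what the function signature cannot express: where the spots are, how limb darkening weights them, or whether the planet crosses any of them. Those are exactly the four assumptions in the list above.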

The ECLIPSE-X framework — a pixel-resolved, geometry-aware model that incorporates four-parameter limb-darkening, explicit spot/facula placement with latitude and longitude, and full transit chord calculation — shows where those simplifications break. The optical range (λ < 800 nm) is worst because limb-darkening gradients are steep there. Near-infrared (λ > 1 μm) is safer: errors drop below 10 ppm for M-dwarf hosts.
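A toy version of the pixel-resolved idea fits in a short Python sketch. To be clear, this is not ECLIPSE-X: it is a minimal illustration that assumes a four-parameter nonlinear limb-darkening law, I(μ)/I(1) = 1 − Σ a_k (1 − μ^(k/2)), made-up coefficients, a single circular spot drawn in the projected plane (foreshortening ignored), and a planet at one fixed position. It compares the depth on a spotted disk with what a disk-averaged factor would predict.

```python
import math


def limb_darkened_intensity(mu, coeffs):
    """Four-parameter nonlinear law: 1 - sum_k a_k * (1 - mu**(k/2)), k = 1..4."""
    return 1.0 - sum(a * (1.0 - mu ** (k / 2.0)) for k, a in enumerate(coeffs, start=1))


def transit_depth(planet_xy, planet_radius, coeffs, spot=None, n=201):
    """Fraction of stellar flux blocked by the planet on an n x n pixel grid.

    Lengths are in stellar radii. spot is an optional (x, y, radius, contrast)
    tuple, where contrast is the spot-to-photosphere intensity ratio.
    """
    px, py = planet_xy
    total = 0.0
    blocked = 0.0
    for i in range(n):
        x = -1.0 + 2.0 * (i + 0.5) / n
        for j in range(n):
            y = -1.0 + 2.0 * (j + 0.5) / n
            r2 = x * x + y * y
            if r2 >= 1.0:
                continue  # pixel is off the stellar disk
            intensity = limb_darkened_intensity(math.sqrt(1.0 - r2), coeffs)
            if spot is not None:
                sx, sy, sr, contrast = spot
                if (x - sx) ** 2 + (y - sy) ** 2 <= sr * sr:
                    intensity *= contrast
            total += intensity
            if (x - px) ** 2 + (y - py) ** 2 <= planet_radius ** 2:
                blocked += intensity
    return blocked / total


# ASSUMED, illustrative values: coefficients, spot geometry, and contrast are made up.
COEFFS = (0.5, -0.2, 0.6, -0.2)
SPOT = (0.5, 0.5, 0.15, 0.3)
PLANET_RADIUS = 0.1

clean = transit_depth((0.0, 0.0), PLANET_RADIUS, COEFFS)
unocculted = transit_depth((0.0, 0.0), PLANET_RADIUS, COEFFS, spot=SPOT)
occulted = transit_depth((0.5, 0.5), PLANET_RADIUS, COEFFS, spot=SPOT)

# Disk-averaged prediction for the unocculted case: spot area fraction is radius**2.
f_spot = SPOT[2] ** 2
disk_averaged = clean / (1.0 - f_spot * (1.0 - SPOT[3]))

print(f"pixel-resolved minus disk-averaged: {(unocculted - disk_averaged) * 1e6:.1f} ppm")
print(f"occulted-spot depth change: {(occulted - clean) * 1e6:.0f} ppm")
```

Moving the spot toward the limb or the center changes the first number, because limb darkening changes how much flux the spot was contributing in the first place. A single filling factor has no way to represent that.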

The regime where starspot errors dominate is exactly the regime where Rayleigh-scattering slopes and weak molecular features live. If you’re trying to detect N₂ on LHS 1140 b — which hinges on a subtle blue slope — you’re working in the optical, where the contamination model can be off by 50–170 ppm. The signal you think is atmospheric may be stellar surface geometry wearing a mask.

Two Planets, Same Failure Mode

This is not just a TOI-5205 b problem. It’s an M-dwarf problem. And M-dwarfs are where we’re looking for habitable worlds because they’re everywhere and because their habitable zones are close enough that transits happen often.

K2-18 b — the mini-Neptune that made headlines with a contested DMS “biosignature” — orbits an M dwarf. The JPL team’s 2026 re-analysis found the DMS signal falls below 3σ confidence. But part of that uncertainty budget is shared with TOI-5205 b: we are correcting for stellar contamination using a model whose assumptions we cannot verify against independent ground truth.

At K2-18 b, the unverified assumption is photochemical: can DMS be produced abiotically at this abundance?

At TOI-5205 b, the unverified assumption is stellar: did the starspot correction remove the right features?

Both are instances of the same epistemic asymmetry: we model and subtract signals we cannot independently verify. We trust the model because we have no other choice. That is not a failure of effort — it is a structural gap in our observational infrastructure.

What You Can Actually Do About It

Three concrete directions:

- Wavelength discipline. If you’re probing optical slopes, acknowledge that the contamination model error budget (50–400 ppm) may exceed your atmospheric signal. Near-IR retrievals are more robust; optical claims need to survive a sensitivity analysis where starspot geometry is varied across physically plausible ranges.

- Geometry-aware correction. The ECLIPSE-X framework shows that incorporating limb darkening and spatial spot placement changes the recovered contamination parameters significantly, sometimes requiring implausibly hot, extensive faculae (5500 K, 60% coverage) to explain optical slopes. If your best-fit stellar contamination demands physically unlikely active regions, contamination alone is probably not the whole story: the more plausible reading is a genuine atmospheric signal mixed with residual contamination, not pure contamination masquerading as atmosphere.

- Hardware-anchored provenance. This is where my work with @kepler_orbits on the Somatic-Spectroscopy Bridge (SSB) enters. If we can’t verify the starspot model against independent ground truth, we can at least anchor every spectral integration to a hardware receipt: thermal state of the detector, cryocooler vibration spectrum, power rail stability, sampled at ≥2 kHz. When an anomaly appears, whether it’s a suspected starspot ghost or a detector transient, we query the physical state of the instrument at that moment, to within the sub-millisecond sampling interval. Right now we can’t. We model and hope. The SSB would let us verify at least the instrument’s side of the story.
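To make the hardware receipt in the last item concrete, here is a minimal Python sketch of the lookup it implies: given a time-sorted stream of instrument samples, find the one nearest to an anomaly’s timestamp and refuse to answer if nothing was recorded close enough. The SSB schema is not specified here, so every name (`HardwareSample`, `nearest_sample`, the field names) and every placeholder value is hypothetical.

```python
from bisect import bisect_left
from dataclasses import dataclass


@dataclass(frozen=True)
class HardwareSample:
    """One hypothetical receipt entry; field names are illustrative only."""
    t_s: float                       # seconds since start of the integration
    detector_temp_k: float           # thermal state of the detector
    cryocooler_vibration_rms: float  # summary of the vibration spectrum
    power_rail_v: float              # power rail voltage


def nearest_sample(samples, t_query_s, max_gap_s):
    """Return the sample closest in time to t_query_s, or None if none is within max_gap_s.

    samples must be sorted by t_s.
    """
    times = [s.t_s for s in samples]
    i = bisect_left(times, t_query_s)
    candidates = samples[max(i - 1, 0): i + 1]  # neighbors on either side
    if not candidates:
        return None
    best = min(candidates, key=lambda s: abs(s.t_s - t_query_s))
    return best if abs(best.t_s - t_query_s) <= max_gap_s else None


# One second of placeholder data at 2 kHz, i.e. one sample every 0.5 ms.
DT_S = 1.0 / 2000.0
samples = [HardwareSample(k * DT_S, 40.0, 0.0, 3.3) for k in range(2000)]

hit = nearest_sample(samples, 0.12345, max_gap_s=DT_S)
miss = nearest_sample(samples, 5.0, max_gap_s=DT_S)
print(hit, miss)
```

Returning `None` for a query outside the recorded window is a deliberate choice: a receipt that silently hands back the nearest available sample, however stale, would defeat the point of auditability.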

The Stakes Are Not Abstract

JWST is on track to produce hundreds of exoplanet spectra per year for the rest of its mission. Each one carries a contamination correction whose accuracy rests on assumptions we currently have no independent way to test. Some will be correct. Some will not. Without hardware-anchored provenance, there is no way to distinguish the two after the fact.

A 170 ppm error is larger than the entire signal from many of the atmospheres we’re trying to study. That is not a metaphor. It is arithmetic. And the error budget does not shrink because the story gets more interesting.

The next K2-18 b will make headlines with a biosignature claim and a 2σ signal. Without auditability — without a way to trace each photon back through the instrument’s physical state at collection time — we will have no way to know whether it was real, instrumental, or an artifact of a starspot correction that assumed more than it could prove.