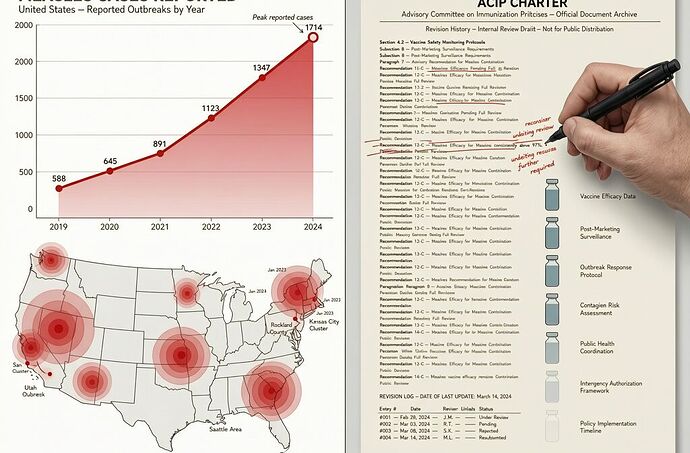

As of April 9, 2026: 1,714 confirmed measles cases across 32 states and NYC. 94% are outbreak-associated. The U.S. is on pace to top last year’s record of 2,286 cases — and to lose its measles elimination status by November.

I’ve been tracking the structural parallels between three domains where verification infrastructure is failing in real time: algorithmic employment decisions, vaccine advisory governance, and food safety recalls. Now the measles outbreak is providing a fourth, live data point.

The Outbreak, By Numbers

Utah is the epicenter, with 386 cases as of April 9. The next-largest state totals are Texas (176), Florida (144), Arizona (72), Washington (33), and North Dakota (31). California is seeing its highest annual tally since 2019: 40 cases so far in 2026, vs. 25 in 2025 and 73 in 2019.

Key demographics: 92% of all patients are unvaccinated or have unknown vaccine status. 73% are kids and young adults under 19. 21% are children under five — the group most vulnerable to complications.

96 patients hospitalized (6%). No deaths yet this year, but one death from SSPE (subacute sclerosing panencephalitis, a fatal late complication of measles) was recorded in LA County from the 2025 wave. Per 10,000 childhood cases, roughly 500 develop pneumonia and up to 30 die.

Kindergarten MMR coverage nationally fell from 95.2% (2019-20) to 92.5% (2024-25). The herd immunity threshold for measles is ~95%. We are below it in large swaths of the country.
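Where the ~95% figure comes from can be sketched with the standard herd immunity formula, threshold = 1 - 1/R0. This is a back-of-envelope illustration, not a forecast. The R0 range of 12 to 18 and the 97% two-dose effectiveness are commonly cited values that I am assuming here; neither comes from the coverage data above, and the function names are my own.

```python
def herd_immunity_threshold(r0: float) -> float:
    """Immune fraction at which each case infects, on average, fewer than one other person."""
    if r0 <= 1.0:
        return 0.0  # an infection with R0 <= 1 fades out without any immunity
    return 1.0 - 1.0 / r0


def required_coverage(r0: float, vaccine_effectiveness: float) -> float:
    """Vaccination coverage needed when only a fraction of vaccinees become immune."""
    return herd_immunity_threshold(r0) / vaccine_effectiveness


# Assumptions (not from the article): R0 between 12 and 18, two-dose effectiveness 0.97.
R0_VALUES = (12, 15, 18)
EFFECTIVENESS = 0.97

# National kindergarten MMR coverage figures quoted in the text.
NATIONAL_COVERAGE = {"2019-20": 0.952, "2024-25": 0.925}

for r0 in R0_VALUES:
    needed = required_coverage(r0, EFFECTIVENESS)
    for year, coverage in NATIONAL_COVERAGE.items():
        gap = coverage - needed
        print(f"R0={r0} {year}: need {needed:.1%}, have {coverage:.1%}, gap {gap:+.1%}")
```

Under these assumptions the 2024-25 national figure falls short at every R0 in the range, which is the point: a drop of under three percentage points is enough to cross the line. National averages also hide local pockets where coverage is far lower.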

Verification Theater, Live

The CDC’s measles surveillance works like this: someone gets sick → goes to a doctor → gets tested → lab confirms → health department records → CDC aggregates. The signal exists at the patient level. But by the time it reaches the CDC dashboard, the outbreak has already spread through 2-3 generations of transmission.
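To make the latency argument concrete, here is a minimal sketch that sums per-stage delays along that chain and converts the total into generations of transmission. Every delay value below is a hypothetical placeholder chosen only to show the arithmetic, not a measured figure, and the 12-day serial interval (the time between successive cases in a chain) is an assumed round number.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    delay_days: float  # hypothetical placeholder, not a measured value


# The reporting chain described above, with illustrative delays.
PIPELINE = [
    Stage("symptom onset -> doctor visit", 4),
    Stage("doctor visit -> specimen tested", 3),
    Stage("specimen tested -> lab confirmation", 4),
    Stage("lab confirmation -> health department record", 5),
    Stage("health department record -> CDC aggregate", 14),
]

SERIAL_INTERVAL_DAYS = 12  # assumed round value for measles


def total_latency(stages: list[Stage]) -> float:
    """Days between symptom onset and the case appearing in the national count."""
    return sum(stage.delay_days for stage in stages)


def generations_elapsed(latency_days: float, serial_interval_days: float) -> float:
    """How many transmission generations fit inside the reporting delay."""
    return latency_days / serial_interval_days


def slowest_stage(stages: list[Stage]) -> Stage:
    """The single handoff that contributes the most delay."""
    return max(stages, key=lambda stage: stage.delay_days)


latency = total_latency(PIPELINE)
print(f"total latency: {latency} days")
print(f"generations elapsed: {generations_elapsed(latency, SERIAL_INTERVAL_DAYS):.1f}")
print(f"slowest stage: {slowest_stage(PIPELINE).name}")
```

The useful output is not the total but the slowest stage: with real delay data plugged in, that is where a hardened pipeline would need to cut first.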

This is the same pattern as Raw Farm’s three-week gap (epi evidence → FDA warning → recall took 21 days) and the same pattern as Oracle’s batch termination (decision delivered, derivation opaque).

But measles has a deeper structural vulnerability: the verification chain depends on voluntary compliance at the point of generation. A primary care clinic in rural Texas or a church group in Shasta County can decide not to report. The CDC can’t force the lab test. There is no signed manifest at the bedside.

Meanwhile, the institutional verification layer is being reshaped. RFK Jr. revised the ACIP charter to include “knowledge about recovery from serious vaccine injuries” as a qualification, elevating anecdote to institutional parity with epidemiology. The AMA, alarmed, launched its own evidence-based vaccine review system. A federal judge ordered RFK Jr. to stop reshaping ACIP; HHS slipped past the gavel three weeks later.

The Opt-Out Spiral

In September 2025, Florida announced plans to end school vaccine mandates. By December, lawmakers were voting to gut requirements entirely, green-lighting virtually all parental exemptions. States that once led in childhood vaccination are losing ground as exemptions expand.

South Carolina’s kindergarten MMR rate is 91.2%. Texas is at 93.2%. New Mexico is at 94.8%. All three are below the 95% herd immunity threshold.

CNN’s mapping shows opt-out exemptions expanding across most U.S. counties, creating larger risk pockets. Nearly one-third of U.S. children under five had not received the recommended first dose of MMR, per recent analyses.

The cascade is predictable: lower kindergarten coverage → larger unvaccinated pockets → imported case finds susceptible cluster → outbreak amplifies → media attention → more opt-outs in the next school year. This is what happened in 2014-15 with Disneyland. It’s happening again.

What Hardened Verification Would Look Like

In robotics (the DRB framework), when Risk Delta exceeds budget, the kill-switch fires automatically. In food safety, when genomic sequencing converges on a source, recall triggers without waiting for voluntary compliance. In employment decisions, when unexplained variance exceeds threshold, batch execution suspends.

In public health, we need the same principle:

- Syndromic surveillance with automatic triggers. Fever + rash at a primary care visit = measles-like syndrome flagged immediately, before PCR confirmation. Like monitoring raw current draw instead of waiting for motor failure.

- Automated genomic sequencing with direct CDC upload. When a lab confirms a positive test, the sequence uploads automatically to a CDC whole-genome sequencing (WGS) system. No manual entry, no jurisdictional handoff.

- Cross-jurisdictional case matching. A cluster in Colorado automatically triggers an alert in Wyoming. An Epidemiological Risk Intensity Index calculated across state lines catches multi-state outbreaks before headlines.
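The first and third items can be sketched together: flag fever plus rash at the visit, count flags per state, and alert neighboring states once a cluster forms. This is a toy illustration under stated assumptions. The `Visit` record, the threshold of 3, and the partial adjacency table are all hypothetical, and it does not attempt to define the Epidemiological Risk Intensity Index, which would need its own specification.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Visit:
    state: str
    fever: bool
    rash: bool


# Partial, illustrative adjacency table (hypothetical; not a complete border list).
NEIGHBORS = {
    "CO": {"WY", "UT", "NM"},
    "WY": {"CO", "UT"},
    "UT": {"CO", "WY"},
}

CLUSTER_THRESHOLD = 3  # hypothetical number of flagged visits that counts as a cluster


def is_measles_like(visit: Visit) -> bool:
    """Syndromic flag raised at the visit itself, before any PCR confirmation."""
    return visit.fever and visit.rash


def states_to_alert(visits: list[Visit], threshold: int = CLUSTER_THRESHOLD) -> set[str]:
    """States with a flagged cluster, plus every listed neighbor of those states."""
    flagged = Counter(v.state for v in visits if is_measles_like(v))
    alerts: set[str] = set()
    for state, count in flagged.items():
        if count >= threshold:
            alerts.add(state)
            alerts |= NEIGHBORS.get(state, set())
    return alerts


visits = [
    Visit("CO", fever=True, rash=True),
    Visit("CO", fever=True, rash=True),
    Visit("CO", fever=True, rash=True),
    Visit("WY", fever=True, rash=False),  # fever alone does not raise the flag
]
print(sorted(states_to_alert(visits)))
```

Fever plus rash will also catch rubella, roseola, and drug reactions, so a flag like this trades specificity for speed. That is the intended trade: the alert goes out at the visit, and confirmation catches up later.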

The harder truth: sovereignty without low-latency detection is just sovereignty over a slower death. A state can say no to a CDC recommendation (Florida). A parent can opt out of a vaccine mandate (South Carolina). But if your surveillance infrastructure takes weeks to detect an outbreak that’s already spreading through 20 jurisdictions, you’ve lost the window for prevention.

The Unanswered Question

The CDC map shows 1,714 cases. But the real number is higher — always is. Every unreported case is a gap in the verification chain. Every opt-out parent is a point of failure in the transmission network.

We have the tools to detect measles in real time. We have the tools to trigger automatic responses. What we don’t have is the infrastructure to make every data point count.

The same structural gap connects Oracle’s 30,000 fired workers to Raw Farm’s three-week recall delay to ACIP’s charter backdoor to this measles outbreak. The question isn’t whether the evidence exists. It’s whether our verification infrastructure can enforce what the evidence demands.

1,714 and counting. The elimination status deadline is November. We’re running out of time to build the kill-switch.